
Snap type: Transform

Description: This Snap takes an expression, evaluates it, and writes the result to the specified target path. If an expression fails to evaluate, use the Views tab to specify error handling. For more information on expressions, see Understanding Expressions in SnapLogic.

This Snap supports both binary and document data streams. The default input and output views are Document, but you can select Binary from the Views tab in the Snap's settings.

Structural Transformations

The following structural transformations from the Structure Snap are supported in the Mapper Snap:

For performance reasons, the Mapper does not copy arrays or objects written to the target path. If you write the same array or object to more than one target path and plan to modify it, make the copy yourself. For example, given the array "$myarray" and the following mappings:

Any later change made to either "$MyArray" or "$OtherArray" appears in both, because both target paths refer to the same array. To avoid this, make a copy of the array as shown below:

The same is true for objects, except you can make a copy using the ".extend()" method as shown below:
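The sharing behavior can be reproduced in plain TypeScript, whose array and object semantics the expression language closely resembles. This is an analogy for illustration, not the Mapper's implementation: the function names are hypothetical, and array spread and object spread stand in for making copies inside the Mapper (such as with the .extend() method mentioned above for objects).

```typescript
// A TypeScript analogy (not SnapLogic code) for writing one array or
// object to two target paths. All names here are hypothetical.

type Mapped = { MyArray: number[]; OtherArray: number[] };

// Both targets receive a reference to the SAME array instance.
function mapWithoutCopy(myarray: number[]): Mapped {
  return { MyArray: myarray, OtherArray: myarray };
}

// The second target receives a shallow copy, so the targets are independent.
function mapWithCopy(myarray: number[]): Mapped {
  return { MyArray: myarray, OtherArray: [...myarray] };
}

// Objects behave the same way; an object spread makes a shallow copy,
// playing the role that .extend() plays in the Mapper.
function copyObject<T extends object>(obj: T): T {
  return { ...obj };
}

const shared = mapWithoutCopy([1, 2, 3]);
shared.MyArray.push(4); // OtherArray sees the change too

const independent = mapWithCopy([1, 2, 3]);
independent.MyArray.push(4); // OtherArray is unaffected

const person = { name: "Ada" };
const personCopy = copyObject(person);
personCopy.name = "Grace"; // person.name is still "Ada"
```

Both copies in this sketch are shallow: arrays or objects nested inside the copied value are still shared. If the Mapper's copies are likewise shallow, the same caution applies one level down.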

Prerequisites: None

Support and limitations: Works in Ultra Pipelines.

Account: Accounts are not used with this Snap.

Views:

Passing Binary Data

To convert binary data to document data, you can precede the Mapper Snap with the Binary-to-Document Snap. Likewise, to convert the document output of the Mapper Snap to binary data, you can add the Document-to-Binary Snap after the Mapper Snap. You can also perform this conversion within the Mapper Snap itself: set the Mapper Snap to take binary data as its input and output, and use the $content expression.

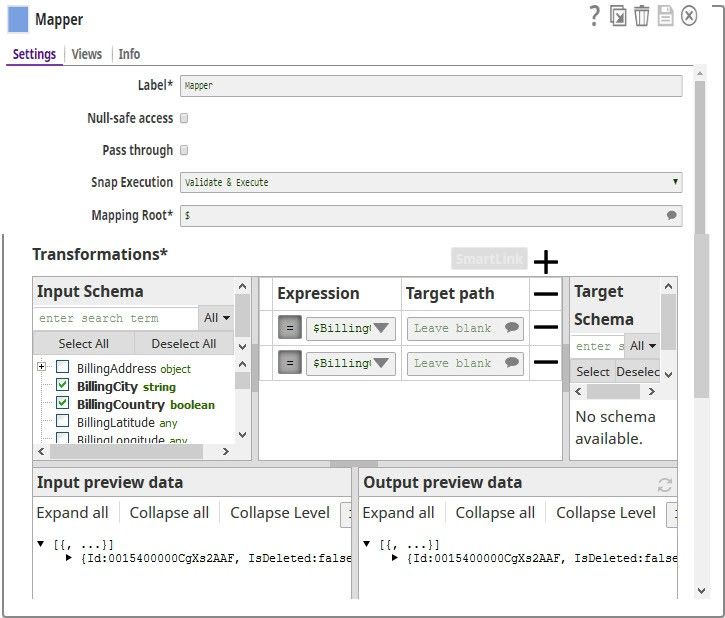

Settings

Label: Required. The name for the Snap. You can modify it to be more specific, especially if you have more than one of the same Snap in your pipeline.

Null-safe access: If enabled, the Snap sets the target value to null when the source path does not exist. For example, the mapping $person.phonenumbers.pop() -> $lastphonenumber may result in an error if $person.phonenumbers does not exist in the source data. Enabling Null-safe access lets the Snap write null to $lastphonenumber instead of causing an error.
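Null-safe access behaves much like TypeScript's optional chaining combined with a null fallback. The sketch below is an analogy under that assumption, not the Snap's implementation; the Source type, the function names, and the phone numbers are hypothetical.

```typescript
// Hypothetical shape of a source document; person and phonenumbers may be missing.
type Source = { person?: { phonenumbers?: string[] } };

// Without null-safe access: assumes the path exists and throws a
// TypeError when any step of the path is missing.
function lastPhoneStrict(src: Source): string | undefined {
  return src.person!.phonenumbers!.pop();
}

// With null-safe access: a missing step in the path yields null instead.
function lastPhoneNullSafe(src: Source): string | null {
  return src.person?.phonenumbers?.pop() ?? null;
}

let strictFailed = false;
try {
  lastPhoneStrict({});
} catch (e) {
  strictFailed = e instanceof TypeError;
}

const missing = lastPhoneNullSafe({}); // null
const present = lastPhoneNullSafe({ person: { phonenumbers: ["555-0100", "555-0199"] } }); // "555-0199"
```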

Pass through: Required. Determines whether the input data is passed through. If not selected, only the data transformation results defined in the mapping section appear in the output document, and the input data is discarded. If selected, all of the original input data is passed into the output document together with the data transformation results.

Default: Not selected.

When to always select Pass through

Always select Pass through if you plan to leave the Target path field blank in the Mapper Snap; otherwise, the Snap throws an error informing you that the field that you want to delete doesn't exist. This is expected behavior. Say you have an input file that contains a number of attributes, but you need only two of them downstream. You connect a Mapper to the Snap supplying the input file, list the two attributes you need in the Expression fields, leave the Target path field blank, and select Pass through. When you execute the pipeline, the Mapper Snap evaluates the input documents or binary data, picks up the two attributes that you want, and passes the entire document or binary data through to the target schema. From the list of available attributes in the target schema, the Mapper Snap picks up the two attributes you listed in the Expression fields and passes them as output. However, if you had not selected the Pass through check box, the target schema would be empty, and the Mapper would throw the error described above.

Mapping Root: Required. Specifies the sub-section of the input data to be mapped. For more information, see Understanding the Mapping Root.

Default: $

Transformations (Mapping table): Required. Each row contains an expression and the target path to which the result of the expression is written. When an expression is evaluated, the source fields it references are removed from the output at the end of the run. For an example, see Successful Mapping under Example Data Output below.

See Understanding Expressions in SnapLogic for more information on the expression language, and Using Expressions for usage guidelines.

Target Path Recommendation

Iris simplifies configuring the Target path property in the Mapper Snap by recommending Target path values for your Expressions. To make these suggestions, Iris analyzes the Expression and Target path mappings in other Pipelines in your Org and suggests exact matches for the Expressions in your current Pipeline. The suggestions are displayed when you click the icon. For example, suppose one of your Pipelines contains the Expression $Emp.Emp_Personal.FirstName with the Target path $FirstName. If you use the Expression $Emp.Emp_Personal.FirstName in a new Pipeline, Iris suggests $FirstName as one of the recommended Target paths. This helps you standardize naming conventions within your Org.

Managing Numeric Inputs in Mapper Expressions

While working with upstream numeric data, you may see unexpected behavior. For example, consider the following mapping:

- Expression: $num + 100

- Target path: $numnew

Say the value passed from upstream for $num is 20.05. You would expect the value of $numnew to be 120.05, but when you execute the Snap, the value of $numnew is 20.05100. This happens because the Mapper Snap reads all incoming data as strings unless the values are explicitly typed as integers (INT) or decimals (FLOAT), so the + operator concatenates the string "20.05" with 100 instead of adding them. To ensure that upstream numeric data is interpreted correctly, parse it as a float. This converts the value into a decimal number, so calculations performed on it in the Mapper Snap work as expected:

- Expression: parseFloat($num) + 100

- Target path: $numnew

The value of $numnew is now 120.05.
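The string-versus-number behavior follows ordinary JavaScript rules for the + operator, which the TypeScript sketch below reproduces. It assumes, as described above, that the upstream value arrives as the string "20.05"; the upstream object is hypothetical.

```typescript
// Hypothetical upstream document: the numeric value arrived as a string.
const upstream: { num: string } = { num: "20.05" };

// string + number concatenates, producing "20.05100".
const concatenated: string = upstream.num + 100;

// Parsing first turns the string into a number, so + performs addition.
const added: number = parseFloat(upstream.num) + 100;

console.log(concatenated); // 20.05100
console.log(added);        // 120.05
```

In JavaScript, parseFloat returns NaN for text that does not start with a number, so it may be worth validating upstream values that could be empty or non-numeric before doing arithmetic on them.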


Mapping Table

The mapping table makes it easier to do the following:

- Determine which fields in a schema are mapped or unmapped.

- Create and manage a large mapping table through drag-and-drop.

- Search for specific fields.

For more information, see Using the Mapping Table.

Example

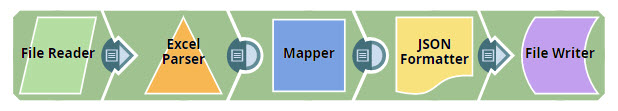

Removing Columns from Excel Files Using Mapper

In this example, you read an Excel file from the SLDB and remove columns that you do not need from the file. You then write the updated data back into the SLDB as a JSON file.

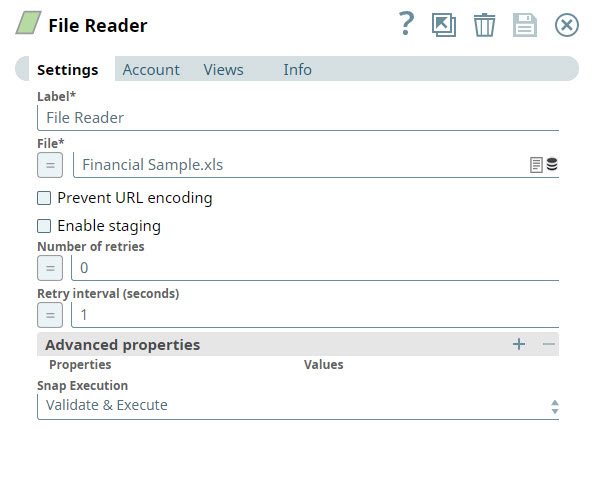

- You add a File Reader Snap to the Canvas and configure it to read the Excel file from which you want to remove specific columns.

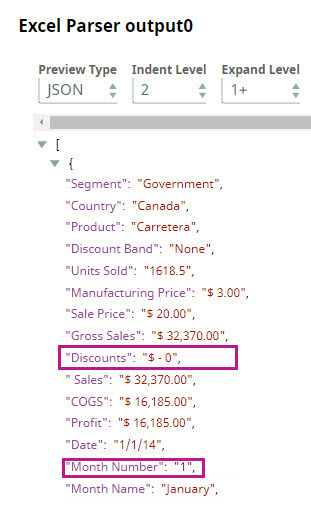

- You parse the file using the Excel Parser Snap. You can preview the parsed data by clicking the icon.

- From the preview, you can see the columns that you want to remove. In this instance, you decide to remove the Discounts and Month Number columns. To do so, you add a Mapper Snap to the Pipeline.

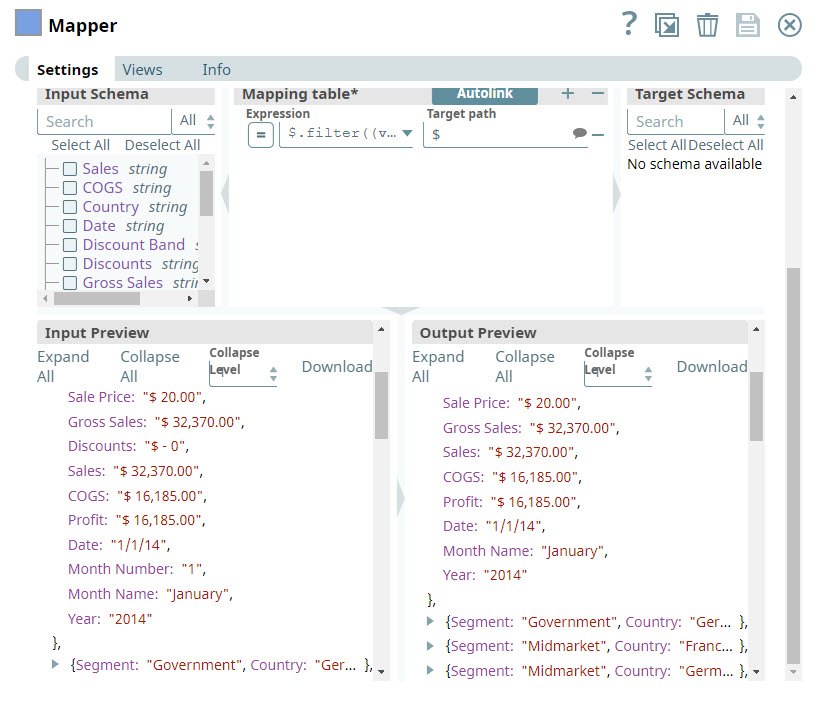

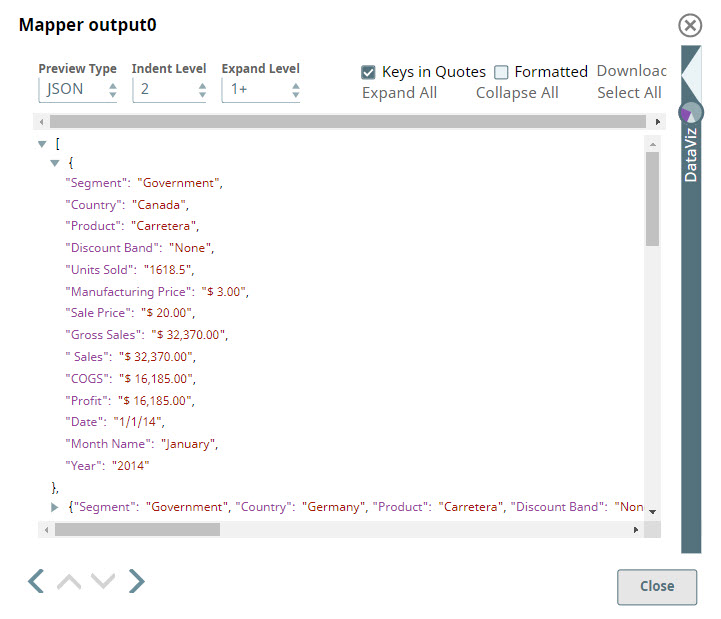

In the Expression field, you enter the expression that removes the Discounts and Month Number columns:

$.filter((value, key) => !key.match("Discounts|Month Number"))

You enter $ in the Target path field to indicate that you want to leave the other columns unchanged.

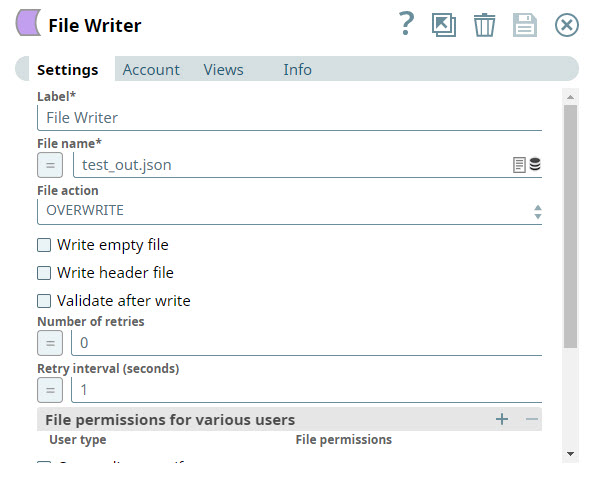

You validate the Snap and can see that the Discounts and Month Number columns are removed.

- You now need to write the updated data back into the SLDB as a JSON file. To do so, you add a JSON Formatter Snap to the Pipeline to convert the documents coming in from the Mapper Snap into binary data. You then add a File Writer Snap and configure it to write the incoming binary data to the SLDB.

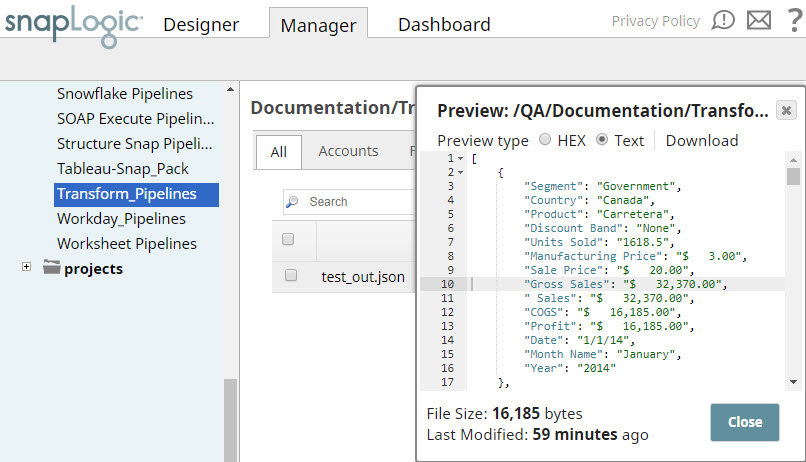

- You can now view the saved file in the destination project in SnapLogic Manager.
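The filter expression used in this example keeps every key of the document that does not match the pattern. The TypeScript function below is a hypothetical re-creation of that logic, assuming match() interprets its string argument as a regular expression, as JavaScript's String.prototype.match does. The sample row and its columns other than Discounts and Month Number are made up.

```typescript
type Row = Record<string, unknown>;

// Mirrors $.filter((value, key) => !key.match("Discounts|Month Number")):
// copy every entry whose key does NOT match the pattern.
function dropColumns(row: Row, pattern: RegExp): Row {
  const result: Row = {};
  for (const key of Object.keys(row)) {
    if (!pattern.test(key)) {
      result[key] = row[key];
    }
  }
  return result;
}

// Hypothetical parsed Excel row.
const row: Row = { Product: "Widget", Sales: 1200, Discounts: 50, "Month Number": 3, Month: "March" };
const cleaned = dropColumns(row, /Discounts|Month Number/);
console.log(Object.keys(cleaned)); // [ 'Product', 'Sales', 'Month' ]
```

Because the pattern is not anchored, it also removes any column whose name merely contains Discounts or Month Number, such as a hypothetical Total Discounts column. A pattern such as ^(Discounts|Month Number)$ would restrict the match to the exact column names.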

Example Data Output

Successful Mapping

If your source data looks like:

```json
{
  "first_name": "John",
  "last_name": "Smith",
  "phone_num": "123-456-7890"
}
```

And your mapping (with Pass through selected) looks like:

- Expression: $first_name.concat(" ", $last_name)

- Target path: $full_name

Your outgoing data will look like:

```json
{
  "full_name": "John Smith",
  "phone_num": "123-456-7890"
}
```
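The following TypeScript sketch models this mapping under two assumptions taken from the setting descriptions: source fields referenced by the expression are dropped from the output, and the remaining fields survive only when Pass through is selected. The Contact type and the mapFullName function are hypothetical.

```typescript
interface Contact {
  first_name: string;
  last_name: string;
  phone_num: string;
}

// Models the mapping row: $first_name.concat(" ", $last_name) -> $full_name.
function mapFullName(input: Contact, passThrough: boolean): Record<string, string> {
  // Separate the referenced source fields from the rest of the document.
  const { first_name, last_name, ...rest } = input;
  const full_name = first_name.concat(" ", last_name);
  // With Pass through, unmapped fields (here phone_num) are kept.
  return passThrough ? { ...rest, full_name } : { full_name };
}

const source: Contact = { first_name: "John", last_name: "Smith", phone_num: "123-456-7890" };
console.log(mapFullName(source, true));  // { phone_num: '123-456-7890', full_name: 'John Smith' }
console.log(mapFullName(source, false)); // { full_name: 'John Smith' }
```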

Unsuccessful Mapping

If your source data looks like:

```json
{
  "first_name": "John",
  "last_name": "Smith",
  "phone_num": "123-456-7890"
}
```

And your mapping looks like:

- Expression: $middle_name.concat(" ", $last_name)

- Target path: $full_name

An error is thrown because $middle_name does not exist in the source data.

Example

Escaping Special Characters in Source Data

This example demonstrates how you can use the Mapper Snap to customize source data containing special characters so that it is correctly read and interpreted by downstream Snaps.

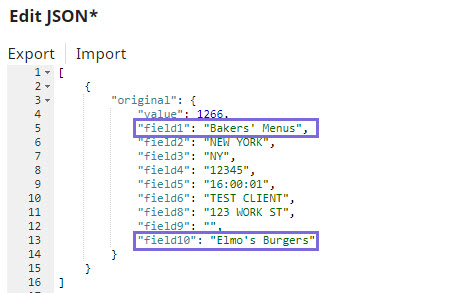

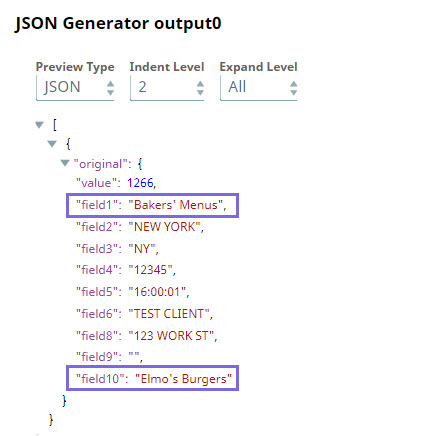

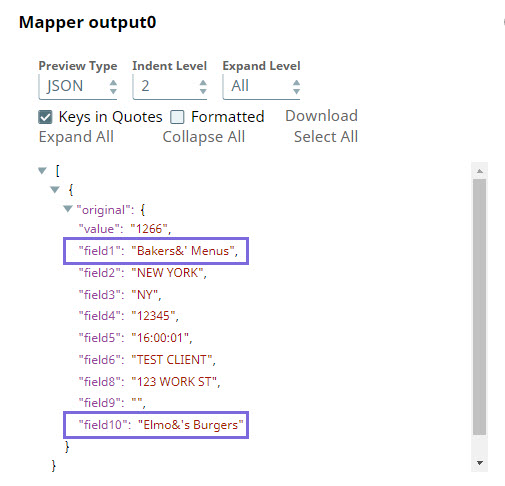

In the sample Pipeline, the JSON Generator Snap provides custom JSON data in which the values of field1 and field10 contain the special character (').

The output preview of the JSON Generator Snap displays the special character correctly:

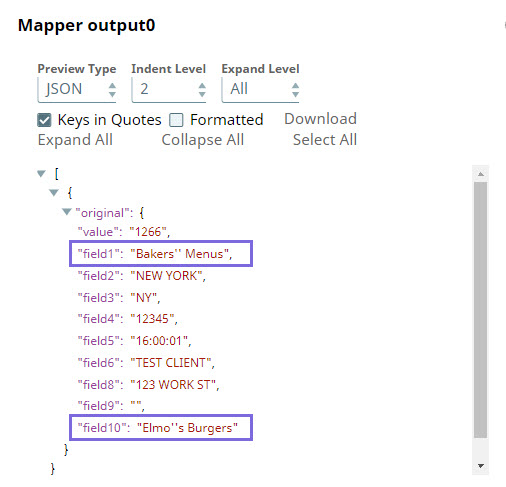

Before sending this data to downstream Snaps, you may need to prefix the special characters with an escape character so that downstream Snaps interpret them correctly.

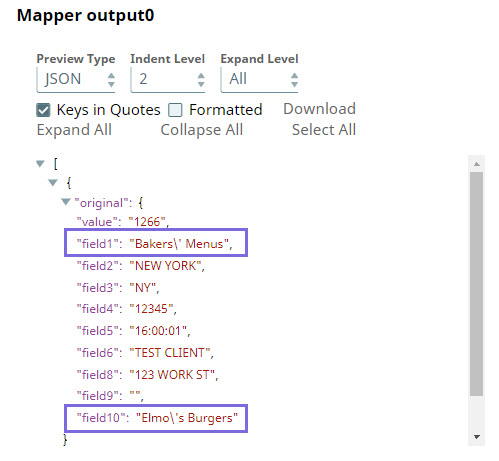

You can do this using the Expression field in the Mapper Snap. Depending on the escape character that the endpoint accepts, select one of the following expressions:

| If the escape character is | Use expression |
|---|---|
| Single quote (') | JSON: `$original.mapValues((value,key)=> value.toString().replaceAll("'","''"))` or `$original.mapValues((value,key)=> value.toString().replaceAll("'","\''"))`; CSV: `$[' Business-Name'].replace ("'","''")` |
| Ampersand (&) | JSON: `$original.mapValues((value,key)=> value.toString().replaceAll("'","\&'"))` or `$original.mapValues((value,key)=> value.toString().replaceAll("'","&'"))`; CSV: `$[' Business-Name'].replace ("'","&'")` |
| Backslash (\) | JSON: `$original.mapValues((value,key)=> value.toString().replaceAll("'","\\'"))`; CSV: `$[' Business-Name'].replace ("'","\\'")` |

In this way, you can customize the data to be passed on to downstream Snaps using the Expression field in the Mapper Snap.
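The JSON expressions in the table apply the same replacement to every value of $original. The TypeScript sketch below mimics that under stated assumptions: the record and its values are hypothetical, String() stands in for toString(), and a global regular expression stands in for replaceAll() so the sketch also runs on older JavaScript targets.

```typescript
// Hypothetical stand-in for $original from the JSON Generator Snap.
const original: Record<string, unknown> = { field1: "O'Brien", field2: 42, field10: "Rock 'n' Roll" };

// Mirrors $original.mapValues((value, key) => value.toString().replaceAll("'", replacement)).
function escapeQuotes(obj: Record<string, unknown>, replacement: string): Record<string, string> {
  const result: Record<string, string> = {};
  for (const key of Object.keys(obj)) {
    // The g flag replaces every quote, not just the first one.
    // Avoid "$" in replacement strings: replace() treats it specially.
    result[key] = String(obj[key]).replace(/'/g, replacement);
  }
  return result;
}

const doubled = escapeQuotes(original, "''");      // Rock 'n' Roll -> Rock ''n'' Roll
const backslashed = escapeQuotes(original, "\\'"); // O'Brien -> O\'Brien
```

One difference worth checking: in JavaScript, replace() called with a string pattern, as in the CSV expressions in the table, replaces only the first occurrence. If the expression language follows the same rule, a value containing several quotes would need replaceAll() instead.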

Refer to the Community discussion for more information.

See it in Action

The SnapLogic Data Mapper


SnapLogic Best Practices: Data Transformations and Mappings
