
...

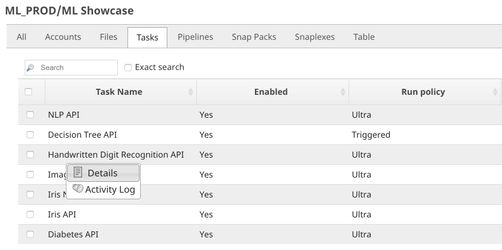

The live demo is available at our Machine Learning Showcase.

Objectives

- Model Building: Use the Remote Python Script Snap from the ML Core Snap Pack to deploy a Python script that trains a convolutional neural network (CNN) model on the MNIST dataset.

- Model Testing: Test the model with a sample.

- Model Hosting: Use the Remote Python Script Snap from the ML Core Snap Pack to deploy a Python script that hosts the model, and schedule an Ultra Task to provide an API.

- API Testing: Use the REST Post Snap to send a sample request to the Ultra Task to make sure the API works as expected.

We will build four pipelines (Model Building, Model Testing, Model Hosting, and API Testing) and an Ultra Task to accomplish the above objectives. Each of these pipelines is described in the Pipelines section below.

Pipelines

Model Building

The Remote Python Script Snap downloads the MNIST dataset (we use the Keras library to get the dataset), trains a CNN model, and evaluates the model. We then format the model with the JSON Formatter Snap and save it on the SnapLogic File System (SLFS) using the File Writer Snap.

...

We use SLTool.ensure to automatically install the required libraries. The SLTool class contains useful methods such as ensure, execute, encode, and decode. In this case, we need keras and tensorflow. The tensorflow 1.5.0 build does not include CPU-specific optimizations, so it is recommended for older CPUs.

```python
from snaplogic.tool import SLTool as slt

# Ensure libraries.
slt.ensure("keras", "2.2.4")
slt.ensure("tensorflow", "1.5.0")
```


In snaplogic_init, we create a new session. In snaplogic_final, we download the dataset and build the CNN model. The dataset can be obtained directly from Keras. The raw data has shape (N, 28, 28); we need to reshape it to (N, 28, 28, X) in order to use the Conv2D layer. Since the images in this dataset contain one color channel (grayscale), X is 1. We scale the color intensity to the range [0, 1] and apply one-hot encoding to the targets (y_train, y_test).
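The preprocessing steps described above can be sketched with NumPy alone. This is a minimal illustration, not the script used in the pipeline: the random array stands in for the MNIST images that Keras would provide, and the helper names `preprocess_images` and `one_hot` are hypothetical.

```python
import numpy as np

NUM_CLASSES = 10  # digits 0-9


def preprocess_images(x):
    """Reshape (N, 28, 28) images to (N, 28, 28, 1) and scale them to [0, 1]."""
    x = x.reshape(x.shape[0], 28, 28, 1).astype("float32")
    return x / 255.0


def one_hot(y, num_classes=NUM_CLASSES):
    """Turn integer labels of shape (N,) into one-hot rows of shape (N, num_classes)."""
    encoded = np.zeros((y.shape[0], num_classes), dtype="float32")
    encoded[np.arange(y.shape[0]), y] = 1.0
    return encoded


# Stand-in data with the same shape and value range as raw MNIST images.
rng = np.random.RandomState(0)
x_raw = rng.randint(0, 256, size=(5, 28, 28)).astype("uint8")
y_raw = np.array([3, 0, 9, 1, 7])

x_train = preprocess_images(x_raw)
y_train = one_hot(y_raw)
```

The trailing dimension of size 1 is the single grayscale channel that the Conv2D layer expects.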

...

The prediction of the Remote Python Script Snap is shown below. The digit has been correctly identified.

Python Script

The input of the Remote Python Script Snap can be either the neural network model or a sample. If it is the model, we use base64 to decode it and then load it. If the incoming document is a sample, we add it to the queue. Once the model is loaded, we apply it to the samples in the queue and output the predictions. To preserve the lineage property in an Ultra Task, SLTool.get_drop_doc() is returned for the document describing the model.

```python
# Imports
import base64
import os
import uuid
import numpy
import tensorflow
import keras
# SLTool is imported under the alias SL, which the rest of this script uses.
from snaplogic.tool import SLTool as SL

# Global Variables
model = None
request_queue = []

# This function will be executed once before consuming the data.
def snaplogic_init():
    return None

# This function will be executed on each document from the upstream snap.
def snaplogic_process(row):
    global model
    global request_queue
    # Create a list of outputs to be sent to the downstream snap.
    output_list = []
    is_model = False
    # Load model.
    if "model" in row:
        is_model = True
        model = {}
        # Write the decoded model to a temporary file so Keras can load it.
        model_tmp_path = SL.get_random_path()
        model_bin = base64.b64decode(row["model"])
        with open(model_tmp_path, "wb") as model_file:
            model_file.write(model_bin)
        # Keep a dedicated graph and session for the loaded model.
        model["graph"] = tensorflow.Graph()
        with model["graph"].as_default():
            model["session"] = tensorflow.Session()
            with model["session"].as_default():
                model["model"] = keras.models.load_model(model_tmp_path)
                model["model"]._make_predict_function()
        os.remove(model_tmp_path)
    # Add new request to the queue.
    else:
        request_queue.append(row)
    # If the model is ready, process requests in the queue.
    if model is not None:
        while len(request_queue) > 0:
            try:
                request = request_queue.pop(0)
                feature = numpy.array([request["img"]])
                with model["graph"].as_default():
                    with model["session"].as_default():
                        pred_list = model["model"].predict(feature)
                pred = pred_list[0].argmax()
                conf_level = pred_list[0][pred]
                output_list.append({"pred": pred, "conf": conf_level})
            except Exception:
                output_list.append({"pred": "The request is not valid."})
    if is_model:
        # Do not output a model document to preserve lineage property.
        output_list.append(SL.get_drop_doc())
    return output_list

# This function will be executed after consuming all documents from the upstream snap.
def snaplogic_final():
    return None
```
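The script above expects the model document to carry the serialized model as base64 text in its `model` field. The round trip from a binary model file to a JSON document and back can be sketched as follows. This is an illustration under assumptions: the placeholder bytes stand in for a saved Keras model, and the helper names `encode_model_file` and `decode_model_document` are hypothetical.

```python
import base64
import json
import os
import tempfile


def encode_model_file(path):
    """Read a binary model file and wrap it in a JSON-serializable document."""
    with open(path, "rb") as model_file:
        return {"model": base64.b64encode(model_file.read()).decode("ascii")}


def decode_model_document(doc, path):
    """Write the base64 payload of a model document back to a binary file."""
    with open(path, "wb") as model_file:
        model_file.write(base64.b64decode(doc["model"]))


# Placeholder bytes stand in for a saved Keras model file.
fake_model_bytes = b"\x89HDF\r\n\x1a\n" + bytes(range(256))

tmp_dir = tempfile.mkdtemp()
src_path = os.path.join(tmp_dir, "model_in.h5")
dst_path = os.path.join(tmp_dir, "model_out.h5")
with open(src_path, "wb") as f:
    f.write(fake_model_bytes)

# Encode, pass through JSON text (as a JSON formatter and parser would), then decode.
doc = json.loads(json.dumps(encode_model_file(src_path)))
decode_model_document(doc, dst_path)

with open(dst_path, "rb") as f:
    restored_bytes = f.read()
```

Base64 keeps the binary model safe inside a JSON string, at the cost of roughly a one-third increase in size.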

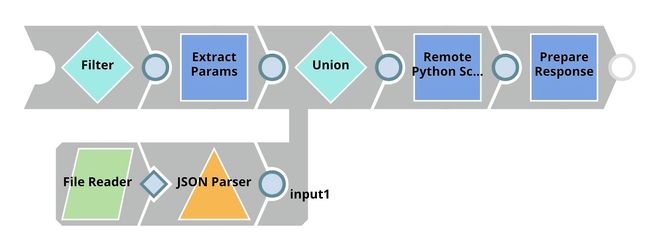

Model Hosting

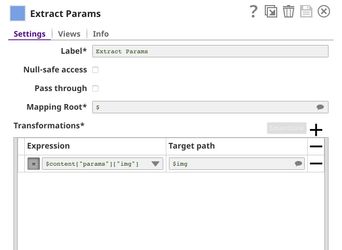

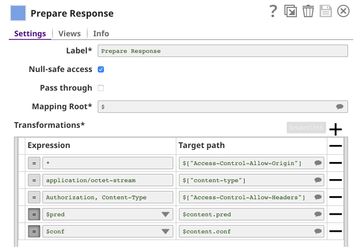

This pipeline is scheduled as an Ultra Task to provide a REST API that is accessible by external applications. The core components of this pipeline are the File Reader, JSON Parser, Union, and Remote Python Script Snaps, which are the same as in the Model Testing pipeline. Instead of taking the data from the JSON Generator, the Remote Python Script Snap takes the data from the API request. The Filter Snap authenticates the request by checking the token, which can be changed in the pipeline parameters. The Extract Params Snap (Mapper) extracts the required fields from the request. The Prepare Response Snap (Mapper) maps the prediction to $content.pred and the confidence level to $content.conf, which together form the response body. This Snap also adds headers to allow Cross-Origin Resource Sharing (CORS).
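The pipeline implements the token check and the response mapping with Snaps, not with Python. Purely as an illustration of the logic, the two steps could look like the sketch below. The function names, the `headers` field, and the specific CORS header value are assumptions; only `token`, `content.pred`, and `content.conf` come from the description above.

```python
def is_authorized(request, expected_token):
    """Filter step: accept the request only if its token matches."""
    return request.get("token") == expected_token


def prepare_response(prediction):
    """Prepare Response step: build the body and add a CORS header."""
    return {
        "content": {
            "pred": prediction["pred"],
            "conf": prediction["conf"],
        },
        # Assumed header; a real deployment may restrict the allowed origin.
        "headers": {"Access-Control-Allow-Origin": "*"},
    }


# Hypothetical token; in the pipeline it comes from a pipeline parameter.
EXPECTED_TOKEN = "my-secret-token"

request = {"token": "my-secret-token", "params": {"img": []}}
if is_authorized(request, EXPECTED_TOKEN):
    response = prepare_response({"pred": 1, "conf": 0.9987})
```

Checking the token inside the pipeline keeps authentication under the pipeline's control, so the token can be rotated by changing a pipeline parameter.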

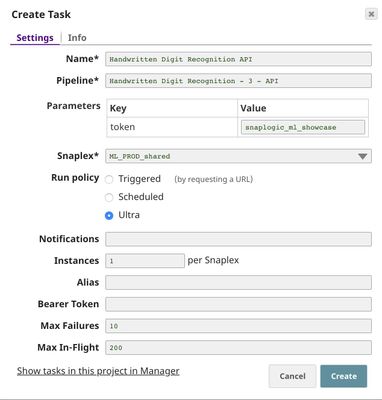

Building API

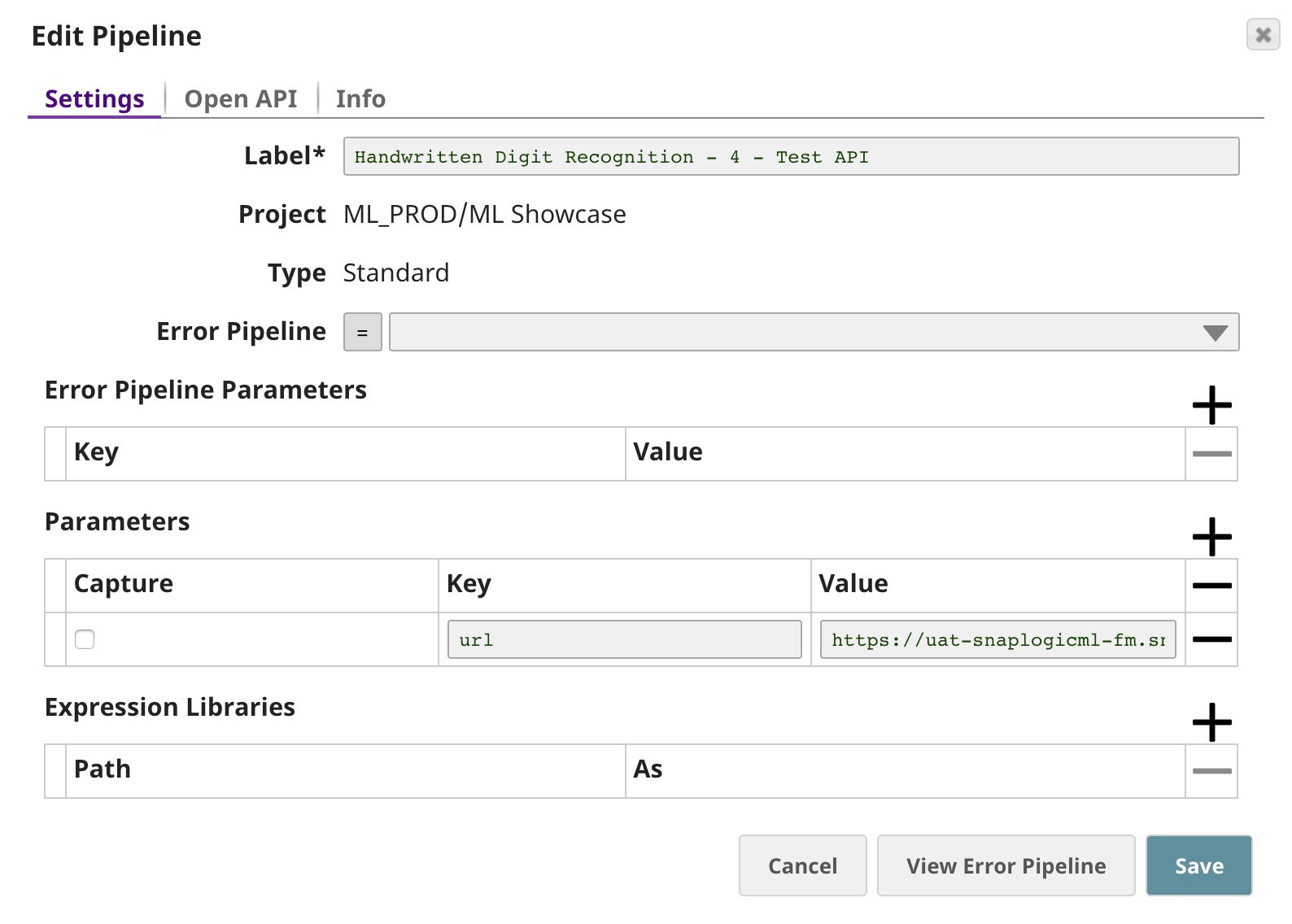

To deploy this pipeline as a REST API, click the calendar icon in the toolbar. Either a Triggered Task or an Ultra Task can be used.

A Triggered Task is good for batch processing since it starts a new pipeline instance for each request. An Ultra Task is good for providing a REST API to external applications that require low latency. In this case, the Ultra Task is preferable. A bearer token is not needed here since the Filter Snap performs authentication inside the pipeline.

To get the URL, click Show tasks in this project in Manager in the Create Task window. Click the small triangle next to the task, then click Details. The task details are displayed along with the URL.

API Testing

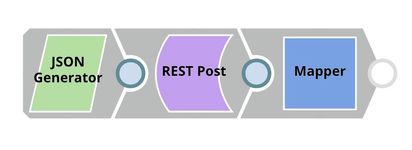

In this pipeline, a sample request is generated by the JSON Generator Snap. The request is sent to the Ultra Task by the REST Post Snap. The Mapper Snap extracts the response, which is in $response.entity.

Below is the content of the JSON Generator Snap. It contains $token and $params, which are included in the request body sent by the REST Post Snap.

The REST Post Snap gets the URL from the pipeline parameters. Your URL can be found on the Manager page. In some cases, you must select Trust all certificates in the REST Post Snap.

The output of the REST Post Snap is shown below. The last Mapper Snap extracts $response.entity from the response. In this case, the prediction is 1 with a confidence level of 0.9987.
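An external application can send the same kind of request without SnapLogic. The sketch below builds the POST request using only the Python standard library. The URL and token are placeholders, and the layout of `params` (an `img` field holding a 28 x 28 x 1 nested list) is an assumption based on the hosting script, not a documented contract.

```python
import json
import urllib.request


def build_request(url, token, img):
    """Build a JSON POST request carrying the token and the image sample."""
    body = json.dumps({"token": token, "params": {"img": img}}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder values; replace them with your Ultra Task URL and token.
TASK_URL = "https://example.com/ultra-task"
TOKEN = "my-secret-token"

# A blank 28 x 28 image with one channel, with intensities scaled to [0, 1].
img = [[[0.0] for _ in range(28)] for _ in range(28)]

req = build_request(TASK_URL, TOKEN, img)

# To send the request, uncomment the lines below.
# with urllib.request.urlopen(req) as resp:
#     print(json.loads(resp.read().decode("utf-8")))
```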
