On this Page

| Table of Contents | ||||||

|---|---|---|---|---|---|---|

|

Overview

This Snap provides the functionality of SCD (Slowly Changing Dimension) Type 2 on a target Snowflake table. The Snap executes one SQL lookup request per set of input documents to avoid making a request for every input record. Its output is typically a stream of documents for the Snowflake - Bulk Upsert Snap, which updates or inserts rows into the target table. Therefore, this Snap must be connected to the Snowflake - Bulk Upsert Snap to accomplish the complete SCD2 functionality.

Input and Output

Expected input: Each document in the input view should contain a data map of key-value entries. The input data must contain data in the Natural Key (primary key) and Cause-historization fields.

Expected output: Each document in the output view contains a data map of key-value entries for all fields of a row in the target Snowflake table.

Expected upstream Snaps: Any Snap, such as a Mapper or JSON Parser Snap, whose output contains a map of key-value entries.

Expected downstream Snaps: Snowflake Bulk Upsert snap must be used as downstream snap since the Snowflake SCD2 snap only generates set of rows to be inserted or updated and it doesn't do any write operation on the table.

Prerequisites

- Read and write access to the Snowflake instance.

- The target table should have the following three columns for field historization to work:

- Column to demarcate whether a row is a current row or not. For example, "CURRENT_ROW". For the current row, the value would be true or 1. For the historical row, the value would be false or 0.

- Column to denote the starting date of the current row. For example, "START_DATE".

- Column to denote when the row was historized. For example, "END_DATE". For the active row, it is null. For a historical row, it has the value that indicates it was effective till that date.

Insert excerpt Snowflake - Bulk Load Snowflake - Bulk Load nopanel true Security Prerequisites: You should have the following permissions in your Snowflake account to execute this Snap:

- Usage (DB and Schema): Privilege to use database, role and schema.

- Create table: Privilege to create a table on the database. role and schema.

For more information on Snowflake privileges, refer to Access Control Privileges.

Internal SQL Commands

This Snap uses the SELECT command internally. It enables querying the database to retrieve a set of rows.

Configuring Accounts

This Snap uses account references created on the Accounts page of SnapLogic Manager to handle access to this endpoint. See Snowflake Account for information on setting up this type of account.

Configuring Views

Input | This Snap has exactly one document input view. |

|---|---|

| Output | This Snap has exactly one document output view. |

| Error | This Snap has at most one document error view. |

Troubleshooting

None.

Limitations and Known Issues

None.

Modes

- Ultra Pipelines: Works in Ultra Pipelines.

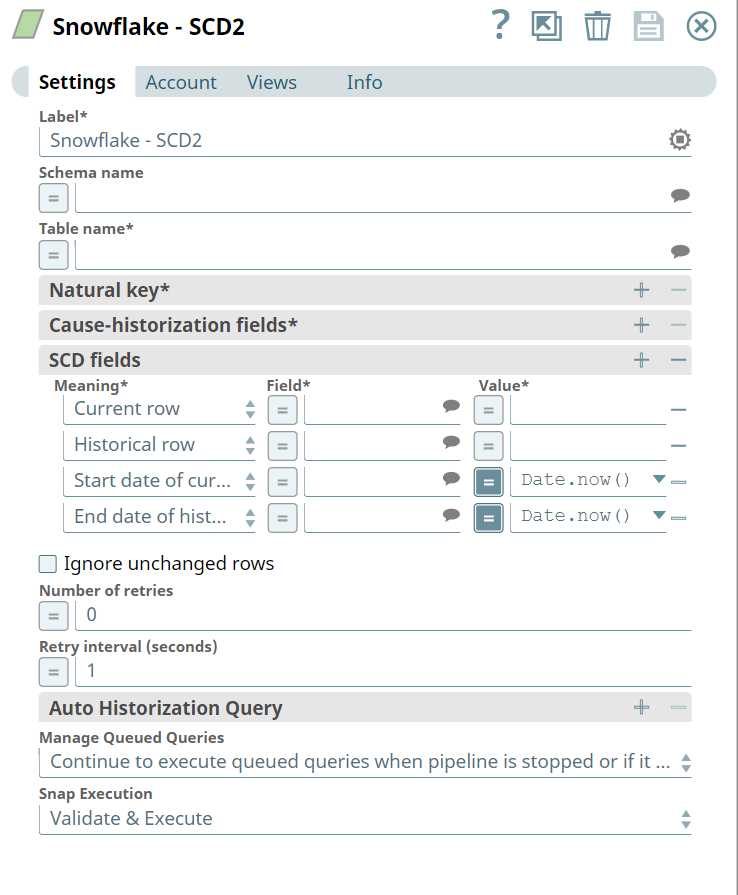

Snap Settings

| Label | Required. The name for the Snap. Modify this to be more specific, especially if there are more than one of the same Snap in the pipeline. | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Schema name | The name of the schema containing the target table. Providing the schema name along with the table name in the Table name field is sufficient. The suggestible field that lists all available schema in the configured account. Default value: None Example: "TestSchema" | ||||||||||||

| Table name | Required. The name of the target table. Syntax is "<schema_name>"."<table_name>". This is a suggestible field that lists all available tables if the schema is provided in the Schema name field. Alternatively, if the Schema name field is blank, it lists all tables within the account if the schema is not provided. Default value: None Example: "TestSchema"."TestTable"

| ||||||||||||

| Natural key | Names of fields that identify a unique row in the target table. The identity key cannot be used as the Natural key, since a current row and its historical rows cannot have the same natural key value Default value: None Example: id (Each record has to have a unique value) | ||||||||||||

| Cause-historization fields | Names of fields where any change in value causes the historization of an existing row and the insertion of a new current row. Default value: None Example: gold bullion rate | ||||||||||||

| SCD fields | Required. The historical and updated information for the Cause-historization field. Click + to add SCD fields. By default, there are four rows in this field-set:

| ||||||||||||

| Meaning | Specifies the table columns that are to be updated for implementing the SCD2 type transformation. Default value:

| ||||||||||||

| Field | The fields in the table will contain the historical information. Default value: None Below are the values that must be configured for each row:

| ||||||||||||

| Value | The value to be assigned to the current or historical row. For date-related rows, the default is Date.now(). Default value:

The Value field should be configured as follows:

| ||||||||||||

| Ignore unchanged rows | Specifies whether the Snap must ignore writing unchanged rows from the source table to the target table. If you enable this option, the Snap generates a corresponding document in the target only if the Cause-historization column in the source row is changed. Else, the Snap does not generate any corresponding document in the target. Default value: Not selected | ||||||||||||

| Number of retries | The number of times that the Snap must try to write the fields in case of an error during processing. An error is displayed if the maximum number of tries has been reached. Default value: 0 | ||||||||||||

| Retry interval (seconds) | The time interval, in seconds, between subsequent retry attempts. Default value: 1 | ||||||||||||

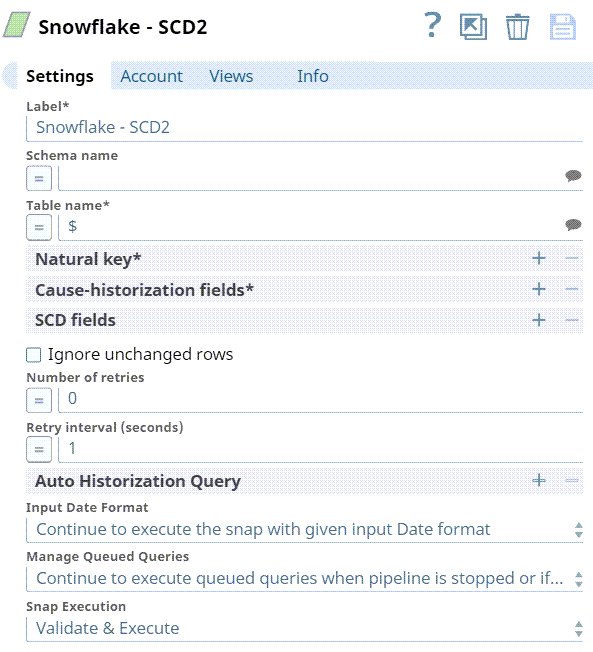

| Auto Historization Query | This field-set is used to specify the fields that are to be used to historize table data. Historization is in the sort order specified. Care must be taken that the field is sortable. You can also add multiple fields here; historizaton occurs when even of the fields is changed. | ||||||||||||

| Field | The name of the field. This is a suggestible field and suggests all the fields in the target table. Example: Invoice_Number Default value: N/A

| ||||||||||||

| Sort Order | The order in which the selected field is to be historized. Available options are:

Default value: Ascending Order | ||||||||||||

| Input Date Format | The property has the following two options:

| ||||||||||||

| Manage Queued Queries | Select this property to decide whether the Snap should continue or cancel the execution of the queued Snowflake Execute SQL queries when you stop the pipeline.

Default value: Continue to execute queued queries when pipeline is stopped or if it fails | ||||||||||||

|

|

Examples

Historizing Incoming Records

This example demonstrates how you can use the Snowflake SCD2 Snap to auto-historize records. In this example, since the existing record in the Snowflake table is the latest, the incoming records are historized.

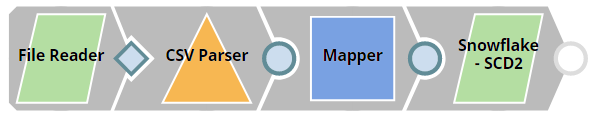

This Pipeline performs the following operations:

- Read, parse, and map the input data with the fields in the target table.

- Historize records based on the specified criteria.

- Upsert the latest data into the target table.

| Info | ||

|---|---|---|

| ||

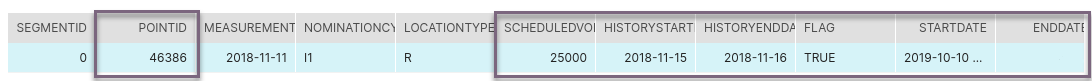

Before we start, let us look at the target table and understand some of its columns that are necessary for the Pipeline: We focus on the highlighted columns above to demonstrate auto-historization and describe their function in the table.

|

Input Data: Reading, Parsing and Mapping

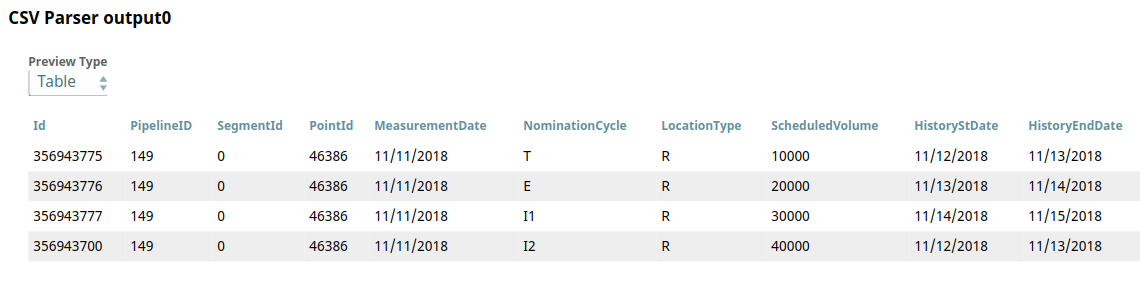

This Pipeline is configured to send records into the target table. The File Reader Snap is configured to read a CSV file that contains the records. The downstream CSV Parser Snap parses the CSV file read by the File Reader Snap. Below is a preview of this file:

Based on the values of HistoryStDate and HistoryEndDate, it is clear that the existing record in the target table is the latest (or current) record.

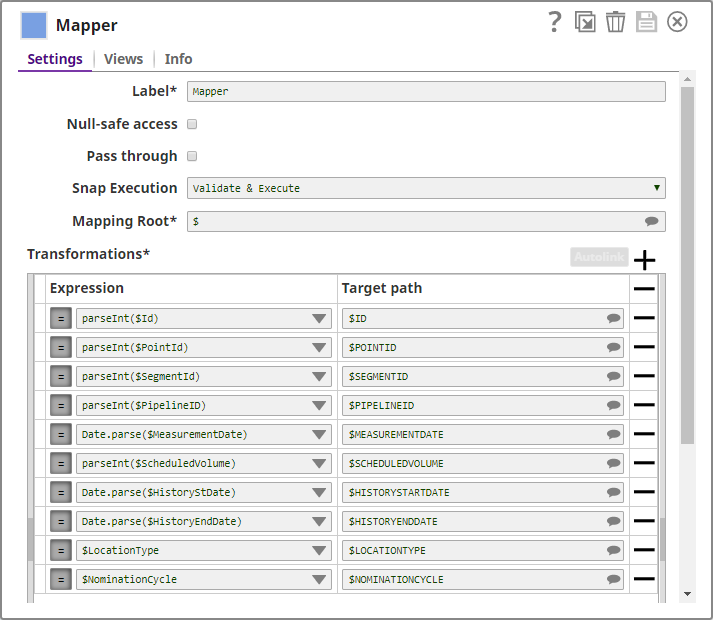

Since the output from the CSV Parser Snap is a string, it has to be parsed into the appropriate data type. Parsing and data mapping is done using the Mapper Snap, as shown below:

This mapped data is then sent to the Snowflake SCD2 Snap.

Data Processing

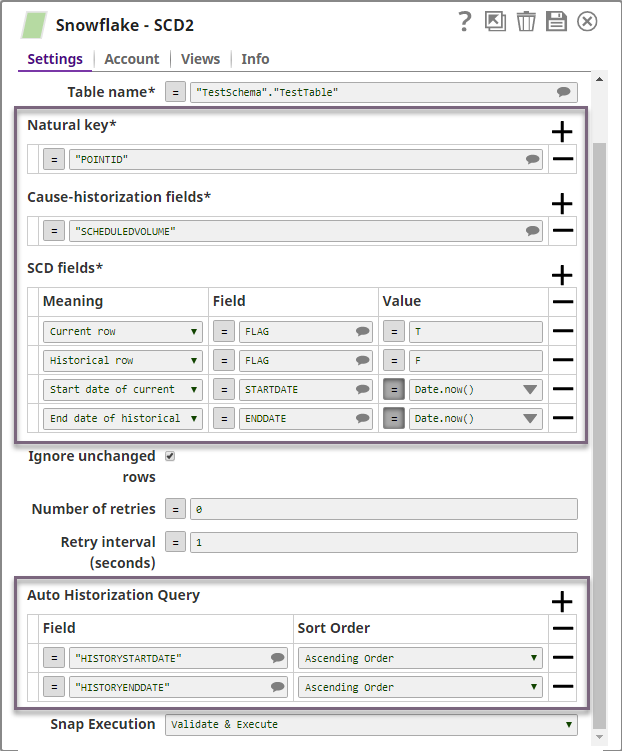

The Snowflake SCD2 Snap performs SCD2 operations on the target table. We configure it as shown below:

Let us take a look at the highlighted Snap fields and how they affect the Snap functionality in this example:

- Natural key: The Snap looks for records with matching POINT ID values in the incoming documents to group the records.

- Cause-historization fields: For each unique POINT ID, changes in SCHEDULEDVOLUME initiate historization. If a change has not occurred, the incoming records are historized..

- SCD fields:

- The state of the current or historical record is marked in the FLAG field, T for current record and F for the historical record.

- The columns STARTDATE and ENDDATE in the target table are maintained to denote the start and end dates of the current state of the table's data. The ENDDATE is always blank for a current record.

- Auto Historization Query: The Snap sorts the values in the HISTORYSTARTDATE and HISTORYENDDATE columns for the same POINT ID in the Snowflake table and the incoming documents in ascending order. The record with the highest value in those fields is considered the current record.

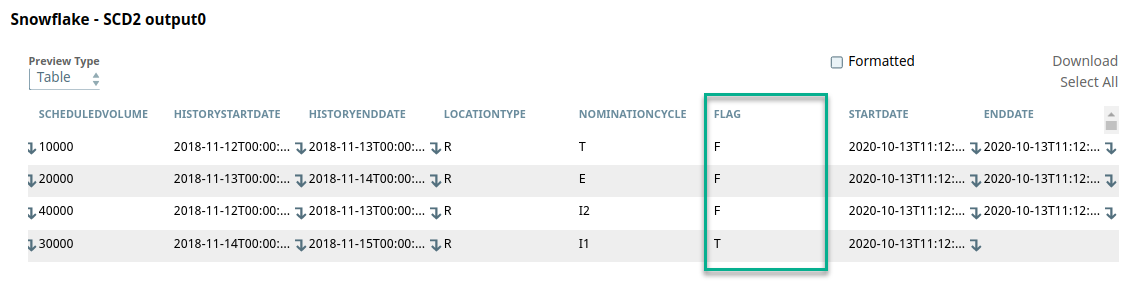

All incoming records pertaining to a POINT ID are historized. The value F is assigned under the FLAG column to these fields and the corresponding STARTDATE and ENDDATE are evaluated by the expression Date.now().

This can be seen in the SCD2 Snap's output preview:

The Snap identifies the current and historical records and this data is now ready to be updated and inserted into the target table.

Upsert Data into the Target Table

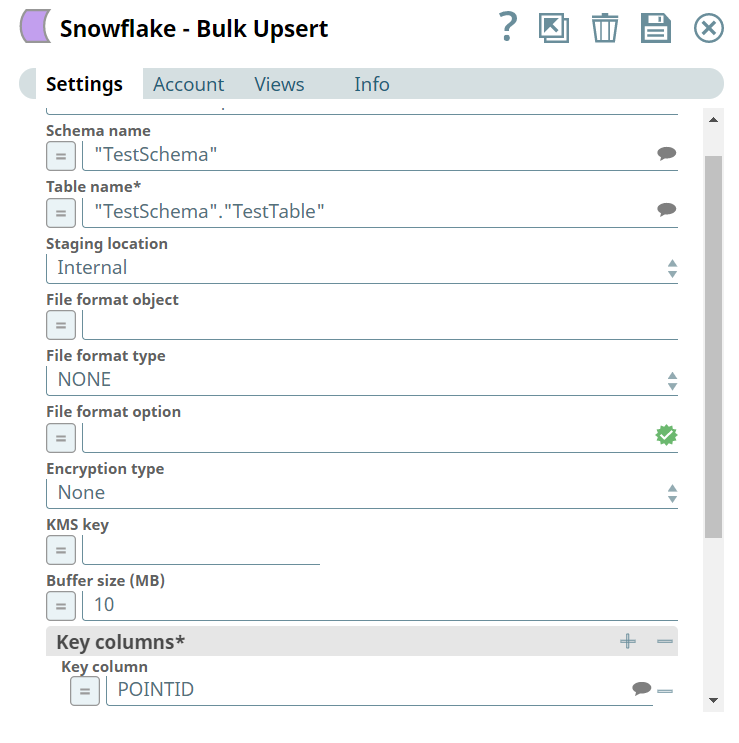

We use the Snowflake Bulk Upsert Snap to update the target table with this historized data. We configure the Snowflake Bulk Upsert Snap as shown below:

Download this Pipeline and sample data. This is a compressed file, unzip it to extract its contents before importing them in SnapLogic.

Downloads

| Attachments | ||

|---|---|---|

|

| View file | ||||

|---|---|---|---|---|

|

| Insert excerpt | ||||||

|---|---|---|---|---|---|---|

|