
Snap type: Write

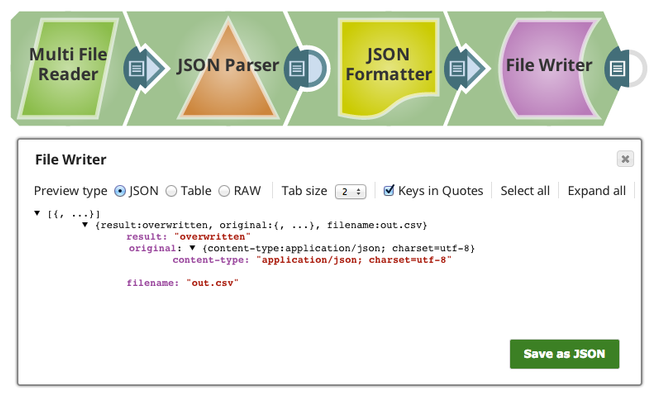

Description: This Snap reads a binary data stream from the input view and writes it to a specified file destination. Possible file destinations include SLDB, HTTP, S3, FTP, SFTP, FTPS, and HDFS. If file permissions are provided for the file, the Snap sets those permissions on the file.

The "result" field in the output document can be "overwritten", "created", "ignored", or "appended". The value "ignored" indicates that the Snap did not overwrite the existing file because the File action property is set to "IGNORE".

Prerequisites:

Support and limitations:

Known Issues: This Snap does not create an output file when its input comes from a SAS Generator Snap configured with only the DELETE SAS permission. This issue does not occur when the target file already exists.

Account:

Label*

Specify a name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your Pipeline.

File name*

Specify the URI of the destination file to which the data (binary input from the upstream input view) is written. The URI can start with one of the following protocols:

- http:

- https:

- s3:

- sftp:

- ftp:

- ftps:

- hdfs:

- sldb:

- smb:

- file: (only for use with a Groundplex)

- wasb:

- wasbs:

- gs:

- adl:

For SLDB files, if you enter:

- just a file name, such as file1.csv, the Snap writes the file to /<org>/projects/<pipeline project>/file1.csv, where <org> is your organization name and <pipeline project> is the project in which the pipeline is stored (applies when the pipeline is in a project other than the shared project).

- shared/file1.csv, the Snap writes the file to /<org>/shared/file1.csv.

The Snap can write a file to its own project directory or to the shared project, but not to any other project directory.
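The two SLDB rules above can be expressed as a small path-resolution function. This is a minimal Python sketch for illustration only: `resolve_sldb_path` and its `org` and `project` parameters are hypothetical names, not part of SnapLogic, and the sketch handles only a bare file name or a `shared/` prefix.

```python
def resolve_sldb_path(name: str, org: str, project: str) -> str:
    """Hypothetical helper: map a relative SLDB file name to its full path.

    Mirrors the two documented rules; it is not SnapLogic's implementation.
    """
    if name.startswith("shared/"):
        # shared/file1.csv -> /<org>/shared/file1.csv
        return f"/{org}/{name}"
    if "/" not in name:
        # file1.csv -> /<org>/projects/<pipeline project>/file1.csv
        return f"/{org}/projects/{project}/{name}"
    # Other forms (including paths into other projects) are outside this sketch
    raise ValueError(f"unsupported SLDB file name in this sketch: {name!r}")


print(resolve_sldb_path("file1.csv", org="acme", project="sales"))         # /acme/projects/sales/file1.csv
print(resolve_sldb_path("shared/file1.csv", org="acme", project="sales"))  # /acme/shared/file1.csv
```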

For S3, your account must have full access.

The File name property can also be a JavaScript expression when the expression toggle (=) is enabled.

For example, if the File name property is:

"sldb:///out_" + Date.now() + ".csv"

then the evaluated filename can be:

sldb:///out_2013-11-13T00:22:31.880Z.csv
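To predict the evaluated name outside SnapLogic (for example, when a downstream test looks for the file), the same naming scheme can be reproduced in Python. This sketch assumes, based on the sample output above, that the timestamp is rendered as ISO 8601 in UTC with millisecond precision and a trailing `Z`; `timestamped_uri` is a hypothetical helper.

```python
from datetime import datetime, timezone
from typing import Optional


def timestamped_uri(prefix: str = "sldb:///out_", ext: str = ".csv",
                    now: Optional[datetime] = None) -> str:
    """Build a name shaped like sldb:///out_2013-11-13T00:22:31.880Z.csv."""
    now = now or datetime.now(timezone.utc)
    # ISO 8601 in UTC, truncated to milliseconds, with "Z" instead of "+00:00"
    stamp = now.astimezone(timezone.utc).isoformat(timespec="milliseconds")
    return prefix + stamp.replace("+00:00", "Z") + ext


fixed = datetime(2013, 11, 13, 0, 22, 31, 880000, tzinfo=timezone.utc)
print(timestamped_uri(now=fixed))  # sldb:///out_2013-11-13T00:22:31.880Z.csv
```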

Fields in the binary header can also be accessed when computing a file name. For example, if a File Reader Snap is directly connected to a File Writer Snap, you can access the "content-location" header field to get the original path of the file.

You could then compute a new file name based on the old one, for instance, to make a backup file:

$['content-location'].match('/([^/]+)$')[1] + '.backup'
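The expression above keeps only the last path segment (the text after the final `/`) and appends `.backup`. The following Python sketch applies the same regular expression so you can check the result for a given `content-location` value; `backup_name` is a hypothetical helper, and the sample paths are made up.

```python
import re


def backup_name(content_location: str) -> str:
    """Return the last path segment of content_location with ".backup" appended."""
    # Same pattern as the expression: one or more non-"/" characters at the end
    match = re.search(r"/([^/]+)$", content_location)
    if match is None:
        raise ValueError(f"no file name found in {content_location!r}")
    return match.group(1) + ".backup"


print(backup_name("sldb:///snaplogic/projects/demo/input.csv"))  # input.csv.backup
```

Because the result is a bare file name, for sldb it is resolved relative to the pipeline's project, following the SLDB rules described earlier in this section.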

For the http: and https: protocols, the Snap uses only the HTTP PUT method. This property should have the following syntax:

[protocol]://[host][:port]/[path]

Note that "://" separates the file protocol from the rest of the URL, and that the host name and port number go between "://" and the next "/". If the port number is omitted, the default port for the protocol is used. The host name and port number are omitted for the sldb and s3 protocols.
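The URI anatomy above maps directly onto Python's standard `urllib.parse.urlsplit`. The sketch below splits a File name value into protocol, host, port, and path. The `DEFAULT_PORTS` table lists common well-known ports and is an assumption for illustration; the Snap's own defaults are not listed in this document, and `example.com` is a placeholder host.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit

# Assumed well-known default ports; not taken from the Snap itself
DEFAULT_PORTS = {"http": 80, "https": 443, "ftp": 21, "ftps": 21, "sftp": 22, "smb": 445}


def split_target(uri: str) -> Tuple[str, str, Optional[int], str]:
    """Split [protocol]://[host][:port]/[path] into its four parts."""
    parts = urlsplit(uri)
    # Fall back to the protocol's default port when none is given
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(parts.scheme)
    return parts.scheme, parts.hostname or "", port, parts.path


print(split_target("sftp://ftp.snaplogic.com:22/dir/filename"))  # ('sftp', 'ftp.snaplogic.com', 22, '/dir/filename')
print(split_target("https://example.com/upload/data.bin"))       # ('https', 'example.com', 443, '/upload/data.bin')
print(split_target("sldb:///out.csv"))                           # ('sldb', '', None, '/out.csv')
```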

This property should be an absolute path for all protocols except sldb. The file:/// protocol is supported only on a Groundplex; in Cloudplex configurations, use sldb or another file protocol. When using the file:/// protocol, files are accessed with the permissions of the user under which the Snaplex is running (by default, Snapuser). Use file system access with caution: it is the customer's responsibility to ensure that the file system is cleaned up after use.

For HDFS, if you want the Snap to suggest values for this property, use the HDFS Writer Snap instead.

This Snap uses account references created on the Accounts page of SnapLogic Manager to handle access to this endpoint. This Snap supports several account types, as listed in the table below, or no account. See Configuring Binary Accounts for information on setting up accounts that work with this Snap.

Account types supported by each protocol are as follows:

| Protocol | Account types |
|---|---|
| sldb | no account |
| s3 | AWS S3, S3 Dynamic |
| ftp | Basic Auth |
| sftp | Basic Auth, SSH Auth |
| ftps | Basic Auth |
| hdfs | no account |
| http | no account |
| https | optional account |
| smb | SMB |
| file | no account |
| wasb | Azure Storage |
| wasbs | Azure Storage |
| gs | Google Storage |
| adl | Azure Data Lake |

Note: The FTPS file protocol works only in explicit mode. Implicit mode is not supported.

Required settings for account types are as follows:

| Account type | Settings |
|---|---|
| Basic Auth | Username, Password |
| AWS S3 | Access-key ID, Secret key, Server-side encryption |
| S3 Dynamic | Access-key ID, Secret key, Security token, Server-side encryption |
| SSH Auth | Username, Private key, Key Passphrase |
| SMB | Domain, Username, Password |
| Azure Storage | Account name, Primary access key |
| Google Storage | Approval prompt, Application scope, Auto-refresh token |
| Azure Data Lake | Tenant ID, Access ID, Secret Key |

Note: When you use Azure Data Lake, SnapLogic automatically appends "azuredatalakestore.net" to the store name you specify; therefore, you do not need to add "azuredatalakestore.net" to the URI when specifying the directory.

| View | Description |
|---|---|
| Input | This Snap has exactly one binary input view, where it gets the binary data to be written to the file specified in the File name property. |
| Output | This Snap has at most one document output view. |
| Error | This Snap has at most one document error view and produces zero or more documents in the view. |

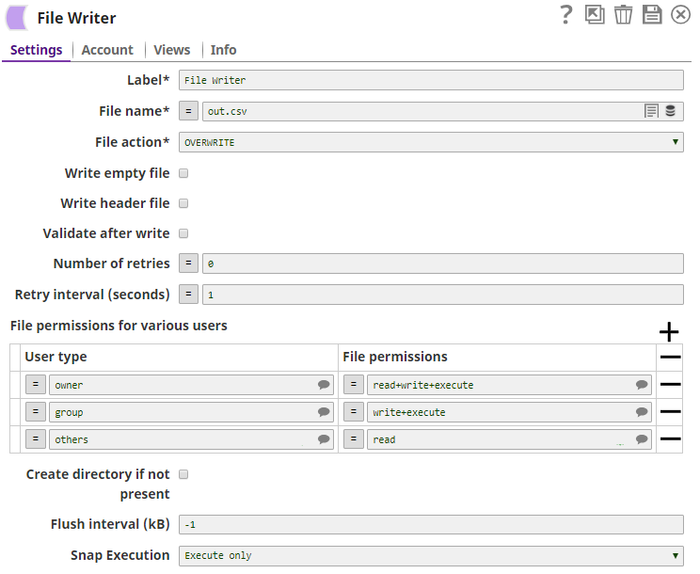

Settings


File name*


Note: For more information about referencing SMB file names, see Microsoft's documentation.

Default value: [None]

Examples:

- s3:///<S3_bucket_name>@s3.<region_name>.amazonaws.com/<path> (For region names and their details, see AWS Regions and Endpoints.)

- s3:///mybucket@s3.eu-west-1.amazonaws.com/test.json

- sftp://ftp.snaplogic.com:22/dir/filename

- smb://smb.Snaplogic.com:445/test_files/csv/input.csv

- _filename (A key/value pair with the "filename" key should be defined as a pipeline parameter.)

- file:///D:/testFolder/ (if the Snap is executed in a Windows Groundplex and needs to access the D: drive)

- wasb:///snaplogic/testDir/sample.csv (to write the 'sample.csv' file into the 'testDir' folder in the 'snaplogic' container)

- gs:///mybucket/csv/test.csv (to write the 'test.csv' file into the 'csv/' folder of the 'mybucket' bucket)

- adl://storename/folder/filename (to write the file to a location in the storage)
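The S3 form packs the bucket and, optionally, the region endpoint into the path after `s3:///`. As a rough illustration, the following Python sketch pulls those pieces apart with a regular expression. The pattern is an assumption inferred from the S3 examples (with the `@s3.<region_name>.amazonaws.com` part treated as optional), not a specification of what the Snap accepts; `parse_s3_uri` is a hypothetical helper.

```python
import re
from typing import Optional, Tuple

# Inferred from the examples: s3:///<bucket>[@s3.<region>.amazonaws.com]/<path>
S3_URI = re.compile(
    r"^s3:///(?P<bucket>[^@/]+)"
    r"(?:@s3\.(?P<region>[a-z0-9-]+)\.amazonaws\.com)?"
    r"/(?P<key>.+)$"
)


def parse_s3_uri(uri: str) -> Tuple[str, Optional[str], str]:
    """Return (bucket, region or None, object path) for an s3:/// File name."""
    match = S3_URI.match(uri)
    if match is None:
        raise ValueError(f"not an s3:/// URI in the expected form: {uri!r}")
    return match.group("bucket"), match.group("region"), match.group("key")


print(parse_s3_uri("s3:///mybucket@s3.eu-west-1.amazonaws.com/test.json"))
# ('mybucket', 'eu-west-1', 'test.json')
```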

For SLDB files, the path should use the following syntax: /<org>/projects/<project_space>/<filename>

For example:

/snaplogic/projects/Automation_Project/EmployeeList.csv

Adding a new folder in the File name field creates that folder and writes the file within it. However, because SnapLogic does not display these folders in the SnapLogic Assets in SnapLogic Manager, the following format should not be used and is not supported:

/<org>/projects/<project_space>/<folder_name>/<filename>

File action*

Specify the action to perform if the file already exists. The available options are:

- Overwrite: The Snap writes the file without first checking whether it already exists, for better performance, and the "fileAction" field in the output view data is "overwritten".

- Append: The Snap appends the data to the existing file. Append is supported for the file, FTP, FTPS, and SFTP protocols only.

- Ignore: The Snap does not overwrite the existing file; it only writes the status and file name to its output view.

- Error: The Snap reports an error in the Pipeline Run Log. If an error view is defined, the error is also written there.


Default value: Overwrite
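The four options can be summarized as a decision on an existing target. The Python sketch below applies them to a local file and returns the status value that the Snap reports (for example, "overwritten"). It is an illustration under assumptions, not the Snap's code: in particular, it assumes that Append, Ignore, and Error simply create the file and report "created" when the target does not exist yet. `apply_file_action` is a hypothetical helper.

```python
import tempfile
from pathlib import Path


def apply_file_action(path: Path, data: bytes, action: str) -> str:
    """Write data to path according to a File action; return the status value."""
    action = action.lower()
    if action == "overwrite":
        # No existence check, so the status is always "overwritten"
        path.write_bytes(data)
        return "overwritten"
    exists = path.exists()
    if action == "append":
        with path.open("ab") as f:  # "ab" creates the file if it is missing
            f.write(data)
        return "appended" if exists else "created"
    if action == "ignore":
        if exists:
            return "ignored"  # leave the existing file untouched
    elif action == "error":
        if exists:
            raise FileExistsError(f"{path} already exists")
    else:
        raise ValueError(f"unknown file action: {action}")
    # Assumption: a missing target is simply created
    path.write_bytes(data)
    return "created"


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "out.csv"
    print(apply_file_action(target, b"a,b\n", "ignore"))     # created
    print(apply_file_action(target, b"c,d\n", "ignore"))     # ignored
    print(apply_file_action(target, b"c,d\n", "append"))     # appended
    print(apply_file_action(target, b"e,f\n", "overwrite"))  # overwritten
```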

Select this checkbox to write an empty file when the incoming binary document has empty data. If there is no incoming document at the input view of the Snap, no file is written regardless of the value of the property.

Default value: Not selected.

Write header file

The binary data stream in the input view may contain header information about the binary data, in the form of a document with key-value pair map data. If you select this checkbox, the Snap writes a header file whose name is generated by appending ".header" to the value of the File name property. The same header information is also included in the output view data, as shown in the "Expected output" section above, under the key "original". If the header has no keys other than Content-Type or Content-Encoding, the .header file is not written.

Default value: Not selected
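The naming and skip rules for the header file can be sketched as follows. This Python example is illustrative only: `write_header_file` is a hypothetical helper, the header is serialized as JSON purely for demonstration (this document does not specify the format of the Snap's .header file), and the key comparison is assumed to be case-insensitive.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict, Optional

# Keys that, on their own, do not trigger a .header file
IGNORED_KEYS = {"content-type", "content-encoding"}


def write_header_file(target: Path, header: Dict[str, str]) -> Optional[Path]:
    """Write <target>.header unless the header only has Content-Type/Content-Encoding."""
    if not {key.lower() for key in header} - IGNORED_KEYS:
        return None  # nothing beyond Content-Type/Content-Encoding: skip
    header_path = target.with_name(target.name + ".header")
    header_path.write_text(json.dumps(header, indent=2))  # JSON is an assumption
    return header_path


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "report.csv"
    print(write_header_file(target, {"Content-Type": "text/csv"}))  # None
    written = write_header_file(target, {"Content-Type": "text/csv", "content-location": "sldb:///in.csv"})
    print(written.name)  # report.csv.header
```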

Validate after write

Select this checkbox to enable the Snap to check whether the file exists after the file write completes. The validation may add a few seconds to the execution.

Default value: Not selected

Specify the maximum number of retry attempts when the Snap fails to write. If the value is larger than 0, the Snap first stores the input data in a temporary local file before writing to the target file.


Minimum value: 0

Default value: 0

Example: 3


Specify the minimum number of seconds for which the Snap must wait before attempting recovery from a network failure.

Minimum value: 1

Default value: 1

Example: 3
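The retry settings above (maximum retry attempts, local buffering when the value is larger than 0, and a minimum wait before each recovery attempt) fit together as in the Python sketch below. It is a hypothetical model, not the Snap's code: `write_with_retries`, `open_target`, and the choice to retry on `OSError` are assumptions. The input is spooled to a temporary file only when retries are enabled, because a stream can be read only once and each retry must replay it from the beginning.

```python
import io
import shutil
import tempfile
import time
from pathlib import Path
from typing import BinaryIO, Callable


def write_with_retries(
    source: BinaryIO,
    open_target: Callable[[], BinaryIO],
    retries: int = 0,
    interval_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Copy source to a target, retrying on OSError. Returns the number of retries used."""
    if retries < 0 or interval_s < 1:  # minimum values 0 and 1
        raise ValueError("retries must be >= 0 and interval_s >= 1")
    if retries > 0:
        # Buffer the input locally so that every attempt can replay it
        spool = tempfile.TemporaryFile()
        shutil.copyfileobj(source, spool)
        source = spool
    attempt = 0
    try:
        while True:
            if retries > 0:
                source.seek(0)  # replay the buffered input from the start
            try:
                with open_target() as target:
                    shutil.copyfileobj(source, target)
                return attempt
            except OSError:
                if attempt >= retries:
                    raise
                attempt += 1
                sleep(interval_s)  # wait at least the retry interval
    finally:
        if retries > 0:
            source.close()


# Usage: a hypothetical target that drops the connection on the first two attempts
class FailingTarget(io.BytesIO):
    def write(self, data):
        raise OSError("simulated network failure")


attempts = []

with tempfile.TemporaryDirectory() as tmp:
    out_path = Path(tmp) / "out.bin"

    def open_target():
        attempts.append(1)
        return FailingTarget() if len(attempts) < 3 else open(out_path, "wb")

    used = write_with_retries(io.BytesIO(b"payload"), open_target, retries=3, sleep=lambda s: None)
    content = out_path.read_bytes()

print(used, content)  # 2 b'payload'
```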

File permissions for various users

Specify the permissions to assign to the file.

Note:

- Supported for sftp, ftp, ftps, file, and hdfs protocols only.

- FTP/FTPS servers on Windows machines may not be supported.


User type

Specify the user type to which the permissions apply. This field is case-insensitive. Suggested options are:

- owner

- group

- others

Note: Specify at most one row per user type.

File permissions

Specify any combination of {read, write, execute}, separated by a plus sign (+). This field is case-insensitive. Suggested options are:

- read

- write

- execute

- read+write

- read+execute

- write+execute

- read+write+execute
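One way to see how the rows combine is to translate them into a POSIX octal mode, as `chmod` would use. This Python sketch is an illustrative assumption: the Snap's actual mapping for each protocol is not documented here, user types without a row are treated as having no permissions, and `rows_to_mode` is a hypothetical helper.

```python
from typing import Iterable, Tuple

PERMISSION_BITS = {"read": 4, "write": 2, "execute": 1}
USER_SHIFT = {"owner": 6, "group": 3, "others": 0}


def rows_to_mode(rows: Iterable[Tuple[str, str]]) -> int:
    """Combine (user type, permissions) rows into a POSIX mode such as 0o754."""
    mode = 0
    seen = set()
    for user_type, permissions in rows:
        user = user_type.strip().lower()  # user type is case-insensitive
        if user not in USER_SHIFT:
            raise ValueError(f"unknown user type: {user_type!r}")
        if user in seen:
            raise ValueError(f"at most one row per user type: {user_type!r}")
        seen.add(user)
        bits = 0
        for name in permissions.lower().split("+"):  # e.g. "read+write"
            name = name.strip()
            if name not in PERMISSION_BITS:
                raise ValueError(f"unknown permission: {name!r}")
            bits |= PERMISSION_BITS[name]
        mode |= bits << USER_SHIFT[user]
    return mode


mode = rows_to_mode([("owner", "read+write+execute"), ("Group", "read+execute"), ("others", "read")])
print(oct(mode))  # 0o754
```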

Create directory if not present

Select this checkbox to enable the Snap to create a new directory if the specified directory path does not exist. This field is not supported for HTTP, HTTPS, SLDB and SMB file protocols.

Default value: Not selected

Specify the flush interval, in kilobytes, during the file upload. The Snap flushes the output stream to the target file server each time this amount of data has been written. If the value is 0, the Snap flushes as often as possible, after each byte block is written; the larger the value, the less often the Snap flushes. Leave the property at the default value of -1 for no flushing during the upload. Flushing may help if the file upload experiences intermittent failures; however, more frequent flushes slow down the upload.

Default value: -1

Example: 100
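The flush rule can be modeled as a counter of bytes written since the last flush. This Python sketch is a hypothetical model of the behavior described above, not the Snap's code; `upload` is an illustrative helper, and it assumes that 1 kilobyte means 1024 bytes.

```python
import io
from typing import BinaryIO, Iterable


def upload(blocks: Iterable[bytes], out: BinaryIO, flush_interval_kb: int = -1) -> int:
    """Write blocks to out, flushing every flush_interval_kb kilobytes; return the flush count."""
    flushes = 0
    pending = 0  # bytes written since the last flush
    threshold = flush_interval_kb * 1024  # assumes 1 kilobyte = 1024 bytes
    for block in blocks:
        out.write(block)
        if flush_interval_kb < 0:
            continue  # -1 (default): no flush during the upload
        pending += len(block)
        if pending >= threshold:  # 0: flush after every block
            out.flush()
            flushes += 1
            pending = 0
    return flushes


blocks = [b"x" * 512] * 8  # 4 KiB in 512-byte blocks
for interval in (-1, 0, 1, 2):
    print(interval, upload(blocks, io.BytesIO(), interval))
# -1 0
# 0 8
# 1 4
# 2 2
```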


Examples

- For SFTP files, if you attempt to write a file into a directory where you do not have write access, the write operation fails with an "access denied" error. When you obtain SFTP account credentials, it is also important to know where your home directory is, for example, sftp://ftp.snaplogic.com/home/mrtest for the username "mrtest".

- HDFS Example

For HDFS file access, use a SnapLogic on-premises Groundplex, and make sure that its instance is within the Hadoop cluster and that SSH authentication has already been established. You can access HDFS files in the same way as other file protocols in the File Reader and File Writer Snaps; no account is needed in the Snap.

Note: HDFS 2.4.0 is supported for the hdfs protocol.

An example for HDFS is:

hdfs://<hostname>:<port number>/<path to folder>/<filename>

For example, if a Cloudera Hadoop NameNode is installed on AWS EC2 with the hostname "ec2-54-198-212-134.compute-1.amazonaws.com" and port number 8020, enter:

hdfs://ec2-54-198-212-134.compute-1.amazonaws.com:8020/user/john/input/sample.csv


Troubleshooting

| Error | Reason | Resolution |
|---|---|---|
| Could not evaluate expression: filepath Mismatched input ':' expecting {<EOF>, '\|\|', '&&', '^', '==', '!=', '>', '<', '>=', '<=', '+', '-', '*', '/', '%', '?', '[', PropertyRef}. | The expression toggle (=) is selected on the File name field, so the Snap tries to evaluate the file path as an expression. | Check the expression syntax, or click the toggle to take the field out of expression mode. |
| Failure: | The expression toggle (=) is selected on the File name field, so the Snap tries to evaluate the file name as an expression. | Check the expression syntax and data types, or click the toggle to take the field out of expression mode. |
