...


Overview

Use this Snap to run multiple child Pipelines through one parent Pipeline.

| Note |

|---|

| To execute a SnapLogic Pipeline that is exposed as a REST service, use the REST Get Snap instead. |

Key Features

The Pipeline Execute Snap enables you to do the following:

- Structure complex Pipelines into smaller segments through child Pipelines.

- Initiate parallel data processing using the Pooling option.

- Orchestrate data processing across nodes, within the Snaplex or across Snaplexes.

- Distribute global values through Pipeline parameters across a set of child Pipeline Snaps.

Supported Modes for Pipelines

...

Reuse mode: In reuse mode, child Pipelines are started and each child Pipeline instance can process multiple input documents from the parent. If you set the pool size to n (default 1), then n child Pipelines are started and they process the input documents in a streaming manner. The child Pipeline must have an unlinked input view to be used in reuse mode.

Reuse mode performs better, but it requires the child Pipeline to be a streaming Pipeline.

| Info |

|---|

| This Snap replaces the ForEach and Task Execute Snaps, as well as the Nested Pipeline mechanism. |

...

Resumable Child Pipeline: Resumable Pipeline does not support the Pipeline Execute Snap. A regular mode pipeline can use the Pipeline Execute Snap to call a Resumable mode pipeline. If the child pipeline is a Resumable pipeline, then the batch size cannot be greater than one.

...

Works in Ultra Task Pipelines with the following exception: Reusing a runtime on another Snaplex is not supported. See Snap Support for Ultra Pipelines.

Without reuse enabled, the one-in-one-out requirement for Ultra means that batching is not supported. A runtime check fails the parent Pipeline if the batch size is set to greater than 1. This is similar to the current behavior of DB insert and other Snaps in Ultra mode.

...

Pooling Enabled: The pool size and batch size can both be set to greater than one. In that case, the input documents are spread across the child Pipelines in a round-robin fashion, so that if the child Pipeline makes slow external calls, the processing is spread across the children in parallel. The limitation of this option is that the document order is not maintained.
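The round-robin spreading described above can be pictured with a small Python sketch. This is only an illustration of the idea, not the Snap's actual implementation; the function name `distribute_round_robin` and the dictionary-of-lists result are assumptions made for this example.

```python
from itertools import cycle


def distribute_round_robin(documents, pool_size):
    """Assign each document to a child execution index in round-robin order.

    Hypothetical helper: models the distribution only, not real child Pipelines.
    """
    if pool_size < 1:
        raise ValueError("pool_size must be at least 1")
    assignments = {child: [] for child in range(pool_size)}
    # cycle() yields 0, 1, ..., pool_size - 1, 0, 1, ... for as long as documents last.
    for child, doc in zip(cycle(range(pool_size)), documents):
        assignments[child].append(doc)
    return assignments


if __name__ == "__main__":
    docs = [{"id": i} for i in range(5)]
    print(distribute_round_robin(docs, 2))
```

Because each child finishes its documents at its own pace, the combined output of the children can arrive in a different order than the input, which is the ordering limitation noted above.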

Prerequisites

None.

Limitations

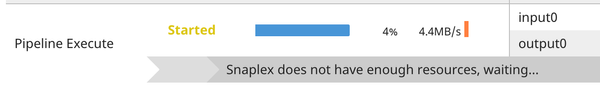

If there are not enough Snaplex nodes to execute the Pipeline on the Snaplex, then the Snap waits until Snaplex resources are available. When this situation occurs, the following message appears in the execution statistics dialog:

Because a large number of Pipeline runtimes can be generated by this Snap, only the last 500 completed child Pipeline runs are saved for inspection in the Dashboard.

- The Pipeline Execute Snap cannot exceed a depth of 64 child Pipelines before they begin failing.

- Child Pipelines do not display data preview details. You can view the data preview for any child Pipeline when the Pipeline Execute Snap completes execution in the parent Pipeline.

Ultra Pipelines do not support batching.

Unlike the Group By N Snap, when you configure the Batch field, the Pipeline Execute Snap processes the documents one by one and transfers each document to the child Pipeline as soon as the parent Pipeline receives it. When the batch is over or the input stream ends, the child Pipeline is closed.

Snap Views

...

Binary or Document

...

- Min: 0

- Max: 1

...

- Mapper Snap

- Copy Snap

...

Binary or Document

...

- Min: 0

- Max: 1

...

- Mapper Snap

- Router Snap

- If the child Pipeline has an unlinked output, then documents or binary data from that view are passed out of this view.

- If the child Pipeline does not have an unlinked view, then output document or binary data is generated for successful runtimes with the run_id field.

- Unsuccessful Pipeline runs write a document to the error view. If Reuse executions to process documents is unselected, then the original input document or binary data is added to the output document.

| Note |

|---|

| If the value in the Pool Size field is greater than one, then the order of records in the output is not guaranteed to be the same as that of the input. |

...

Error handling is a generic way to handle errors without losing data or failing the Snap execution. You can handle the errors that the Snap might encounter when running the Pipeline by choosing one of the following options from the When errors occur list under the Views tab:

Stop Pipeline Execution: Stops the current Pipeline execution when the Snap encounters an error.

Discard Error Data and Continue: Ignores the error, discards that record, and continues with the remaining records.

Route Error Data to Error View: Routes the error data to an error view without stopping the Snap execution.

Learn more about Error handling in Pipelines.

Snap Settings


...

Label*

...

Pipeline*

...

Specify the absolute or relative (expression-based) path of the child Pipeline to run. If you only specify the Pipeline name, the Snap searches for the Pipeline in the following folders in this order:

- Current project

- Shared project-space

- Shared folder of the Org

You can specify the absolute path to the target project using the Org/project_space/project notation.
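The search order for a bare Pipeline name can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the function `resolve_pipeline`, the set of existing paths, and the exact locations of the shared folders (`Org/project_space/shared` and `Org/shared`) are hypothetical stand-ins, not the Snap's internal lookup.

```python
def resolve_pipeline(name, existing_paths, org, project_space, project):
    """Return the first matching path for a Pipeline name, or None if not found.

    Hypothetical helper; existing_paths is a set of full paths used for the demo.
    """
    if "/" in name:
        # Treated here as an already-qualified Org/project_space/project path.
        return name if name in existing_paths else None
    search_order = [
        f"{org}/{project_space}/{project}",  # 1. current project
        f"{org}/{project_space}/shared",     # 2. shared project-space (assumed layout)
        f"{org}/shared",                     # 3. shared folder of the Org (assumed layout)
    ]
    for folder in search_order:
        candidate = f"{folder}/{name}"
        if candidate in existing_paths:
            return candidate
    return None


if __name__ == "__main__":
    paths = {"Acme/Sales/shared/load", "Acme/shared/load"}
    print(resolve_pipeline("load", paths, "Acme", "Sales", "Orders"))
```

In this sketch the first match wins, so a Pipeline in the current project shadows a Pipeline with the same name in either shared location.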

You can also dynamically choose the Pipeline to run by entering an expression in this field when expressions are enabled. For example, to run all of the Pipelines in a project, you can connect the SnapLogic List Snap to this Snap to retrieve the list of Pipelines in the project and run each one.

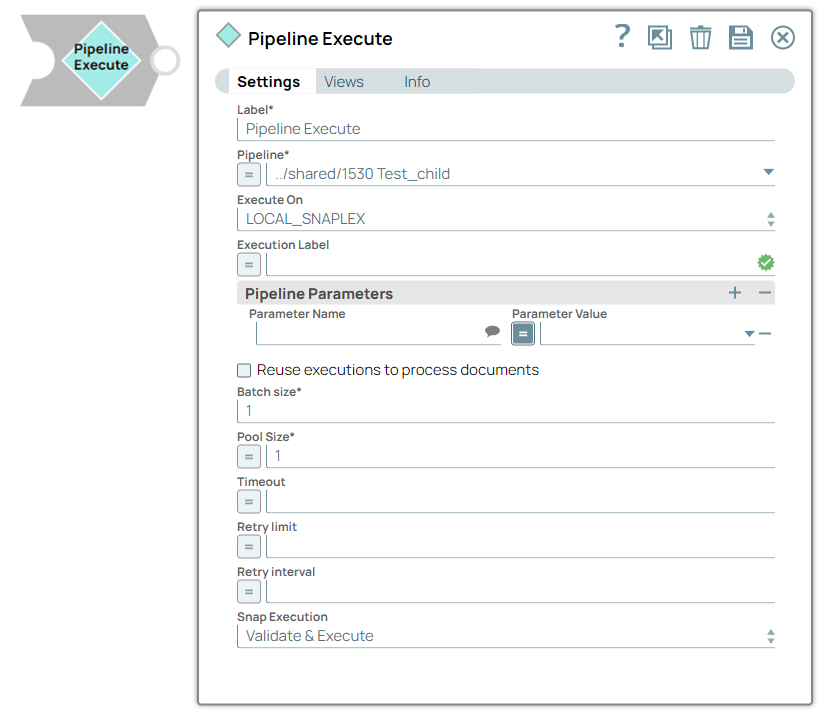

Execute On

...

Select one of the following Snaplex options to specify the target Snaplex for the child Pipeline:

- SNAPLEX_WITH_PATH. Runs the child Pipeline on a user-specified Snaplex. When you choose this option, the Snaplex Path field appears.

- LOCAL_NODE. Runs the child Pipeline on the same node as the parent Pipeline.

- LOCAL_SNAPLEX. Runs the child Pipeline on one of the available nodes in the same Snaplex as the parent Pipeline.

...

Best Practices

When choosing which Snaplex to run the child Pipeline on, always consider the LOCAL_NODE option first. With this selection, the child Pipeline runs on the same Snaplex node as the parent Pipeline. Because no network traffic is required to start the child Pipeline on another node, running on the same node makes the execution faster and more reliable.

In both of these cases, you do not need to set an Org-specific property. This scenario facilitates usage for the user importing the Pipeline.

However, if you do need to run the child Pipeline on a different Snaplex than the parent Pipeline, then select SNAPLEX_WITH_PATH and enter the target Snaplex name.

In this case, you can take advantage of the following strategies:

- Use a relative path for the Snaplex name and try to use the same Snaplex names in your development, QA, stage, or production Orgs. For example, if the Snaplex property is set to cloud, the Pipeline is run on the Snaplex named cloud in the shared directory of the current Org.

- Use a Pipeline parameter or expression library to specify the path to the Snaplex.

- For complicated setups, you might want to create an expression library file that contains the details of the environment and import that into the Pipeline. In this model, each Org would have its own version of the library with the appropriate settings for the environment. The Pipeline can then be moved around to each Org without needing to be updated.

| Note | ||

|---|---|---|

| ||

We do not recommend using the |

Snaplex Path

...

Appears when you select the SNAPLEX_WITH_PATH option for Execute On.

Enter the name of the Snaplex on which you want the child Pipeline to run. Click to select from the list of Snaplex instances available in your Org.

| Note |

|---|

If you do not provide a Snaplex Path, then by default, the child Pipeline runs on the same node as the parent Pipeline. |

Execution Label

...

Specify the label to display in the Pipeline view of the Dashboard. You can use this field to differentiate one Pipeline execution from another.

Pipeline Parameters

...

Use this field set to define the Pipeline Parameters for the Pipeline selected in the Pipeline field. When you select Reuse executions to process documents, you cannot change parameter values from one Pipeline invocation to the next.

...

Parameter Name

Default Value: N/A

Example: Postal_Code

...

Enter the name of the parameter. You can select the Pipeline Parameters defined for the Pipeline selected in the Pipeline field.

...

Parameter Value

Default Value: N/A

Example: 94402

...

Enter the value for the Pipeline Parameter, which can be an expression based on incoming documents or a constant.

If you configure the value as an expression based on the input, then each incoming document or binary data is evaluated against that expression when invoking the Pipeline. The result of the expression is JSON-encoded if it is not a string. The child Pipeline then needs to use the JSON.parse() expression to decode the parameter value.

When Reuse executions to process documents is enabled, the parameter values cannot change from one invocation to the next.
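The encoding rule for parameter values can be illustrated with a short Python sketch. It mirrors the behavior described above (strings pass through, other values are JSON-encoded, and the child decodes them); the function names are hypothetical, and `json.loads` stands in for the `JSON.parse()` expression that the child Pipeline would use.

```python
import json


def encode_parameter(value):
    """Parent side: strings pass through unchanged, other values are JSON-encoded."""
    return value if isinstance(value, str) else json.dumps(value)


def decode_parameter(raw):
    """Child side: decode a JSON-encoded parameter (analogous to JSON.parse())."""
    return json.loads(raw)


if __name__ == "__main__":
    encoded = encode_parameter({"Postal_Code": 94402})
    print(encoded)                    # a JSON string
    print(decode_parameter(encoded))  # back to a dictionary
```

The point of the sketch is that a Pipeline parameter always arrives in the child as a string, so any structured value has to be decoded explicitly before use.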

Reuse executions to process documents

...

Select this option to start a child Pipeline and pass multiple inputs to the Pipeline. Reusable executions continue to live until all of the input documents to this Snap have been fully processed.


Batch size*

...

Enter the number of documents to include in each batch. If Batch size is set to N, then N input documents are sent to each child Pipeline that is started. After N documents, the child Pipeline input view is closed until the child Pipeline completes its execution. The output of the child Pipeline (one or more documents) passes to the Pipeline Execute output view. New child Pipelines are started after the original Pipeline completes.


| Info |

|---|

| For existing Pipeline Execute Snap instances, null and zero values for the property are treated as a Batch size of 1. The behavior of existing Pipelines should not change; the child Pipeline still receives one document per execution. |
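As a rough illustration of the Batch size semantics, the following Python sketch groups an input stream into batches of N, where each batch stands for one child Pipeline execution, and treats null or zero as 1. The function names are hypothetical. Note that the Snap itself streams documents to the child one by one rather than collecting them into lists; the lists here only mark which documents share an execution.

```python
from itertools import islice


def effective_batch_size(value):
    """Treat null (None) and zero as a batch size of 1."""
    return 1 if not value else int(value)


def batches(documents, batch_size):
    """Yield lists of up to N documents; each list represents one child execution."""
    size = effective_batch_size(batch_size)
    iterator = iter(documents)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return  # input stream ended
        yield batch


if __name__ == "__main__":
    for number, batch in enumerate(batches(range(5), 2), start=1):
        print(f"child execution {number}: {batch}")
```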

Pool Size

...

Specify an execution pool size to process multiple input documents or binary data concurrently. When the pool size is greater than one, the Snap starts Pipeline executions as needed, up to the specified pool size.

When you select Reuse executions to process documents, the Snap starts a new execution only if all executions are busy working on documents or binary data and the total number of executions is below the pool size.
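The rule for starting executions in reuse mode can be sketched as a small Python class. This is an assumption-laden model of the decision only: `ExecutionPool`, `acquire`, and `release` are hypothetical names, and real executions are represented by simple busy flags.

```python
from dataclasses import dataclass, field


@dataclass
class ExecutionPool:
    """Hypothetical model of a reuse-mode execution pool."""
    pool_size: int
    busy: list = field(default_factory=list)  # one flag per started execution

    def acquire(self):
        """Return the index of the execution to use, or None if the caller must wait."""
        # Prefer an execution that is already running but idle.
        for index, is_busy in enumerate(self.busy):
            if not is_busy:
                self.busy[index] = True
                return index
        # All executions are busy: start a new one only while below the pool size.
        if len(self.busy) < self.pool_size:
            self.busy.append(True)
            return len(self.busy) - 1
        return None

    def release(self, index):
        """Mark an execution as idle again."""
        self.busy[index] = False


if __name__ == "__main__":
    pool = ExecutionPool(pool_size=2)
    print(pool.acquire(), pool.acquire(), pool.acquire())
```

In this model the pool grows lazily, so a lightly loaded parent Pipeline might never start more than one child execution even with a larger pool size.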

Timeout

...

Enter the maximum number of seconds for which the Snap must wait for the child Pipeline to complete the runtime. If the child Pipeline does not complete the runtime before the timeout, the execution process stops and is marked as failed.

...


Overview

The Pipeline Execute Snap executes a pipeline in a specific Snaplex with the specified parameters. You can use this Snap to execute multiple child pipelines from a single parent pipeline. Configure the Snap to execute the pipeline and data input.

| Info |

|---|

To execute a SnapLogic pipeline that is exposed as a REST service, use the HTTP Client Snap. |

Key Features

The Pipeline Execute Snap enables you to:

Structure complex pipelines into smaller segments through child pipelines

Initiate parallel data processing using the pooling option

Orchestrate data processing across nodes, in the Snaplex or across Snaplexes

Distribute global values through pipeline parameters across a set of child pipeline Snaps

Supported Modes for Pipelines

| Modes | Description |
|---|---|
| Standard mode (default) | |
| Reuse mode | |
| Resumable Child pipeline | |
| Ultra Task pipelines | |
| ELT Mode | |
| Pooling Enabled | |

| Info |

|---|

Replaces Deprecated Snaps: This Snap replaces the ForEach and Task Execute Snaps and the Nested pipeline mechanism. |

Prerequisites

None.

Limitations

If there are insufficient Snaplex nodes to execute the pipeline, the Snap waits until the resources become available. In this scenario, a message appears in the execution statistics dialog.

Only the last 100 completed child pipeline runs are saved for inspection in the Dashboard because this Snap generates many pipeline runtimes.

The Pipeline Execute Snap cannot exceed a depth of 64 child pipelines before they begin to fail.

The child pipelines do not display data preview details. However, you can view the data preview for any child pipeline after the Pipeline Execute Snap completes execution in the parent pipeline.

Ultra Pipelines do not support batching.

Unlike the Group By N Snap, when you configure the Batch field, the documents are processed one by one by the Pipeline Execute Snap and then transferred to the child pipeline when the parent pipeline receives it. The child pipeline closes when the batch or input stream ends.

Known Issues

None.

Snap Views

Type | Format | Number of Views | Examples of Upstream and Downstream Snaps | Description |

|---|---|---|---|---|

Input | Binary or Document |

|

| The document or binary data to send to the child pipeline. Retry is not supported if the input view is a Binary data type |

Output | Binary or Document |

|

|

|

Error | Error handling is a generic way to handle errors without losing data or failing the Snap execution. You can handle the errors that the Snap might encounter when running the Pipeline by choosing one of the following options from the When errors occur list under the Views tab:

Learn more about Error handling in Pipelines. | |||

Snap Settings


...

Field Name | Field Type | Field Dependency | Description | |

|---|---|---|---|---|

Label* Default Value: Pipeline Execute | String | None. | Specify the name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your pipeline. | |

Pipeline* Default Value: N/A | String/Expression | None | Specify the absolute or relative (expression-based) path of the child pipeline to run. If you specify only the pipeline name, the Snap searches for the pipeline in the following folders in this order:

You can specify the absolute path to the target project using the Org/project_space/project notation. You can also dynamically choose the pipeline to run by entering an expression in this field when Expressions are enabled. For example, to run all of the pipelines in a project, you can connect the SnapLogic List Snap to this Snap to retrieve the list of pipelines in the project and run each one. | |

Execute On Default Value: SNAPLEX_WITH_PATH | Dropdown list | N/A | Select one of the following Snaplex options to specify the target Snaplex for the child pipeline:

For more information, refer to the Best Practices. | |

Snaplex Path Default Value:N/A | String/Expression | Appears when you select SNAPLEX_WITH_PATH for Execute On. | Enter the name of the Snaplex on which you want the child pipeline to run. Click | |

Execution Label Default Value: N/A | String/Expression | N/A | Specify the label to display in the Pipeline view of the Dashboard. You can use this field to differentiate each pipeline execution. | |

Pipeline Parameters | Use this fieldset to define the Pipeline Parameters for the pipeline selected in the Pipeline field. When you select Reuse executions to process documents, you cannot change parameter values from one Pipeline invocation to the next. | |||

Parameter Name Default Value: N/A | String | Debug mode checkbox is not selected. | Enter the name of the parameter. Select the defined Pipeline Parameters in the Pipeline field. | |

Parameter Value Default Value: N/A | ||||

...

94002 | String | None | Enter the |

...

| Note |

|---|

This feature is incompatible with reusable executions. Retry is not supported if the input view is a Binary data type. |

Retry interval

...

Enter the minimum number of seconds that the Snap must wait between two retry requests. A retry happens only when the previous attempt results in an error.

| Note |

|---|

This feature is incompatible with reusable executions. Retry is not supported if the input view is a Binary data type. |
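The interaction between the retry limit and retry interval settings can be sketched in Python as a simple loop. This is a minimal sketch under assumptions, not the Snap's implementation: `run_with_retry` and `run_child` are hypothetical names, and raising an exception after the last attempt stands in for writing an error document to the error view.

```python
import time


def run_with_retry(run_child, retry_limit, retry_interval, sleep=time.sleep):
    """Call run_child(); after a failure, wait retry_interval seconds and retry.

    At most retry_limit retries are made after the first attempt.
    The sleep argument is injectable so the loop can be tested without waiting.
    """
    retries = 0
    while True:
        try:
            return run_child()
        except Exception as error:
            if retries >= retry_limit:
                # Stand-in for routing an error document to the error view.
                raise RuntimeError(f"child failed after {retries} retries") from error
            retries += 1
            sleep(retry_interval)


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_child():
        attempts["count"] += 1
        if attempts["count"] < 3:
            raise ConnectionError("simulated network failure")
        return {"status": "Completed"}

    print(run_with_retry(flaky_child, retry_limit=3, retry_interval=0))
```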

Snap Execution

...


Guidelines for Child Pipelines

No unlinked binary input or output views. When you enter an expression in the Pipeline field, the Snap needs to contact the SnapLogic cloud servers to load the Pipeline runtime information. Also, if Reuse executions to process documents is enabled, the result of the expression cannot change between documents.

- When reusing Pipeline executions, there must be one unlinked input view and zero or one unlinked output view.

- If you do not enable Reuse executions to process documents, then use at most one unlinked input view and one unlinked output view.

- If the child Pipeline has an unlinked input view, make sure the Pipeline Execute Snap has an input view because the input document or binary data is fed into the child Pipeline.

- If you rename the child Pipeline, then you must manually update the reference to it in the Pipeline field; otherwise, the connection between child and parent Pipeline breaks.

- The child Pipeline is executed in preview mode when the Pipeline containing the Pipeline Execute Snap is saved. Consequently, any Snaps marked not to execute in preview mode are not executed and the child Pipeline only processes 50 documents.

- You cannot have a Pipeline call itself: recursion is not supported.
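The view-count guidelines above can be expressed as a small check. The following Python sketch is only an illustration; `check_child_views` is a hypothetical helper that encodes the two rules about unlinked views, not a validation performed by SnapLogic with this name.

```python
def check_child_views(reuse, unlinked_inputs, unlinked_outputs):
    """Return a list of problems; an empty list means the view counts are acceptable."""
    problems = []
    if reuse:
        # Reuse mode: exactly one unlinked input view, zero or one unlinked output view.
        if unlinked_inputs != 1:
            problems.append("reuse requires exactly one unlinked input view")
        if unlinked_outputs > 1:
            problems.append("reuse allows at most one unlinked output view")
    else:
        # Without reuse: at most one unlinked input view and one unlinked output view.
        if unlinked_inputs > 1:
            problems.append("at most one unlinked input view is allowed")
        if unlinked_outputs > 1:
            problems.append("at most one unlinked output view is allowed")
    return problems


if __name__ == "__main__":
    print(check_child_views(reuse=True, unlinked_inputs=0, unlinked_outputs=1))
```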

Schema Propagation Guidelines

...

The Pipeline Execute Snap is capable of propagating schema in both directions: upstream as well as downstream. See the example Schema Propagation in Parent Pipeline – 3.


Ultra Mode Compatibility

- If you selected Reuse executions to process documents for this Snap in an Ultra Pipeline, then the Snaps in the child Pipeline must also be Ultra-compatible.

- If you need to use Snaps that are not Ultra-compatible in an Ultra Pipeline, you can create a child Pipeline with those Snaps and use a Pipeline Execute Snap with Reuse executions to process documents disabled to invoke the Pipeline. Since the child Pipeline is executed for every input document, the Ultra Pipeline restrictions do not apply.

For example, if you want to run an SQL Select operation on a table that would return more than one document, you can put a Select Snap followed by a Group By N Snap with the group size set to zero in a child Pipeline. In this configuration, the child Pipeline performs the select operation during execution, and then the Group By N Snap gathers all of the outputs into a single document or as binary data. That single output document or binary data can then be used as the output of the Ultra Pipeline.
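The one-in-one-out pattern in this example can be sketched in Python. The sketch assumes a hypothetical `select_rows` callable in place of the Select Snap and a hypothetical `rows` field in place of the Group By N Snap's target field; it only shows that many rows end up in exactly one output document per request.

```python
def run_child(request, select_rows):
    """Model of the child Pipeline: many selected rows in, exactly one document out."""
    rows = select_rows(request)   # stand-in for the Select Snap; may return many rows
    return {"rows": list(rows)}   # stand-in for Group By N with the group size set to zero


if __name__ == "__main__":
    def fake_select(request):
        # Hypothetical data source used only for this demonstration.
        return ({"id": i, "table": request["table"]} for i in range(3))

    print(run_child({"table": "contacts"}, fake_select))
```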

Creating a Child Pipeline by Accessing Pipelines in the Pipeline Catalog in Designer

...

value for the Pipeline Parameter, which can be an expression based on incoming documents or a constant. If you configure the value as an expression based on the input, then each incoming document or binary data is evaluated against that expression when you invoke the pipeline. The result of the expression is JSON-encoded if it is not a string. The child Pipeline then needs to use the JSON.parse() expression to decode the parameter value. When Reuse executions to process documents is enabled, the parameter values cannot change from one invocation to the next. | ||||||

Reuse executions to process documents Default Value: Deselected | Checkbox | None | Select this checkbox to start a child pipeline and pass multiple inputs to the pipeline. Reusable executions continue to live until all of the input documents to this Snap are fully processed.

| |||

Batch size* Default Value: 1 | Integer/Expression | None | Specify the number of documents in the batch size. If Batch Size is set to N, then N input documents are sent to each child pipeline that is started. After N documents, the child pipeline input view is closed until the child pipeline completes its execution. The output of the child pipeline (one or more documents) passes to the Pipeline Execute output view. New child pipelines are started after the original pipeline is complete.

| |||

Pool Size* Default Value: 1 | Integer/Expression | None | Specify an execution pool size to process multiple input documents or binary data concurrently. When the pool size is greater than one, the Snap starts Pipeline executions as needed up to the specified pool size. When you select Reuse executions to process documents, the Snap starts a new execution only if all executions are busy working on documents or binary data and the total number of executions is below the pool size. | |||

Timeout (in seconds) Default Value: N/A | Integer/Expression | N/A | Specify the number of seconds for which the Snap must wait for the child pipeline to complete the runtime. If the child pipeline does not complete the runtime before the timeout, the execution process stops and is marked as failed. | |||

Retry limit Default Value: N/A | Integer/Expression | N/A | Specify the maximum number of retry attempts that the Snap must make in the case of a failure. If the child pipeline does not execute successfully, an error document is written to the error view. If the child pipeline is not in a completed state, then it will retry. The pipeline failure at the application level could have various causes, including network failures.

| |||

Retry interval Default Value: N/A | String/Expression | N/A | Specify the minimum number of seconds the Snap must wait between two retry requests. A retry happens only when the previous attempt results in an error.

| |||

Snap Execution Default Value: | Dropdown list | N/A | Select one of the following three modes in which the Snap executes. The available options are:

| |||

Troubleshooting

Error | Reason | Resolution |

|---|---|---|

Account validation failed. | The Pipeline ended before the batch could complete execution because of a connection error. | Verify that the Refresh token field is configured to handle the inputs properly. If you are not sure when the input data is available, configure this field as zero so the connection is always open. |

Access pipelines in the Pipeline Catalog in the Designer to create a Child pipeline

You can now browse the pipeline catalog for the target child pipeline and then select, drag, and drop it into Canvas. The Designer automatically adds the child pipeline using the Pipeline Execute Snap. You can preview a child pipeline by hovering over a Pipeline Execute Snap while the parent pipeline is open on the Designer.

...

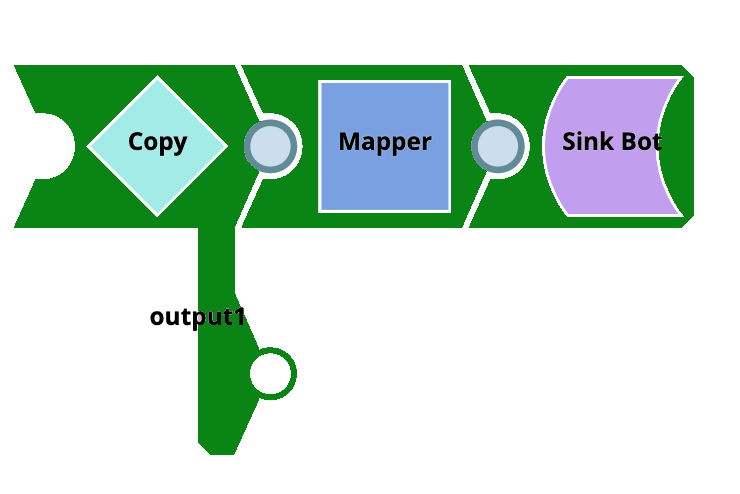

Return child pipeline output to the parent pipeline

A common use case for the Pipeline Execute Snap is to run a child Pipeline whose output is immediately returned to the parent pipeline for further processing. You can achieve this return with the following Pipeline design for the child Pipeline.

In this example, the document in this child Pipeline is sent to the parent Pipeline through output1 of the child pipeline. Documents from any unconnected output view are returned to the parent Pipeline. You can use any Snap that completes execution in this way.

...

Execution States

When a Pipeline Execute Snap activates its child pipeline, you can view the status as it executes on the SnapLogic Designer canvas.

A child pipeline also reports its execution to the parent pipeline.

...

In the Monitor overview and Dashboard, you can hover over the status shown in the Status column for a pipeline with a Pipeline Execute Snap to see one of the following messages:

If the parent Pipeline has the status Failed shown in the Status column, the following message is displayed: One of the child Pipelines Failed.

If the parent Pipeline has the status Completed with Errors shown in the Status column, the following message is displayed: One of the child Pipelines completed with Errors.

These execution state messages apply even when the child pipeline does not appear on the Dashboard because of child pipeline execution limits.

The Monitor Execution overview does not include the Completed with Warnings status as a searchable status.

Examples

Run a Child pipeline multiple times

The PE_Multiple_Executions project demonstrates how to configure the Pipeline Execute Snap to execute a child pipeline multiple times. The project contains the following pipelines:

PE_Multiple_Executions_Child: This is a simple child pipeline that writes out a document with a static string and the number of input documents received by the Snap.

PE_Multiple_Executions_NoReuse_Parent: This parent pipeline executes the PE_Multiple_Executions_Child Pipeline five times. You can save the Pipeline to examine the output documents. Note that the output contains a copy of the original document, and the $inCount field is always set to one because the Pipeline was separately executed five times.

PE_Multiple_Executions_Reuse_Parent: This parent Pipeline executes the PE_Multiple_Executions_Child Pipeline once and feeds the child Pipeline execution five documents. You can save the Pipeline to examine the output documents. Note that the output does not contain a copy of the original document, and the $inCount field goes up for each document because the same Snap instance is being used to process each document.

PE_Multiple_Executions_UltraSplitAggregate_Parent: This parent Pipeline is an example of using Snaps that are not Ultra-compatible in an Ultra Pipeline. It can be turned into an Ultra Pipeline by removing the JSON Generator Snap at the head of the Pipeline and creating an Ultra Task.

PE_Multiple_Executions_UltraSplitAggregate_Child: A child Pipeline that splits an array field in the input document and sums the values of the $num field in the resulting documents.
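The difference between the NoReuse and Reuse parent Pipelines can be mimicked with a short Python simulation. This is an illustrative sketch, not the exported project: the `CountingChild` class, the `msg` and `original` field names, and the static string value are assumptions; only the `inCount` behavior follows the description above.

```python
class CountingChild:
    """Stand-in for the child pipeline: counts the documents one execution has seen."""

    def __init__(self):
        self.in_count = 0

    def process(self, doc):
        self.in_count += 1
        return {"msg": "static string", "inCount": self.in_count}


def run_without_reuse(docs):
    """One fresh child execution per document; the original input is attached."""
    outputs = []
    for doc in docs:
        child = CountingChild()
        result = child.process(doc)
        result["original"] = doc  # hypothetical field name for the attached input
        outputs.append(result)
    return outputs


def run_with_reuse(docs):
    """A single child execution processes every document."""
    child = CountingChild()
    return [child.process(doc) for doc in docs]


if __name__ == "__main__":
    documents = [{"n": i} for i in range(5)]
    print([out["inCount"] for out in run_without_reuse(documents)])
    print([out["inCount"] for out in run_with_reuse(documents)])
```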

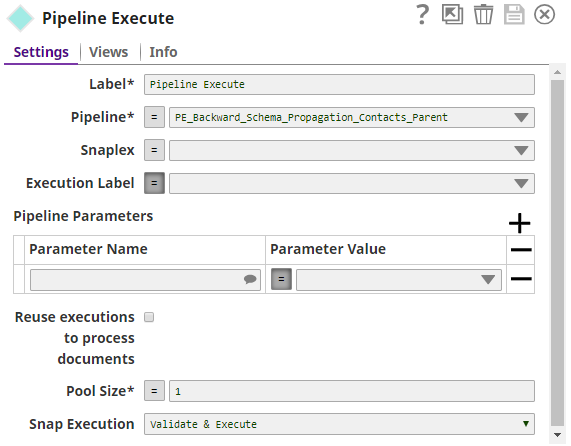

Propagate a Schema Backward

The project, PE_Backward_Schema_Propagation_Contacts, demonstrates the backward schema propagation feature of the Pipeline Execute Snap. It contains the following files:

PE_Backward_Schema_Propagation_Contacts_Parent

PE_Backward_Schema_Propagation_Contacts_Child

contact.schema (Schema file)

test.json (Output file)

The parent pipeline is shown below:

...

The child Pipeline is as shown below:

...

The Pipeline Execute Snap is configured as:

The following schema is provided in the JSON Formatter Snap. It has three properties: $firstName, $lastName, and $age. This schema is back-propagated to the parent pipeline.

...

The parent Pipeline must be validated in order for the child Pipeline's schema to be back-propagated to the parent Pipeline. Below is the Mapper Snap in the parent pipeline:

...

Notice that the Target Schema section shows the three properties of the schema in the child pipeline:

...

...

On execution, the data passed in the Mapper Snap will be written into the test.json file in the child Pipeline. The exported project is available in the Downloads section below.

Propagate Schema Backward and Forward

The project, PE_Backward_Forward_Schema_Propagation, demonstrates the Pipeline Execute Snap's capability of propagating schema in both directions: upstream and downstream. It contains the following Pipelines:

PE_Backward_Forward_Schema_Propagation_Parent

PE_Backward_Forward_Schema_Propagation_Child

The parent Pipeline is as shown below:

...

The Pipeline Execute Snap is configured to call the Pipeline schema-child. This child Pipeline consists of a Mapper Snap that is configured as shown below:

...

The Mapper Snaps upstream and downstream of the Pipeline Execute Snap, Mapper_InputSchemaPropagation and Mapper_TargetSchemaPropagation, are configured as shown below:

...

...

...

When the Pipeline is executed, data propagation takes place between the parent and child Pipeline:

The string expression $foo is propagated from the child Pipeline to the Pipeline Execute Snap.

The Pipeline Execute Snap propagates it to the upstream Mapper Snap (Mapper_InputSchemaPropagation), as visible in the Target Schema section. Here it is assigned the value 123.

This value is passed from the Mapper to the Pipeline Execute Snap, which internally passes it to the child Pipeline. Here, $foo is mapped to $bar. $baz is another string expression in the child Pipeline (assigned the value 2).

$bar and $baz are propagated to the Pipeline Execute Snap and then forward to the downstream Mapper Snap (Mapper_TargetSchemaPropagation). This can be seen in the Input Schema section of the Mapper Snap.
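The data flow in this example can be traced with a few lines of Python. This sketch models only the values that move between the Snaps, not the schema suggestion mechanism itself; the function `child_mapper` is a hypothetical stand-in for the Mapper Snap in the child Pipeline.

```python
def child_mapper(doc):
    """Stand-in for the child Pipeline's Mapper: maps $foo to $bar and adds $baz."""
    return {"bar": doc["foo"], "baz": 2}


if __name__ == "__main__":
    upstream_output = {"foo": 123}                    # set in Mapper_InputSchemaPropagation
    downstream_input = child_mapper(upstream_output)  # seen by Mapper_TargetSchemaPropagation
    print(downstream_input)
```

The field expected by the child ($foo) is what the upstream Mapper sees as its target schema, and the fields produced by the child ($bar and $baz) are what the downstream Mapper sees as its input schema.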

Migrating from Legacy-Nested pipelines

The Pipeline Execute Snap can replace some uses of the Nested Pipeline mechanism and the ForEach and Task Execute Snaps. For now, this Snap only supports child Pipelines with unlinked document views (binary views are not supported). If these limitations are not a problem for your use case, read on to find out how to transition to this Snap and the advantages of doing so.

Nested Pipeline

Converting a Nested Pipeline requires the child pipeline to be adapted to have no more than a single unlinked document input view and no more than a single unlinked document output view. If the child pipeline can be made compatible, then you can use this Snap by dropping it on the canvas and selecting the child pipeline for the Pipeline property.

You will also want to enable the Reuse property to preserve the existing execution semantics of Nested pipelines. The advantages of using this Snap over Nested pipelines are:

Multiple executions can be started to process documents in parallel.

The Pipeline to execute can be determined dynamically by an expression.

The original document or binary data is attached to any output documents or binary data if reuse is not enabled.

ForEach

Converting the ForEach Snap to the Pipeline Execute Snap is a simple process. Select the pipeline to run and populate the parameters. The benefits of using the Pipeline Execute Snap compared to the ForEach Snap are:

Documents or binary data fed into the Pipeline Execute Snap can be sent to the child Pipeline execution through an unlinked input view in the child.

Documents or binary data sent from an unlinked output view in the child Pipeline execution are written out of the Pipeline Execute Snap's output view.

The execution label can be changed.

The Pipeline to execute can be determined dynamically by an expression.

Executing a Pipeline does not require communication with the cloud servers.

Task Execute

Converting a Task Execute Snap to a Pipeline Execute Snap is also straightforward because the properties are similar. To start, you only need to select the Pipeline you want to use; you no longer have to create a Triggered Task. If you set the Batch Size property in the Task Execute Snap to one, then do not enable the Reuse property. If the Batch Size was greater than one, then enable Reuse. The Pipeline parameters should be the same between the Snaps. The advantages of using this Snap compared to the Task Execute Snap are:

A task does not need to be created.

You can start multiple executions simultaneously to process documents in parallel.

You can process an unlimited number of documents with a single execution (that is, there is no batch size).

The execution label can be changed.

You can determine the pipeline to execute dynamically by an expression.

The original document is attached to any output documents if reuse is not enabled.

You do not need to communicate with the cloud servers to execute a pipeline.

Converting a pipeline that uses the Router Snap in "auto" mode can be done by moving the duplicated portions of the Pipeline into a new pipeline and then calling that Pipeline using a Pipeline Execute Snap. After refactoring the Pipeline, you can adjust the "Pool Size" of the Pipeline Execute Snap to control how many operations are done in parallel. The advantages of using this Snap over an "Auto" Router are:

De-duplication of Snaps in the pipeline.

Adjustment of the level of parallelism is trivial: simply change the Pool Size value.

Downloads

| Note |

|---|

...

Important Steps to Successfully Reuse Pipelines

|

| Attachments | ||

|---|---|---|

|
