
You can deploy Groundplex nodes in a Kubernetes environment and enable elastic scaling of resources for Groundplex nodes by completing the steps in this tutorial.

Kubernetes (K8s) is a container-orchestration system for automating application deployment, scaling, and management. In Kubernetes, a Pod is a group of one or more containers that share storage and network resources. In the SnapLogic implementation of elastic scaling, each Groundplex node is represented by a Pod. The implementation uses the Horizontal Pod Autoscaler (HPA), a Kubernetes API resource that automatically scales the number of Pods in a deployment based on the observed CPU and memory utilization, so the number of Pods available in the cluster depends on the load. Scaling on other types of metrics requires a monitoring application such as Prometheus with the Prometheus Adapter.
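The scaling decision follows a rule documented for the Kubernetes HPA: desired replicas = ceil(current replicas × current metric value / target metric value). The following is a minimal shell sketch of that rule, assuming whole-number metric values and ignoring the tolerance band, stabilization windows, and replica limits that the real controller also applies. The function name desired_replicas is hypothetical and is used only for illustration.

```shell
# Hypothetical helper illustrating the documented HPA ratio rule.
# Usage: desired_replicas CURRENT_REPLICAS CURRENT_METRIC TARGET_METRIC
desired_replicas() {
  local current_replicas=$1 current_metric=$2 target_metric=$3
  # Integer ceiling of (current_replicas * current_metric) / target_metric.
  echo $(( (current_replicas * current_metric + target_metric - 1) / target_metric ))
}

# 2 Pods averaging 90% CPU against a 50% target: ceil(2 * 90 / 50) = 4
desired_replicas 2 90 50   # prints 4
```

Because the result is rounded up, any sustained load above the target adds at least one Pod, while load well below the target lets the count shrink.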

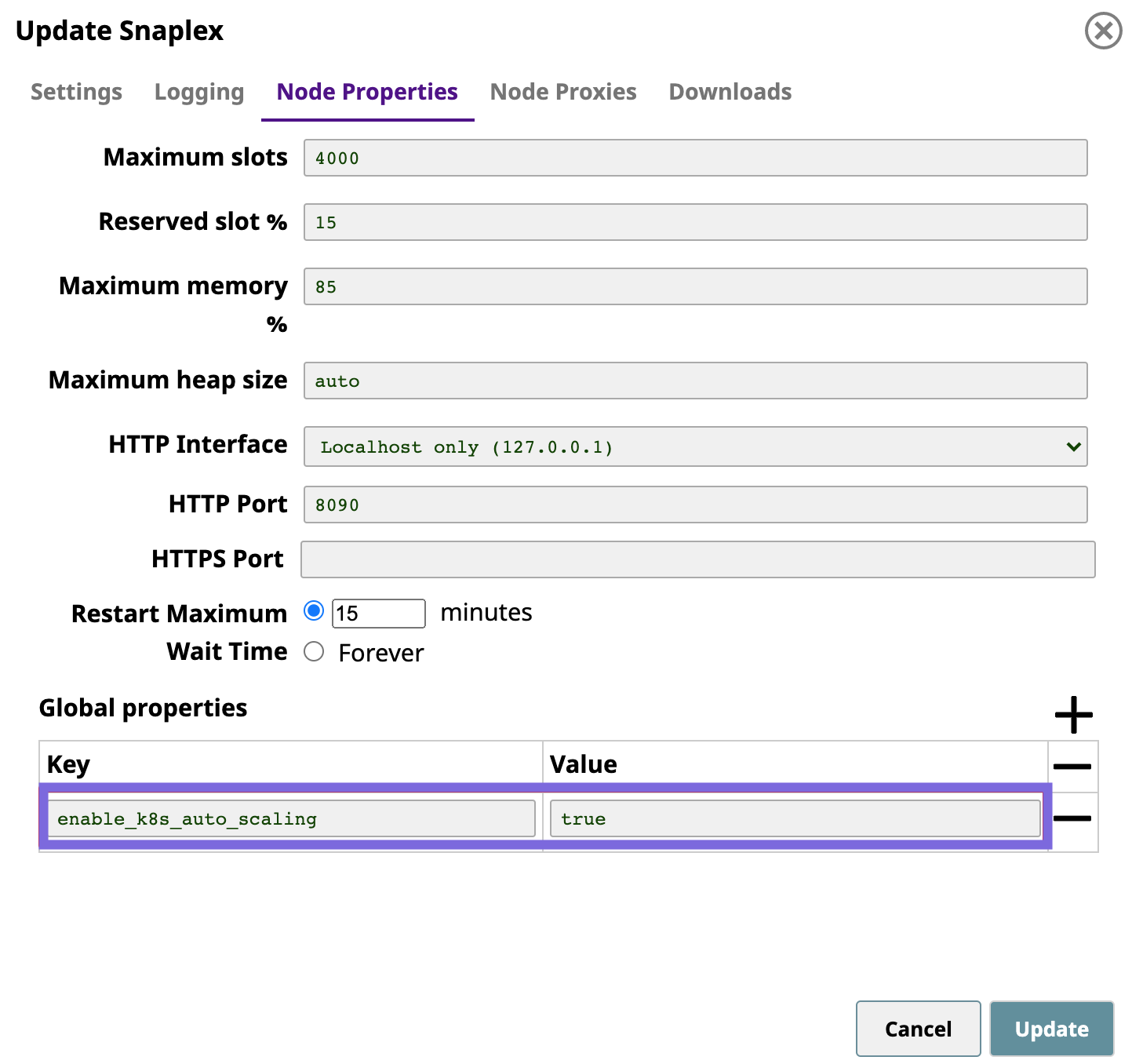

Click the Nodes tab, and add the following entries to the Global Properties:

| Key | Value |
|---|---|
| enable_k8s_auto_scaling | true |

The following screenshot shows the added setting:

You can now set up the Metrics Server software.

$ kubectl get deployment metrics-server -n kube-system

Output for a successful installation:

    NAME             READY   UP-TO-DATE   AVAILABLE   AGE
    metrics-server   1/1     1            1           6d20h
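If you want to script this check rather than read the output by eye, one possible approach is to compare the two halves of the READY column. The sketch below assumes kubectl is already configured for the target cluster; deployment_is_ready is a hypothetical helper name, not part of kubectl or SnapLogic.

```shell
# Hypothetical helper: succeeds when every desired replica of a Deployment is ready.
# Usage: deployment_is_ready NAME NAMESPACE
# Example: deployment_is_ready metrics-server kube-system && echo "ready"
deployment_is_ready() {
  local ready
  # The second column of `kubectl get deployment` is READY, formatted as ready/desired.
  ready=$(kubectl get deployment "$1" -n "$2" --no-headers | awk '{print $2}')
  # Succeed only if the value before the slash equals the value after it.
  [ -n "$ready" ] && [ "${ready%/*}" = "${ready#*/}" ]
}
```

The function returns a non-zero status when the Deployment is missing or still starting, so it can gate later installation steps in a script.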

You must install and set up the Prometheus software and adapter to track custom metrics. SnapLogic uses the kube-prometheus-stack package from the following sources:

The following example shows a custom_values.yaml file for the chart:

    prometheus:
      service:
        type: LoadBalancer
        port: 80
        # AWS EKS specific setting
        annotations:
          service.beta.kubernetes.io/aws-load-balancer-internal: "true"
      prometheusSpec:
        # Expose additional targets which can be discovered by Prometheus
        additionalScrapeConfigs:
          - job_name: 'snaplogic-snaplex-hpa-jcc-autoscale-metrics'
            scrape_interval: 15s
            metrics_path: '/autoscale_metrics' # default is metrics
            kubernetes_sd_configs:
              - role: pod
                namespaces:
                  names:
                    - default
                    # If you install the Snaplex in your own namespace, you have to provide here.
                    # - snaplogic
            scheme: https
            tls_config:
              insecure_skip_verify: true
            relabel_configs:
              - source_labels: [__meta_kubernetes_namespace]
                action: replace
                target_label: namespace
              - source_labels: [__meta_kubernetes_pod_name]
                action: replace
                target_label: pod
              - source_labels: [__address__]
                action: replace
                regex: ([^:]+)(?::\d+)?
                replacement: ${1}:8081
                target_label: __address__
              - source_labels: [__meta_kubernetes_pod_label_app_kubernetes_io_type]
                action: keep
                regex: jcc # Name for the Pod to match
    grafana:
      # set 'enabled: true' if you want to enable the Grafana dashboard
      enabled: false
      service:
        type: LoadBalancer
        port: 80
        # AWS EKS specific setting
        annotations:
          service.beta.kubernetes.io/aws-load-balancer-internal: "true"
    alertmanager:
      enabled: false

You can enable the Grafana dashboard in the chart.

To use your own namespace, add the namespace entry to the prometheusSpec.additionalScrapeConfigs.kubernetes_sd_configs.namespaces.names field. In the custom_values.yaml file, the snaplogic namespace is already listed but commented out. To use snaplogic as your namespace, uncomment the - snaplogic line:

    # If you install the Snaplex in your own namespace, you have to provide here.
    # - snaplogic

Install Prometheus software by deploying the following Helm chart.

    NAME_SPACE=monitoring
    PROMETHEUS_VERSION=14.0.1
    PROM_RELEASE_NAME=prometheus

    # create namespace
    kubectl create namespace $NAME_SPACE

    # install Prometheus
    helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
    helm install $PROM_RELEASE_NAME prometheus-community/kube-prometheus-stack \
      --version $PROMETHEUS_VERSION \
      --namespace $NAME_SPACE \
      -f custom_values.yaml

Verify that the Prometheus and Grafana services are running by using the following command.

$ kubectl get all -n monitoring

    NAME                                                         READY   STATUS    RESTARTS   AGE
    pod/alertmanager-prometheus-kube-prometheus-alertmanager-0   2/2     Running   0          19m
    pod/prometheus-grafana-6f5448f95b-68zdt                      2/2     Running   0          19m
    pod/prometheus-kube-prometheus-operator-8556f58759-tlxb8     1/1     Running   0          19m
    pod/prometheus-kube-state-metrics-6bfcd6f648-ckzf6           1/1     Running   0          19m
    pod/prometheus-prometheus-kube-prometheus-prometheus-0       2/2     Running   1          19m
    pod/prometheus-prometheus-node-exporter-fgc5x                1/1     Running   0          19m
    pod/prometheus-prometheus-node-exporter-r7dgf                1/1     Running   0          19m
    pod/prometheus-prometheus-node-exporter-rd8pz                1/1     Running   0          19m

    NAME                                            TYPE           CLUSTER-IP       EXTERNAL-IP                                 PORT(S)                      AGE
    service/alertmanager-operated                   ClusterIP      None             <none>                                      9093/TCP,9094/TCP,9094/UDP   19m
    service/prometheus-grafana                      LoadBalancer   10.100.153.99    internal-xxxx.us-west-2.elb.amazonaws.com   80:30324/TCP                 19m
    service/prometheus-kube-prometheus-operator     ClusterIP      10.100.234.140   <none>                                      443/TCP                      19m
    service/prometheus-kube-prometheus-prometheus   LoadBalancer   10.100.20.88     internal-xxxx.us-west-2.elb.amazonaws.com   80:31644/TCP                 19m
    service/prometheus-kube-state-metrics           ClusterIP      10.100.184.76    <none>                                      8080/TCP                     19m
    service/prometheus-operated                     ClusterIP      None             <none>                                      9090/TCP                     19m
    service/prometheus-prometheus-node-exporter     ClusterIP      10.100.216.232   <none>                                      9100/TCP                     19m

    NAME                                                 DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    daemonset.apps/prometheus-prometheus-node-exporter   3         3         3       3            3           <none>          19m

    NAME                                                  READY   UP-TO-DATE   AVAILABLE   AGE
    deployment.apps/prometheus-grafana                    1/1     1            1           19m
    deployment.apps/prometheus-kube-prometheus-operator   1/1     1            1           19m
    deployment.apps/prometheus-kube-state-metrics         1/1     1            1           19m

    NAME                                                             DESIRED   CURRENT   READY   AGE
    replicaset.apps/prometheus-grafana-6f5448f95b                    1         1         1       19m
    replicaset.apps/prometheus-kube-prometheus-operator-8556f58759   1         1         1       19m
    replicaset.apps/prometheus-kube-state-metrics-6bfcd6f648         1         1         1       19m

    NAME                                                                    READY   AGE
    statefulset.apps/alertmanager-prometheus-kube-prometheus-alertmanager   1/1     19m
    statefulset.apps/prometheus-prometheus-kube-prometheus-prometheus       1/1     19m

Install the Prometheus Adapter by running the following command:

    NAME_SPACE=monitoring
    ADAPTER_VERSION=2.12.1
    ADAPTER_RELEASE_NAME=prometheus-adapter

    # install Prometheus Adapter
    helm install $ADAPTER_RELEASE_NAME prometheus-community/prometheus-adapter \
      --version $ADAPTER_VERSION \
      --namespace $NAME_SPACE \
      -f adapter_custom_values.yaml


Create the adapter_custom_values.yaml file and apply it during the Helm Chart installation:

    prometheus:
      # Run `kubectl get svc -n namespace` to get the url of Prometheus
      url: http://prometheus-kube-prometheus-prometheus.monitoring.svc
      # Prometheus's port. Currently it is LoadBalancer with 80 default.
      port: 80
    replicas: 1
    rules:
      # Whether to enable the default rules
      default: false
      # Add custom metrics API here
      custom:
        # plex_queue_size API
        - seriesQuery: 'plex_queue_size'
          resources:
            overrides:
              namespace: {resource: "namespace"}
              pod: {resource: "pod"}
          name:
            matches: ^(.*)
            as: "plex_queue_size"
          metricsQuery: <<.Series>>{<<.LabelMatchers>>}

Verify the Prometheus Adapter installation by running the following command:

$ kubectl get --raw /apis/custom.metrics.k8s.io/v1beta1 | jq .

    {
      "kind": "APIResourceList",
      "apiVersion": "v1",
      "groupVersion": "custom.metrics.k8s.io/v1beta1",
      "resources": [
        {
          "name": "pods/plex_queue_size",
          "singularName": "",
          "namespaced": true,
          "kind": "MetricValueList",
          "verbs": [
            "get"
          ]
        },
        {
          "name": "namespaces/plex_queue_size",
          "singularName": "",
          "namespaced": false,
          "kind": "MetricValueList",
          "verbs": [
            "get"
          ]
        }
      ]
    }
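Once the adapter lists pods/plex_queue_size, the per-Pod values can be read from the same custom metrics API by requesting the metric for all Pods in a namespace. The sketch below only builds the request path; custom_metric_path is a hypothetical helper name, and the default namespace is an assumption that should match wherever the Snaplex Pods run.

```shell
# Hypothetical helper: builds the custom metrics API path for a per-Pod metric.
# Usage: custom_metric_path NAMESPACE METRIC
custom_metric_path() {
  # "*" selects every Pod in the namespace.
  printf '/apis/custom.metrics.k8s.io/v1beta1/namespaces/%s/pods/*/%s\n' "$1" "$2"
}

# Example (needs cluster access and jq, so it is left commented out):
# kubectl get --raw "$(custom_metric_path default plex_queue_size)" | jq .
```

An empty items list in the response usually means Prometheus has not yet scraped any Pod that matches the scrape job, rather than a problem with the adapter rule itself.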

Access the Grafana UI through the IP addresses under the EXTERNAL-IP column from Step 2 of Installing the Prometheus Application.
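As an alternative to reading the EXTERNAL-IP column by eye, the address can be pulled from the Service status with a JSONPath query. This is a sketch under the assumption that the load balancer reports a hostname, as AWS load balancers do; service_external_host is a hypothetical helper name.

```shell
# Hypothetical helper: prints the load balancer hostname assigned to a Service.
# Usage: service_external_host SERVICE NAMESPACE
# Example: service_external_host prometheus-grafana monitoring
service_external_host() {
  # Assumption: the provider fills in .hostname (AWS). Other providers may
  # populate .status.loadBalancer.ingress[0].ip instead.
  kubectl get service "$1" -n "$2" \
    -o jsonpath='{.status.loadBalancer.ingress[0].hostname}'
}
```

The command prints nothing while the load balancer is still being provisioned, so an empty result is worth retrying before treating it as a failure.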

You can also use the |

targetAvgCPUUtilization: 50

Where 50 is 50%.

targetAvgMemoryUtilization: 50

Where 50 is 50%.

$ helm install -n snaplogic <helm_chart_folder>

Verify the Helm Chart installation by running the following command.

    $ kubectl get all -n snaplogic
    NAME                                                                 READY   STATUS    RESTARTS   AGE
    pod/<helm_chart_name>-snaplogic-snaplex-feedmaster-84ff4f48c-7rtpd   1/1     Running   0          12s
    pod/<helm_chart_name>-snaplogic-snaplex-jcc-66ddddcb76-ttdwz         1/1     Running   0          12s

    NAME                                                  TYPE       CLUSTER-IP       EXTERNAL-IP   PORT(S)          AGE
    service/<helm_chart_name>-snaplogic-snaplex-feed      NodePort   10.100.83.252    <none>        8084:30456/TCP   13s
    service/<helm_chart_name>-snaplogic-snaplex-regular   NodePort   10.100.140.124   <none>        8081:30182/TCP   13s

    NAME                                                             READY   UP-TO-DATE   AVAILABLE   AGE
    deployment.apps/<helm_chart_name>-snaplogic-snaplex-feedmaster   1/1     1            1           13s
    deployment.apps/<helm_chart_name>-snaplogic-snaplex-jcc          1/1     1            1           13s

    NAME                                                                       DESIRED   CURRENT   READY   AGE
    replicaset.apps/<helm_chart_name>-snaplogic-snaplex-feedmaster-84ff4f48c   1         1         1       13s
    replicaset.apps/<helm_chart_name>-snaplogic-snaplex-jcc-66ddddcb76         1         1         1       13s

    NAME                                                                          REFERENCE                                            TARGETS   MINPODS   MAXPODS   REPLICAS   AGE
    horizontalpodautoscaler.autoscaling/<helm_chart_name>-snaplogic-snaplex-hpa   Deployment/<helm_chart_name>-snaplogic-snaplex-jcc   10%/50%   1         5         0          13s

You can now monitor the scale up and scale down of your Groundplex node resources in Kubernetes. View an example of a completed Helm Chart in the next section.

The following Helm Chart shows example configuration values for Elastic Scaling of Snaplex instances. Download a sample template.

    # Default values for snaplogic-snaplex.
    # This is a YAML-formatted file.
    # Declare variables to be passed into your templates.

    # Regular nodes count
    jccCount: 1

    # Feedmaster nodes count
    feedmasterCount: 1

    # Docker image of SnapLogic snaplex
    image:
      repository: snaplogic/snaplex
      tag: latest

    # SnapLogic configuration link
    snaplogic_config_link: https://elastic.snaplogic.com/api/1/rest/plex/config/snaplogic/shared/Snaplex_Nimes?expires=1620176089&user_id=k8_admin%40snaplogic.com&_sl_authproxy_key=st3b2AEYY9tdOK4Bb%2B0axweQSDukAGrE5KHHGSMyEnk%3D

    # SnapLogic Org admin credential
    #snaplogic_secret: secret

    # Enhanced encryption secret
    #enhanced_encrypt_secret: secret

    # CPU and memory limits/requests for the nodes
    limits:
      memory: 8Gi
      cpu: 2000m
    requests:
      memory: 8Gi
      cpu: 2000m

    # JCC HPA
    autoscaling:
      enabled: true
      minReplicas: 2
      maxReplicas: 4
      # Average count of Snaplex queued pipelines (e.g. targetPlexQueueSize: 5), leave empty to disable
      # To enable this metric, Prometheus and Prometheus-Adapter are required to install.
      targetPlexQueueSize: 2
      # Average CPU utilization (e.g. targetAvgCPUUtilization: 50 means 50%), leave empty to disable.
      # To enable this metric, Kubernetes Metrics Server is required to install.
      targetAvgCPUUtilization: 80
      # Average memory utilization (e.g. targetAvgMemoryUtilization: 50 means 50%), leave empty to disable.
      # To enable this metric, Kubernetes Metrics Server is required to install.
      targetAvgMemoryUtilization: 70
      # window to consider waiting while scaling up. default is 0s if empty.
      scaleUpStabilizationWindowSeconds: 0
      # window to consider waiting while scaling down. default is 300s if empty.
      scaleDownStabilizationWindowSeconds: 300

    # grace period seconds after JCC termination signal before force shutdown, default is 30s if empty.
    terminationGracePeriodSeconds: 900
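Whatever replica count the autoscaler derives from the metrics, the minReplicas and maxReplicas values bound the result. A small sketch of that bounding step, using the sample values of 2 and 4 from the chart above; clamp_replicas is a hypothetical helper name used only for illustration.

```shell
# Hypothetical helper: bounds a computed replica count by minReplicas and maxReplicas.
# Usage: clamp_replicas DESIRED MIN MAX
clamp_replicas() {
  local desired=$1 min=$2 max=$3
  if [ "$desired" -lt "$min" ]; then desired=$min; fi
  if [ "$desired" -gt "$max" ]; then desired=$max; fi
  echo "$desired"
}

clamp_replicas 1 2 4   # prints 2: never fewer than minReplicas
clamp_replicas 7 2 4   # prints 4: never more than maxReplicas
```

With these sample values the Snaplex keeps at least two JCC Pods when idle and stops growing at four, even if the queue size or utilization targets would call for more.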

To understand the monitoring workflow for elastic scaling, see Monitoring your Snaplexes in a Kubernetes Environment.