Storage Integration (SI)

Storage Integration stores the Identity and Access Management (IAM) of the cloud storage (Amazon S3, Google Cloud Storage (GCS), or Microsoft Azure).

Snowflake object delegates authentication responsibility for external cloud storage (GCS) to a Cloud Storage service account. For accessing Cloud Storage buckets, Snowflake creates a service account that can be granted permissions to access the bucket(s) that store your data files. The SI object allows you to avoid supplying sensitive credentials when loading or unloading data into GCS. This object generates Google Service Account entity for your external GCS along with an optional set of allowed or blocked storage locations. However, the generated Google Service account needs to be granted permissions by Google Cloud platform IAM service.

Configure Storage Integration Object

Log into Snowflake using this URL: https://snaplogic.snowflakecomputing.com/console

Create a Snowflake Storage Integration Object. For example,

create storage integration JOHN_GCS_STORAGE_INTEGRATION1type = external_stagestorage_provider = gcsenabled = truestorage_allowed_locations = ('gcs://johnsnowflake1/', 'gcs://johnsnowflake2/');Describe the Snowflake storage integration object to get the GCP Service Account.

desc integration JOHN_GCS_STORAGE_INTEGRATION;Create a Custom Role.

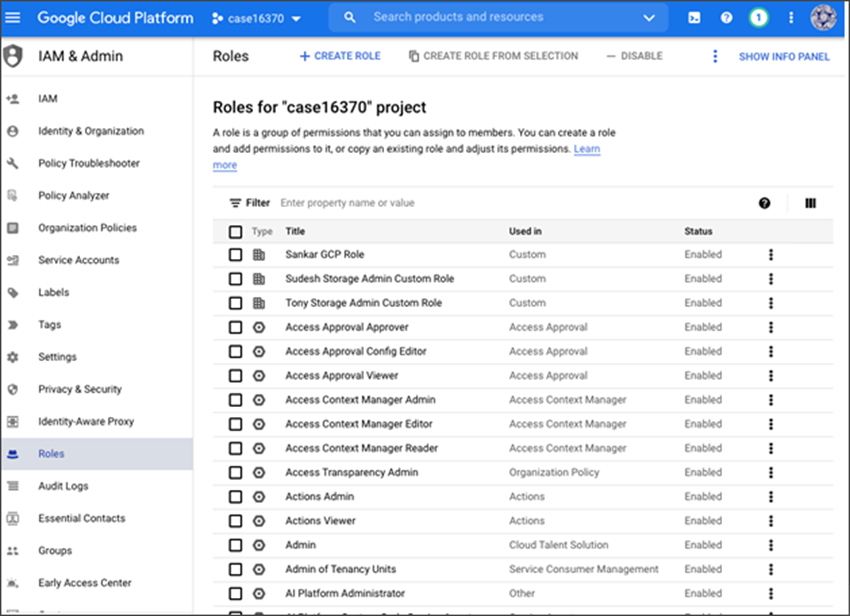

Navigate to IAM & Admin > Roles > +CREATE ROLE

Create a role with an appropriate name and add the following permissions:

firebase.projectsData loading only

storage.buckets.get

storage.

objects.get

storage.

objects.list

Data loading with purge option

storage.buckets.

get

storage.

objects.

delete

storage.

objects.

get

storage.

objects.list

storage.buckets.setIamPolicyData loading and unloading

storage.buckets.

get

storage.objects.create

storage.objects.delete

storage.objects.get

storage.objects.list

storage.objects.setIamPolicy

- storage.objects.update

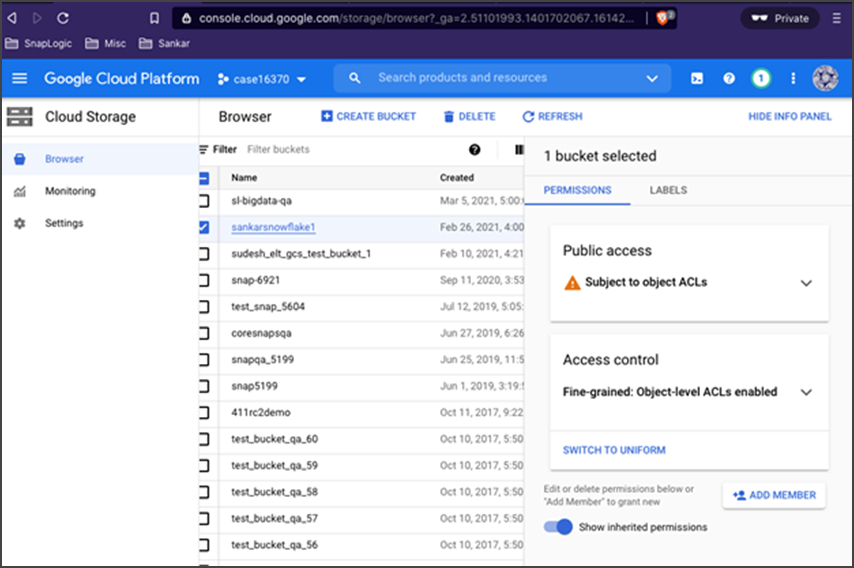

Provide permission on the Google Cloud Storage bucket to the Snowflake GCP Service Account.

Add the GCP service user and the custom role you have just created.

Bulk load the data to Snowflake.

COPY INTO "PUBLIC".JOHN_EMP2FROM 'gcs://johnsnowflake1/data/'FILES = ( 'john_emp1.csv' )FILE_FORMAT = (TYPE = CSV SKIP_HEADER = 1)STORAGE_INTEGRATION = JOHN_GCS_STORAGE_INTEGRATION;Unload the data from Snowflake table to a GCS location:

copy into COPY INTO 'gcs://johnsnowflake1/unload/'from "PUBLIC".JOHN_EMP2storage_integration = JOHN_GCS_STORAGE_INTEGRATIONfile_format = (format_name = snap_csv_format);

...