
...

Field Name | Field Type | Field Dependency | Description | ||||

|---|---|---|---|---|---|---|---|

Label* Default Value: Snowflake - Bulk Upsert | String | N/A | Specify the name for the instance. You can modify this to be more specific, especially if you have more than one of the same Snap in your Pipeline. | ||||

Schema Name Default Value: N/A | String/Expression/Suggestion | N/A | Specify the database schema name. If it is not defined, the suggestions for Table Name retrieve all table names from all schemas. This property is suggestible and retrieves the available database schemas when you request suggestions. The values can be passed using Pipeline parameters but not upstream parameters. | ||||

Table Name* Default Value: N/A | String/Expression/Suggestion | N/A | Specify the name of the table on which to execute the bulk load operation. The values can be passed using Pipeline parameters but not upstream parameters. | ||||

Staging location Default Value: Internal | Dropdown list/Expression | N/A | Select the type of staging location to use for data loading:

| ||||

File format object Default Value: None Example: | String/Expression/Suggestion | N/A | Specify an existing file format object to use for loading data into the table. The specified file format object determines the format type such as CSV, JSON, XML, AVRO, or other format options for data files. | ||||

File format type Default Value: None | Dropdown list | N/A | Specify a predefined file format object to use for loading data into the table. The available file formats include CSV, JSON, XML, and AVRO. | ||||

File format option Default value: N/A | String/Expression | N/A | Specify the file format option. Separate multiple options by using blank spaces and commas.

| ||||

Encryption type Default Value: None | Dropdown list | N/A | Specify the type of encryption to be used on the data. The available encryption options are:

The KMS Encryption option is available only for S3 Accounts (not for Azure Accounts) with Snowflake. If Staging Location is set to Internal and Data source is Input view, the Server Side Encryption and Server-Side KMS Encryption options are not supported for Snowflake Snaps. This is because Snowflake encrypts loading data in its internal staging area and does not allow the user to specify the type of encryption in the PUT API. Learn more: Snowflake PUT Command Documentation. | ||||

KMS key Default Value: N/A | String/Expression | N/A | Specify the KMS key that you want to use for S3 encryption. Learn more about the KMS key: AWS KMS Overview and Using Server Side Encryption. This property applies only when you select Server-Side KMS Encryption in the Encryption Type field above. | ||||

Buffer size (MB) Default Value: 10 MB | String/Expression | N/A | Specify the amount of data, in MB, to be loaded into the S3 bucket at a time. This property is required when bulk loading to Snowflake using AWS S3 as the external staging area. Minimum value: 6 MB. Maximum value: 5000 MB. S3 allows a maximum of 10000 parts to be uploaded, so configure this property accordingly to optimize the bulk load. Refer to Upload Part for more information on uploading to S3. | ||||

Key columns* Default Value: None | Specify the column(s) used to check for existing entries in the target table.

| ||||||

Delete Upsert Condition Default Value: None | String | N/A | Specify a condition that, when true, causes the delete case to be executed, that is, the Snap deletes the matching records from the target table. | ||||

Preserve case sensitivity Default Value: Deselected | Checkbox | N/A | Select this check box to preserve the case sensitivity of the column names.

| ||||

Manage Queued Queries Default Value: Continue to execute queued queries when the Pipeline is stopped or if it fails | Dropdown list | N/A | Select an option to determine whether the Snap continues or cancels the execution of the queued Snowflake Execute SQL queries when you stop the Pipeline. If you select Cancel queued queries when the Pipeline is stopped or if it fails, the read queries under execution are canceled, whereas the write queries under execution are not canceled. Snowflake internally determines which queries are safe to cancel and cancels those queries. | ||||

Load empty strings Default value: Selected | Checkbox | N/A | If selected, empty string values in the input documents are loaded as empty strings to the string-type fields. Otherwise, empty string values in the input documents are loaded as null. Null values are loaded as null regardless. | ||||

Additional Options | |||||||

On Error Default Value: ABORT_STATEMENT | Dropdown list | N/A | Select an action to perform when errors are encountered in a file. The available actions are:

| ||||

Error Limit Default Value: 0 | Integer | Appears when you select SKIP_FILE_*error_limit* for On Error. | Specify the error limit for skipping a file. The file is skipped when the number of errors in it exceeds the specified limit and SKIP_FILE_number is selected for On Error. | ||||

Error Percentage Limit Default Value: 0 | Integer | Appears when you select SKIP_FILE_*error_percent_limit*% for On Error. | Specify the percentage of errors for skipping a file. The file is skipped when the percentage of errors in it exceeds the specified percentage and SKIP_FILE_number% is selected for On Error. | ||||

Snap Execution Default Value: Execute only | Dropdown list | N/A | Select one of the three modes in which the Snap executes. Available options are:

| ||||
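The Buffer size (MB) constraint described in the table reduces to simple arithmetic: with at most 10000 parts per upload, the largest upload that fits is roughly the buffer size multiplied by 10000. The following is a minimal Python sketch of that calculation, assuming each buffer flush becomes exactly one uploaded part. The function and constant names are hypothetical and are not part of the Snap.

```python
import math

MIN_BUFFER_MB = 6      # minimum value documented for Buffer size (MB)
MAX_BUFFER_MB = 5000   # maximum value documented for Buffer size (MB)
MAX_S3_PARTS = 10000   # maximum number of parts S3 allows, as stated above


def smallest_buffer_mb(expected_upload_mb: float) -> int:
    """Return the smallest buffer size (MB) that keeps the upload within the part limit.

    Assumption (not confirmed by the Snap documentation): each buffer flush
    is uploaded as exactly one part.
    """
    needed = math.ceil(expected_upload_mb / MAX_S3_PARTS)
    if needed > MAX_BUFFER_MB:
        raise ValueError("upload is too large for the documented buffer size range")
    # Never go below the documented minimum.
    return max(MIN_BUFFER_MB, needed)
```

Under this assumption, the default buffer size of 10 MB would cover uploads of up to about 100000 MB; larger loads would need a proportionally larger buffer.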

...

Error | Reason | Resolution |

|---|---|---|

Cannot lookup a property on a null value. | The value referenced in the Key Column field is null. This Snap does not support values from an upstream input document in the Key columns field when the expression button is enabled. | Update the Snap settings to use an input value from Pipeline parameters and run the Pipeline again. |

Example

Upserting Records

This example Pipeline demonstrates how you can efficiently update and delete data (rows) using the Key columns field and the Delete Upsert Condition field. We use the Snowflake - Bulk Upsert Snap to accomplish this task.

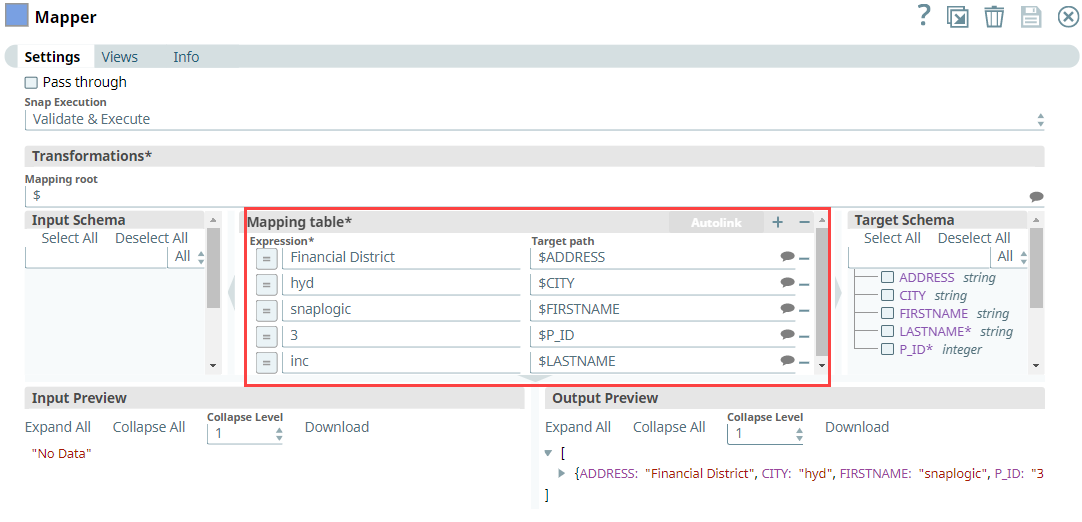

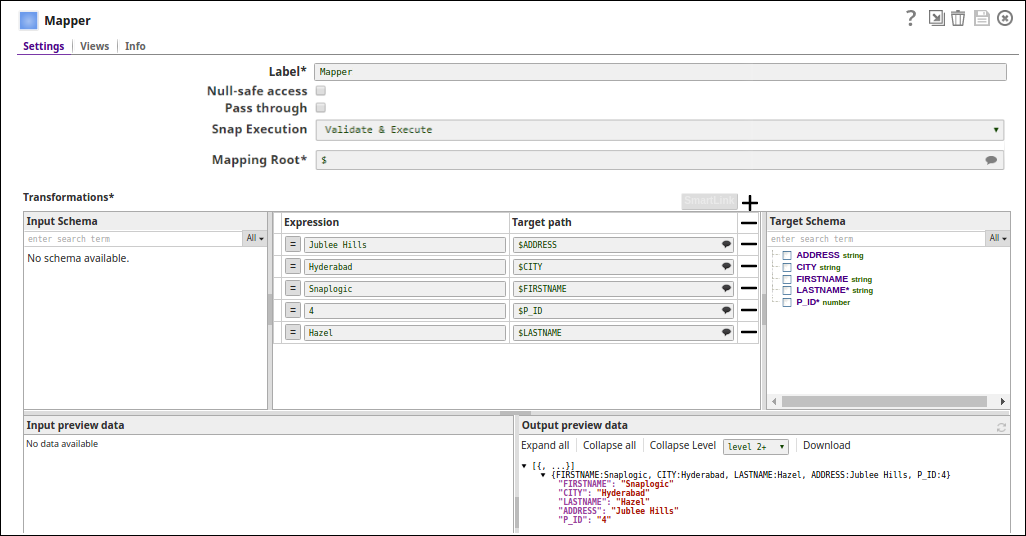

First, we configure the Mapper Snap with the required details to pass them as inputs to the downstream Snap.

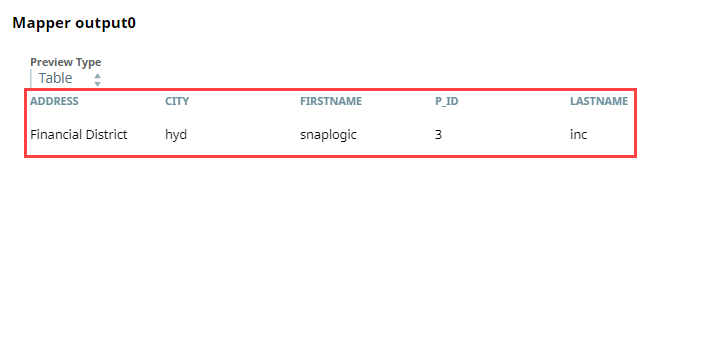

After validation, the Mapper Snap prepares the output as shown below to pass to the Snowflake - Bulk Upsert Snap.

Next, we configure the Snowflake - Bulk Upsert Snap to:

- Upsert the existing row for the P_ID column (so we provide P_ID in the Key columns field).

- Delete the rows where FIRSTNAME is snaplogic in the target table (so we specify FIRSTNAME = 'snaplogic' in the Delete Upsert Condition field).
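The configuration above can be illustrated with a small in-memory simulation. This is only a sketch of the intended semantics, not the Snap's implementation: it assumes the delete condition is evaluated against the incoming row, and that rows with no match on the key column are inserted. The `bulk_upsert` function and all sample values other than P_ID, FIRSTNAME, and 'snaplogic' are hypothetical.

```python
from typing import Callable, Dict, Iterable

Row = Dict[str, object]


def bulk_upsert(
    target: Dict[object, Row],
    incoming: Iterable[Row],
    key_column: str,
    delete_condition: Callable[[Row], bool],
) -> Dict[object, Row]:
    """Apply incoming rows to a copy of target, which is keyed by key_column."""
    result = {key: dict(row) for key, row in target.items()}
    for row in incoming:
        key = row[key_column]
        if key in result:
            if delete_condition(row):
                del result[key]           # matched and condition true: delete
            else:
                result[key].update(row)   # matched: update the existing row
        else:
            result[key] = dict(row)       # not matched: insert (assumption)
    return result


# Hypothetical sample data for illustration only.
target = {
    1: {"P_ID": 1, "FIRSTNAME": "old"},
    2: {"P_ID": 2, "FIRSTNAME": "keep"},
}
incoming = [
    {"P_ID": 1, "FIRSTNAME": "snaplogic"},  # matches key, condition true
    {"P_ID": 2, "FIRSTNAME": "updated"},    # matches key, condition false
    {"P_ID": 3, "FIRSTNAME": "new"},        # no match on key
]
after = bulk_upsert(
    target, incoming, "P_ID", lambda r: r["FIRSTNAME"] == "snaplogic"
)
```

In this sketch, the row with P_ID 1 is removed, the row with P_ID 2 is updated, and the row with P_ID 3 is added, while the original `target` dictionary is left unchanged.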

...

After execution, this Snap writes the new record values into the existing row identified by the P_ID key column in Snowflake.

Inserted Records Output in JSON | Inserted Records in Snowflake |

|---|---|

Upon execution, if the Delete Upsert Condition is true, the Snap deletes the records in the target table as shown below.

Output in JSON | Deleted Record in Snowflake |

|---|---|

Bulk Loading Records

In the following example, we update a record using the Snowflake Bulk Upsert Snap. Invalid records that cannot be inserted are routed to an error view.

...

The Mapper (Data) Snap maps the record details to the input fields of the Snowflake Upsert Snap:

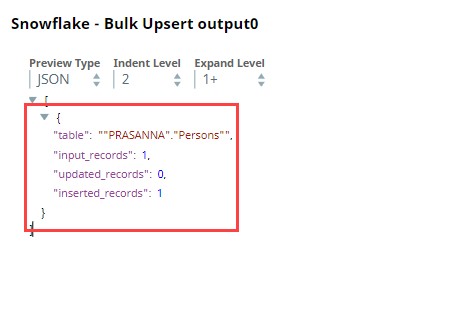

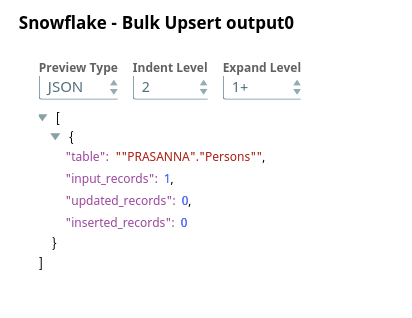

The Snowflake Upsert Snap updates the record using the "PRASANNA"."Persons" table object:

Successful execution of the Pipeline displays the following data preview. Note that one input was passed and two records matching P_ID were updated in the Persons table:

...
