
...

Snap type: | Write | |||||||||

|---|---|---|---|---|---|---|---|---|---|---|

Description: | This Snap converts documents into the ORC format and writes the data to HDFS, S3, or the local file system.

| |||||||||

| Prerequisites: | [None]

| |||||||||

| Support and limitations: | Ultra pipelines: Supported for use

| |||||||||

| Account: | Accounts are not used with this Snap. The ORC Writer works with the following accounts: | |||||||||

| Views: |

| |||||||||

Settings | ||||||||||

Label

| Required. The name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your pipeline. Default Value: ORC Writer | |||||||||

Directory

| Required. The URL for the HDFS directory. It should start with the hdfs or webhdfs file protocol in the form of: The path to a directory from which you want the ORC Reader Snap to read data. All files within the directory must be ORC formatted. Basic directory URI structure

The Directory property is not used in the pipeline execution or preview, and is used only in the Suggest operation. When you press the Suggest icon, the Snap displays a list of subdirectories under the given directory. It generates the list by applying the value of the Filter property.

| The glob pattern is used to display a list of directories or files when the Suggest icon is pressed in the Directory or File property. The complete glob pattern is formed by combining the value of the Directory property and the Filter property. If the value of the Directory property does not end with "/", the Snap appends one so that the value of the Filter property is applied to the directory specified by the Directory property. The following rules are used to interpret glob patterns:

|

Default value: hdfs://<hostname>:<port>/ | |||||||

Filter |

| |||||||||
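As a rough illustration of how the glob pattern for the Suggest operation is assembled from the Directory and Filter values, here is a minimal Python sketch. The function name `build_glob_pattern` and the sample host, port, and filter values are hypothetical. It only models the documented join behavior and does not attempt to reproduce the Snap's glob interpretation rules or its actual implementation.

```python
def build_glob_pattern(directory: str, filter_value: str) -> str:
    """Combine the Directory and Filter values into one glob pattern.

    Assumption: a plain string join models the documented behavior;
    the Snap's real logic is not shown on this page.
    """
    # Append a "/" when the Directory value does not already end with one,
    # so that the filter applies inside that directory.
    if not directory.endswith("/"):
        directory += "/"
    return directory + filter_value


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(build_glob_pattern("hdfs://namenode.example.com:8020/data", "*.orc"))
    print(build_glob_pattern("hdfs://namenode.example.com:8020/data/", "*.orc"))
```

Both calls yield the same pattern, because the separator is added only when it is missing.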

File | Required for standard mode. Filename or a relative path to a file under the directory given in the Directory property. It should not start with a URL separator "/". The File property can be a JavaScript expression, which is evaluated with values from the input view document. When you press the Suggest icon, the Snap displays a list of regular files under the directory in the Directory property. It generates the list by applying the value of the Filter property. Use Hive tables if your input documents contain complex data types, such as maps and arrays. Example:

Default value: [None] | |||||||||
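The constraint on the File value (a relative path that must not start with "/") can be sketched as a small check. This is a hedged Python illustration, not the Snap's code: the function name `resolve_output_path` is hypothetical, and joining Directory and File with a single "/" is an assumption made for the example.

```python
def resolve_output_path(directory: str, file_value: str) -> str:
    """Validate a File value and join it to the Directory value.

    Assumption: the two values are joined with exactly one "/";
    the page does not state how the Snap builds the final path.
    """
    if not file_value:
        raise ValueError("File is required in standard mode")
    # The File value is relative to Directory, so a leading "/" is rejected.
    if file_value.startswith("/"):
        raise ValueError('File must not start with the URL separator "/"')
    if not directory.endswith("/"):
        directory += "/"
    return directory + file_value


if __name__ == "__main__":
    # Hypothetical host, port, and file name.
    print(resolve_output_path("hdfs://namenode.example.com:8020/tmp", "orc-output/part-0.orc"))
```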

File action | Required. Select an action to take when the specified file already exists in the directory. Note that the Append file action is supported only for the SFTP, FTP, and FTPS protocols. Default value: [None] | |||||||||

File permissions for various users | Set the user and desired permissions. Default value: [None]

| |||||||||

Hive Metastore URL

| This setting is used together with the Database and Table settings to define the schema. If the data being written has a Hive schema, the Snap can be configured to read that schema instead of requiring you to enter it manually. Set the value to a Hive Metastore URL where the schema is defined. Default value: [None] | |||||||||

Database | The Hive Metastore database where the schema is defined. See the Hive Metastore URL setting for more information.

| |||||||||

Table | The table in the Hive Metastore database from which the schema must be read. See the Hive Metastore URL setting for more information. | |||||||||

Compression | Required. The compression type to be used when writing the file. | |||||||||

Column paths | Paths where the column values appear in the document. This property is required if the Hive Metastore URL property is empty. Examples:

Default value: [None]

| |||||||||
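The relationship between the Hive Metastore URL, Database, Table, and Column paths settings can be summarized in a short Python sketch. Everything here is illustrative: the function name `choose_schema_source`, the sample Metastore URL, and the placeholder column path are hypothetical, and treating Database and Table as mandatory whenever a Metastore URL is given is an assumption drawn from the setting descriptions rather than confirmed Snap behavior.

```python
from typing import Optional, Sequence


def choose_schema_source(
    hive_metastore_url: Optional[str],
    database: Optional[str],
    table: Optional[str],
    column_paths: Sequence[str],
) -> str:
    """Return which settings would supply the schema.

    Assumption: Database and Table must accompany the Metastore URL.
    """
    if hive_metastore_url:
        if not (database and table):
            raise ValueError("Database and Table are needed to locate the schema")
        return "hive_metastore"
    # Documented rule: Column paths is required when the URL is empty.
    if not column_paths:
        raise ValueError("Column paths is required when Hive Metastore URL is empty")
    return "column_paths"


if __name__ == "__main__":
    # Hypothetical Metastore URL; table and database names come from the
    # examples on this page.
    print(choose_schema_source("thrift://metastore.example.com:9083", "masterdb", "employee_orc", []))
    # Placeholder column path; the real path syntax is not shown here.
    print(choose_schema_source(None, None, None, ["<column path>"]))
```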

Execute during preview | Enables you to execute the Snap during the Save operation so that the output view can produce the preview data. Default value: Not selected

| |||||||||

Troubleshooting

- Use Hive tables if your input documents contain complex data types such as maps and arrays.

- The Snap can only write data into HDFS.

- When executed in SnapReduce mode, the value of the File setting specifies the output directory of the MapReduce job.

|

| |||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|


Examples

...

| Expand | ||

|---|---|---|

| ||

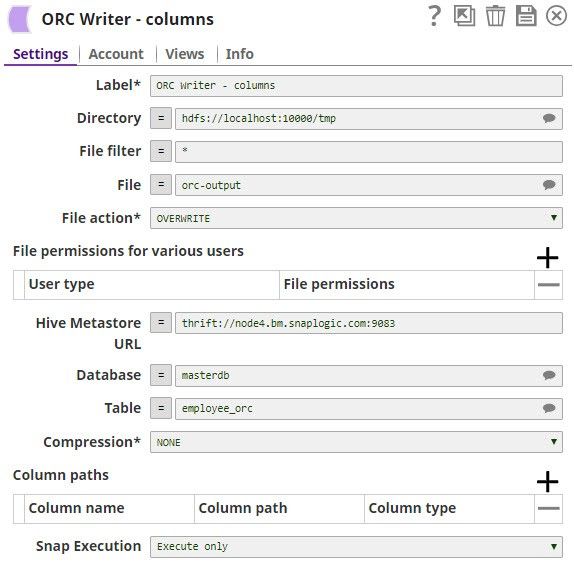

ORC Writer Writing to an HDFS InstanceHere is an example of a ORC Writer configured to write to a local instance of HDFS. The output is written to /tmp/orc-output. The Hive Metastore used reads the schema from the employee_orc table from the masterdb database. No column paths or compression are used. For an example of the Schema, see the documentation on the Schema setting. |

| Expand | ||

|---|---|---|

| ||

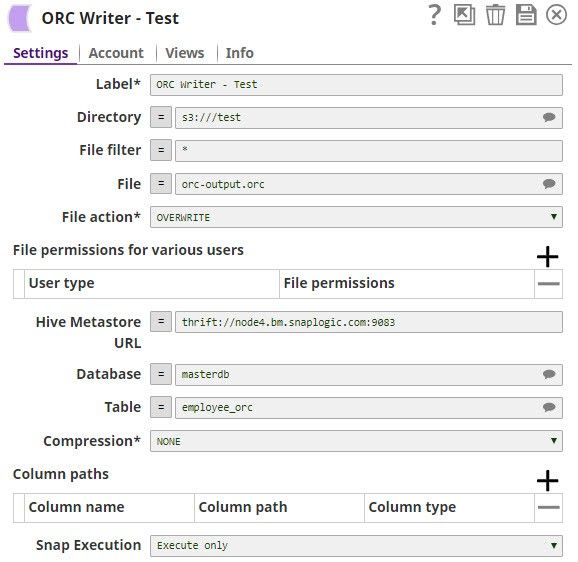

ORC Writer Writing to an S3 InstanceHere is an example of a ORC Writer configured to write to a local instance of |

...

S3. The output is written to /tmp/orc-output. The Hive Metastore used reads the schema from the employee_orc table from the masterdb database. No column paths or compression are used. For an example of the Schema, see the documentation on the Schema setting. |

...

...

...

See Also

Related Information

Read more about ORC at the Apache project's website, https://orc.apache.org/

...

| title | Snap History |

|---|

...
