...


Overview

...

Snap type:

Read

...

Description:

...

You can use the Snowflake - Unload Snap to unload the result of a query to a file or files stored on the Snowflake stage,

...

Google Cloud Storage, an external S3 workspace, or on Azure Storage Blob

...

if required. The target Snowflake table is not modified. Once the data is unloaded to the storage,

...

you can get the file either using Snowflake GET or an S3

...

Expected upstream Snaps: Any Snap with a document output view

Expected downstream Snaps: Any Snap with a document input view, such as JSON Formatter, Mapper, and so on. The CSV Formatter Snap cannot be connected directly to this Snap since the output document map data is not flat.

Expected input: Key-value map data to evaluate expression properties of the Snap

Expected output: Document with the Snowflake response, the query command, and the location URL for the unloaded file.

| Note |

|---|

| The Snap behaves the same on a Groundplex as it does in a Cloudplex. |

...

File Reader Snap.

| Info |

|---|

Snowflake lets you use cross-account IAM access for external staging. You can set up cross-account access through the Storage Integration option. With this setup, you do not need to pass any credentials; you access the storage using only the named stage or integration object. Learn more: Configuring Cross Account IAM Role Support for Snowflake Snaps. |

...

Snap Type

The Snowflake - Unload Snap is a Read-type Snap.

Prerequisites


Security Prerequisites

...

You should have the following permission in your Snowflake account to execute this Snap:

Usage (DB and Schema): Privilege to use database, role,

...

and schema.

The following commands enable minimum privileges in the Snowflake Console:

| Code Block |

|---|

grant usage on database <database_name> to role <role_name>;

grant usage on schema <database_name>.<schema_name> to role <role_name>; |

For more information on Snowflake privileges, refer to Access Control Privileges.
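To confirm that the grants above took effect, one option is to list the privileges held by the role. This is only a sketch; `<role_name>` is the same placeholder used in the grant statements.

```sql
-- Lists every privilege granted to the role, including the USAGE grants above.
show grants to role <role_name>;
```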

The following are mandatory when using an external staging location:

When using an Amazon S3 bucket for storage:

The Snowflake account should contain S3 Access-key ID, S3 Secret key, S3 Bucket and S3 Folder.

The Amazon S3 bucket where Snowflake writes the output files must reside in the same region as your cluster.

When using a Microsoft Azure storage blob:

A working Snowflake Azure database account.

Internal SQL Commands

This Snap uses the COPY INTO command internally. It

...

enables unloading data from a table (or query) into one or more files in one of the following locations:

Named internal stage (or table/user stage).

Named external stage that references an external location, such as Amazon S3, Google Cloud Storage, or Microsoft Azure.

External location, such as Amazon S3, Google Cloud Storage, or Microsoft Azure.
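As a rough illustration of the three location types listed above, the statements below sketch what an unload with COPY INTO can look like. The stage names (`my_int_stage`, `my_ext_stage`), the bucket path, and the storage integration `my_s3_int` are hypothetical placeholders, and the exact statement the Snap generates may differ.

```sql
-- Hypothetical names throughout; adjust them to your own account.

-- 1. Named internal stage (a user stage is @~ and a table stage is @%<table_name>).
COPY INTO @my_int_stage/unload/company_
  FROM (SELECT * FROM public.company ORDER BY id)
  FILE_FORMAT = (TYPE = CSV);

-- 2. Named external stage that references Amazon S3, Google Cloud Storage, or Microsoft Azure.
COPY INTO @my_ext_stage/unload/company_
  FROM (SELECT * FROM public.company ORDER BY id)
  FILE_FORMAT = (TYPE = CSV);

-- 3. External location addressed directly, authenticated through a storage integration.
COPY INTO 's3://my-bucket/unload/company_'
  FROM (SELECT * FROM public.company ORDER BY id)
  STORAGE_INTEGRATION = my_s3_int
  FILE_FORMAT = (TYPE = CSV);
```

In each case the trailing `company_` acts as a filename prefix, so Snowflake may write several files that share it.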

...

- Works in Ultra Task Pipelines.

- Snowflake lets you use cross-account IAM access for external staging. You can set up cross-account access through the Storage Integration option. With this setup, you do not need to pass any credentials; you access the storage using only the named stage or integration object. For more details, see Configuring Cross Account IAM Role Support for Snowflake Snaps.

...

| Info |

|---|

Requirements for External Storage Location

The following are mandatory when using an external staging location:

When using an Amazon S3 bucket for storage:

When using a Microsoft Azure storage blob:

When using a Google Cloud Storage:

|

Support for Ultra Pipelines

Works in Ultra Pipelines. However, we recommend that you not use this Snap in an Ultra Pipeline.

Known Issues

Because of performance issues, all Snowflake Snaps now ignore

...

the Cancel queued queries when pipeline is stopped or if it fails

...

option for Manage Queued Queries, even when selected. Snaps behave as though the

...

default Continue to execute queued queries when the Pipeline is stopped or if it

...

fails option were selected.

...

This Snap uses account references created on the Accounts page of SnapLogic Manager to handle access to this endpoint. This Snap requires a Snowflake Account with S3 or Microsoft Azure properties. See Snowflake Account for information on setting up this type of account.

...

| Input | This Snap has at most one document input view. |

|---|---|

| Output | This Snap has at most one document output view. |

| Error | This Snap has at most one document error view and produces zero or more documents in the view. |

...

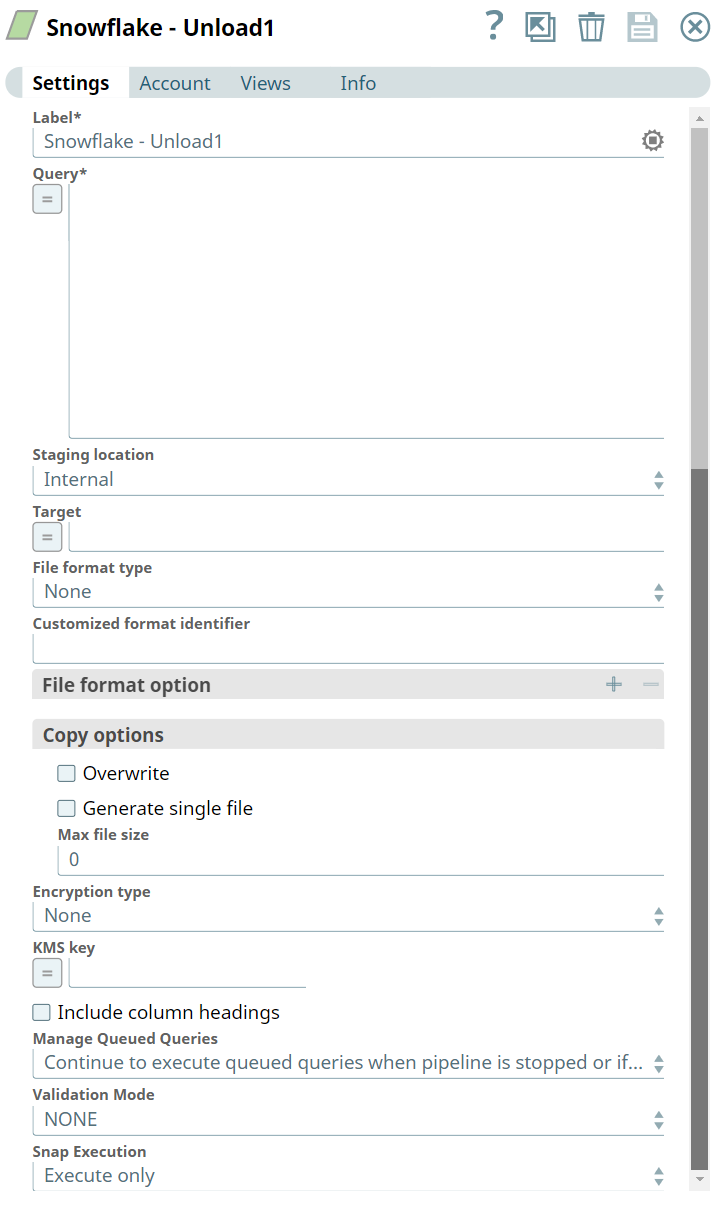

Settings

Label

...

Snap Views

Type | Format | Number of Views | Examples of Upstream and Downstream Snaps | Description |

|---|---|---|---|---|

Input | Document |

|

| A JSON document containing parameterized inputs for the Snap’s configuration, if needed. |

Output | Document |

|

| A JSON document containing the unload request details and the result of the unload operation. |

Error | Error handling is a generic way to handle errors without losing data or failing the Snap execution. You can handle the errors that the Snap might encounter while running the Pipeline by choosing one of the following options from the When errors occur list under the Views tab. The available options are:

Learn more about Error handling in Pipelines. | |||

Snap Settings

| Info |

|---|

|

Field Name | Field Type | Description |

|---|---|---|

Label* Default Value: Snowflake - Unload | String | Specify the name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your |

...

Pipeline. |

Query |

|---|

...

* Default Value: N/A | String/Expression | Specify a SELECT query. The results of the query are unloaded. In most cases, it is worthwhile to unload data in sorted order by specifying an ORDER BY clause in the query; this approach saves the time required to sort the data when it is reloaded. |

|---|

Example: SELECT * FROM public.company ORDER BY id

Default value: None

Staging Location

...

Staging location

| Dropdown list/Expression | Specify the staging location for the unload. The expected input for this should be a path to a file or filename-prefix. The options available include:

|

|---|---|---|

Target Default |

...

Target

...

Value: N/A | String/Expression | Specify the staging area where the unloaded files are placed. If the staging location is external, the files are placed under the S3 Bucket or Microsoft Azure Storage Blob specified for the Account. If the staging location is internal, the files are placed in the user’s home folder. |

|---|

...

File Format Type Default |

|---|

...

Value: |

|---|

...

The pre-defined storage integration is used to authenticate the external stages.

Default value: None

None | Dropdown list | The format type for the unloaded file. |

|---|

...

The options available are None, CSV, and CUSTOMIZED. |

...

If CUSTOMIZED is chosen, then the |

...

customized format identifier must be specified. |

Customized format identifier Default |

|---|

...

Value: None |

|---|

Customized format identifier

...

String | Specify the file format object to use for unloading data from the table. The field is valid only when the File format type is specified as Customized. Otherwise, this will be ignored. |

File format option

|

|---|

...

Value: None |

|---|

...

Example: CSV | String | Specify the file format option. Separate multiple options by using blank spaces and commas. |

|---|

...

You can use various file format options, including the binary format, which passes through in the same way as other file formats. See File Format Type Options for more information. Before loading binary data into Snowflake, you must specify the binary encoding format so that the Snap can decode the string type to binary types. Do this by specifying the following binary file format option: BINARY_FORMAT=XXX (where XXX = HEX|BASE64|UTF-8). The file you upload and the file you download must use the same format. For instance, if you load a file in HEX binary format, specify the HEX format for download as well. |

...

Copy options | ||

|---|---|---|

Overwrite Default Value: Deselected | Checkbox | If selected, the UNLOAD overwrites the existing files, if any, in the location where files are stored. If |

...

Deselected, the option does not remove the existing files or overwrite. |

Generate single file Default |

|---|

...

Value: |

|---|

...

Deselected |

|---|

...

Checkbox | If selected, the UNLOAD will generate a single file. If it is not selected, the filename prefix needs to be included in the path. |

Max file size

|

|---|

...

Max file size

...

Value: 0 | Integer | Specify the maximum size (in bytes) of each file to be generated in parallel per thread. The number should be greater than 0. If it is less than or equal to 0, the Snap uses the default size for Snowflake: 16000000 (16 MB). |

|---|---|---|

Encryption type Default |

...

Value: |

|---|

...

None |

|---|

...

Encryption type

Example: Server Side Encryption | Dropdown list | Specify the type of encryption to be used on the data. The available encryption options are:

|

|---|

Default value: No default value.

...

The KMS Encryption option is available only for S3 Accounts (not for Azure Accounts) with Snowflake. |

KMS key |

|---|

...

Default Value: N/A | String/Expression | Specify the KMS key that you want to use for S3 encryption. For more information about the KMS key, see AWS KMS Overview and Using Server Side Encryption. |

|---|

Default value: No default value.

This property applies only when you select Server-Side KMS Encryption in the Encryption Type field above. | ||

Include column headings Default Value: Deselected | Checkbox | If selected, the table column headings are included in the generated files. If multiple files are generated, the headings are included in every file. |

|---|

Default value: Not selected

Validation Mode

...

Validation Mode

| Dropdown list | Select this mode for visually verifying the data before unloading it. If |

|---|

...

you select NONE, validation mode is disabled; otherwise, the unloaded data is not written to the file. Instead, it is sent as a response to the output. The options available include:

|

Default value: NONE

Manage Queued Queries Default Value: Continue to execute queued queries when the pipeline is stopped or if it fails | Dropdown list | Select this property to decide whether the Snap should continue or cancel the execution of the queued Snowflake Execute SQL queries when you stop the pipeline. |

|---|

...

If you select Cancel queued queries when the pipeline is stopped or if it fails, then |

...

the read queries under execution are canceled, whereas the write queries under execution are not canceled. |

...

Snowflake internally determines which queries are safe to be canceled and cancels those queries. |

...

Default Value: Execute only | Dropdown list |

|

|---|

The Snap behaves the same on a Groundplex as it does in a Cloudplex.
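The copy options, encryption, and validation settings described above correspond to options of Snowflake's COPY INTO command for unloading. The following is a sketch of how they might appear in plain SQL, assuming a hypothetical stage `my_ext_stage`, bucket `my-bucket`, and storage integration `my_s3_int`; it is not necessarily the exact statement the Snap issues.

```sql
-- Overwrite existing files, write a single file, cap its size, and include column headings.
COPY INTO @my_ext_stage/unload/company.csv
  FROM (SELECT * FROM public.company ORDER BY id)
  FILE_FORMAT = (TYPE = CSV)
  OVERWRITE = TRUE
  SINGLE = TRUE
  MAX_FILE_SIZE = 16000000
  HEADER = TRUE;

-- Validation: return the query rows in the response instead of writing files.
COPY INTO @my_ext_stage/unload/company_
  FROM (SELECT * FROM public.company ORDER BY id)
  FILE_FORMAT = (TYPE = CSV)
  VALIDATION_MODE = RETURN_ROWS;

-- Server-side KMS encryption on a direct S3 location (placeholder key ID).
COPY INTO 's3://my-bucket/unload/company_'
  FROM (SELECT * FROM public.company ORDER BY id)
  STORAGE_INTEGRATION = my_s3_int
  FILE_FORMAT = (TYPE = CSV)
  ENCRYPTION = (TYPE = 'AWS_SSE_KMS' KMS_KEY_ID = '<kms_key_id>');
```

Note that the first statement targets a full file name because a single file is requested, while the other two use a filename prefix.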

Troubleshooting

The preview on this Snap does not execute the Snowflake UNLOAD operation. Connect a JSON Formatter Snap and a File Writer Snap to the error view, and then execute the pipeline. If an error occurs, you can preview the output file in the File Writer Snap for the error information.

Examples

Unloading Data (Including Binary Data Types) From Snowflake Database

The following example Pipeline demonstrates how you can unload binary data as a file and load it into an S3 bucket.

...

First, we configure the Snowflake - Unload Snap by providing the following query:

select * from

...

EMP2 – this query unloads data from EMP2 table.

Note that we set the File format option as BINARY_FORMAT='UTF-8' to enable the Snap to pass binary data.

...

Upon validation, the Snap shows the unloadRequest and unloadDestination in its preview.

...

We

...

connect the JSON Formatter

...

Snap to Snowflake - Unload Snap to

...

transform the binary data to JSON format, and finally write this output to a file in S3 bucket using

...

the File Writer Snap. Upon validation, the File Writer Snap writes the output (the unload request); the output preview is shown below.

...

...

The following example illustrates the usage of the Snowflake Unload Snap.

...

...

In this example, we run the Snowflake SQL query using the Snowflake Unload Snap. The Snap selects the data from the table @ADOBEDATA2 and writes the records to the table adobedatanullif using the File Writer.

...

Connect the JSON Formatter Snap to convert the predefined CSV file format to JSON, and write the data using the File Writer Snap.

...

Successful execution of the pipeline produces the following output preview:

...
