
Overview

Apache™ Hadoop® is an open-source software framework for the storage and processing of large datasets.

Use the Snaps in this Snap Pack to read data from and write data to the Hadoop Distributed File System (HDFS).

| Note |
|---|
| A Groundplex must be configured as a Hadoop client for this integration to work. The JAR files and property files that must be installed depend on the version and vendor of your Hadoop distribution. Refer to your vendor's documentation for details. |

Supported Versions

This Snap Pack is tested against:

- CDH 5.8
- CDH 5.10
- CDH 5.16
- CDH 5.16.1
- CDH 6.1.1
- HDP 2.6.1
- HDP 2.6.3
- HDP 2.6.3.1


Customizing the Location of the Temporary Directory

Snaps in the Hadoop Snap Pack briefly save a temporary file on the Snaplex node while processing data, before passing the contents to a downstream Snap. The temporary file is stored in a default location and is automatically deleted after processing completes.

- You can move the temporary file to a custom location by setting the global property `jcc.jvm_options`.
- You can use either of the two methods in this section to change the temporary file location.

| Note |
|---|
| Modifying the `java.io.tmpdir` system property does not change the location of the temporary file. |

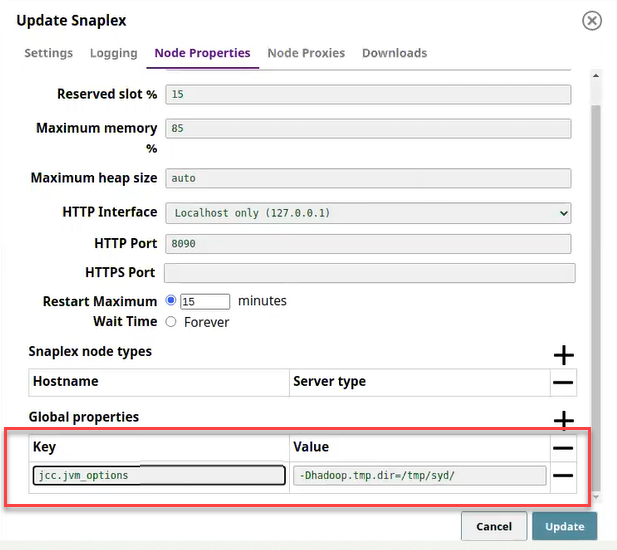

Method 1: Specifying the Temporary Location in the SnapLogic Manager

1. In SnapLogic Manager, on the Snaplexes tab, select the name of the applicable Snaplex.
2. In the Update Snaplex dialog, on the Node Properties tab, under Global properties, add the global property `jcc.jvm_options` with the value `-Dhadoop.tmp.dir=/tmp/syd`, where `/tmp/syd` is the new location for the temporary file.
3. Click Update, and then click OK in the Snaplex Update Notice. This saves the new temporary file location and restarts the Snaplex with the new property setting.

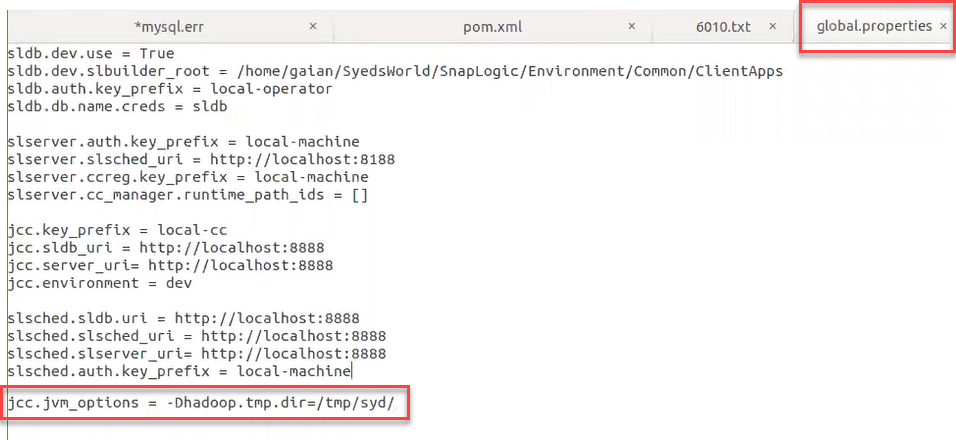

Method 2: Manually Changing the Location of the Temporary File in global.properties

You can also change the location of the temporary file by editing the global.properties file in your local SnapLogic installation.

1. Go to the etc folder of your SnapLogic installation:
    - For a Linux installation, enter `cd $SL_ROOT/etc` at the command prompt.
    - For a Windows installation, enter `cd %SL_ROOT%\etc` at the command prompt.
2. Open the global.properties file and add the following entry: `jcc.jvm_options = -Dhadoop.tmp.dir=/tmp/syd`
3. Save the file and restart the Snaplex to apply the new temporary file location.
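If you manage several Groundplex nodes, you might prefer to script step 2 rather than edit the file by hand. The following Python sketch is a minimal, hypothetical helper, not a SnapLogic tool: the function names `set_hadoop_tmp_dir` and `update_global_properties` are illustrative, and the code assumes that global.properties uses simple `key = value` lines without backslash continuations and that `jcc.jvm_options` holds whitespace-separated JVM flags. It keeps any other options already on the `jcc.jvm_options` line and replaces an existing `-Dhadoop.tmp.dir` flag instead of adding a second one.

```python
import re
from pathlib import Path

# Assumptions (not confirmed by SnapLogic): global.properties uses simple
# "key = value" lines without backslash continuations, and jcc.jvm_options
# holds whitespace-separated JVM flags.
PROPERTY_KEY = "jcc.jvm_options"
TMP_DIR_FLAG = "-Dhadoop.tmp.dir="
ENTRY_PATTERN = re.compile(r"^\s*" + re.escape(PROPERTY_KEY) + r"\s*[=:]\s*(.*)$")


def set_hadoop_tmp_dir(properties_text: str, tmp_dir: str) -> str:
    """Return properties_text with -Dhadoop.tmp.dir=<tmp_dir> in jcc.jvm_options."""
    new_flag = TMP_DIR_FLAG + tmp_dir
    lines = properties_text.splitlines()
    for i, line in enumerate(lines):
        match = ENTRY_PATTERN.match(line)
        if match:
            # Keep the other JVM options; drop any earlier hadoop.tmp.dir flag.
            options = [opt for opt in match.group(1).split() if not opt.startswith(TMP_DIR_FLAG)]
            options.append(new_flag)
            lines[i] = f"{PROPERTY_KEY} = {' '.join(options)}"
            break
    else:
        # No active jcc.jvm_options entry (commented-out lines do not match): add one.
        lines.append(f"{PROPERTY_KEY} = {new_flag}")
    return "\n".join(lines) + "\n"


def update_global_properties(sl_root: str, tmp_dir: str) -> Path:
    """Rewrite <sl_root>/etc/global.properties in place and return its path.

    The Snaplex must still be restarted for the change to take effect.
    """
    path = Path(sl_root) / "etc" / "global.properties"
    path.write_text(set_hadoop_tmp_dir(path.read_text(), tmp_dir))
    return path
```

For example, you could call `update_global_properties` with the value of the `SL_ROOT` environment variable and `/tmp/syd`, then restart the Snaplex as in step 3. Running the helper twice with the same directory leaves the file unchanged, so it is safe to rerun.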

| Note |
|---|
| A folder named s3a is created under the temporary location path to store the temporary file. |
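The s3a folder is consistent with how Hadoop derives the buffer directory of its S3A connector. This explanation assumes that the Snaps use the S3A connector with default settings. Under that assumption, the Hadoop 2.x core-default.xml sets `fs.s3a.buffer.dir` to `${hadoop.tmp.dir}/s3a`, and Hadoop's `Configuration` class resolves `${name}` references against JVM system properties before its own values. The Python sketch below is a simplified, hypothetical model of that expansion, not Hadoop code. It illustrates why a `-Dhadoop.tmp.dir` JVM option moves the folder while `java.io.tmpdir` does not. Newer Hadoop releases may ship a different default for `fs.s3a.buffer.dir`.

```python
import re

# Simplified, hypothetical model of Hadoop's ${name} expansion.
# Assumption: JVM system properties win over configuration values,
# and an unresolved reference is left untouched.
VAR_PATTERN = re.compile(r"\$\{([^}$\s]+)\}")

# Assumed Hadoop 2.x defaults from core-default.xml (other keys omitted).
HADOOP_DEFAULTS = {
    "hadoop.tmp.dir": "/tmp/hadoop-${user.name}",
    "fs.s3a.buffer.dir": "${hadoop.tmp.dir}/s3a",
}


def expand(value: str, config: dict, system_props: dict, max_depth: int = 20) -> str:
    """Expand ${name} references, checking system_props before config."""
    substitutions = 0
    match = VAR_PATTERN.search(value)
    while match is not None:
        if substitutions == max_depth:
            raise ValueError("too many nested ${...} references")
        name = match.group(1)
        replacement = system_props.get(name, config.get(name))
        if replacement is None:
            return value  # unbound variable: keep the literal ${name}
        value = value[: match.start()] + replacement + value[match.end():]
        substitutions += 1
        match = VAR_PATTERN.search(value)
    return value


def s3a_buffer_dir(system_props: dict) -> str:
    """Directory the S3A connector would buffer into under the assumed defaults."""
    return expand(HADOOP_DEFAULTS["fs.s3a.buffer.dir"], HADOOP_DEFAULTS, system_props)


# Default JVM: the folder lands under /tmp/hadoop-<user>.
print(s3a_buffer_dir({"user.name": "snapuser"}))  # -> /tmp/hadoop-snapuser/s3a
# Setting jcc.jvm_options = -Dhadoop.tmp.dir=/tmp/syd moves it.
print(s3a_buffer_dir({"user.name": "snapuser", "hadoop.tmp.dir": "/tmp/syd"}))  # -> /tmp/syd/s3a
# Changing java.io.tmpdir alone has no effect on this path.
print(s3a_buffer_dir({"user.name": "snapuser", "java.io.tmpdir": "/data/tmp"}))  # -> /tmp/hadoop-snapuser/s3a
```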

Known Issues

- After you upgrade your Snaplex to the 4.20 GA version, Pipelines that use the HDFS Reader Snap with Kerberos authentication might remain in the start state.

- ORC Reader and ORC Writer Snaps fail on S3 when you use a 4.20 Snaplex with the previous Snap Pack version (hadoop8270 and snapsmrc528), displaying this error: `Unable to read input stream, Reason: For input string: "100M"`
- ORC Reader/Writer and Parquet Reader/Writer Snaps fail in 4.20 when executing a Pipeline on S3 with this error: `org.apache.hadoop.fs.StorageStatistics`

Snap Pack History

