
Overview

Use this Snap to notify the Kafka Consumer Snap to commit the offset identified by the metadata in each input document.

- This Snap should be used only if the Auto commit field in the Consumer Snap is not selected (set to false).

- This Snap no longer requires a Kafka account.

Prerequisites

- A Confluent Kafka server with a valid account.

- The Kafka Acknowledge Snap in a pipeline must receive the metadata from an upstream Snap, for example, a Kafka Consumer Snap.

Support for Ultra Pipelines

Works in Ultra Pipelines.

Limitations

None.

Known Issues

None.

Snap Input and Output

| Input/Output | Type of View | Number of Views | Examples of Upstream and Downstream Snaps | Description |
|---|---|---|---|---|
| Input | Document | | | Metadata from an upstream Kafka Consumer Snap. The input data schema is: `"metadata": {"topic": "xyz", "partition": 2, "offset": 523, "consumer_group": "CopyGroup1", "client_id": "17a9bbc7-da8f-45f8-813e-1ebca9b80383", "tracker_index": 0, "batch_size": 500, "batch_index": 1, "record_index": 23, "auto_commit": false}` |
| Output | Document | | | Processed and acknowledged Kafka messages: `{"status": "success", "original": {"metadata": {"consumer_group": "abc", "topic": "xyz", "partition": 123, "offset": 456, "auto_commit": false}}}` If the Auto commit field is set to true in the input document, the output schema is: `{"status": "Auto-commit is on", "original": {"metadata": {"consumer_group": "abc", "topic": "xyz", "partition": 123, "offset": 456, "auto_commit": true}}}` |
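The input and output document shapes above can be illustrated with a minimal Python sketch. It is not the Snap's implementation: the function name `acknowledge` and the in-memory `committed` dictionary are hypothetical, and the sketch assumes only what the schemas show, namely that the output wraps the input under `original` and that `status` depends on `auto_commit`.

```python
from typing import Any, Dict, Tuple

# Hypothetical stand-in for the offsets a real consumer would track,
# keyed by (consumer_group, topic, partition).
OffsetKey = Tuple[str, str, int]


def acknowledge(doc: Dict[str, Any], committed: Dict[OffsetKey, int]) -> Dict[str, Any]:
    """Build an output document shaped like the schemas in the table."""
    meta = doc["metadata"]
    if meta.get("auto_commit"):
        # Assumption: with auto-commit on, nothing is recorded here.
        return {"status": "Auto-commit is on", "original": doc}
    key = (meta["consumer_group"], meta["topic"], meta["partition"])
    # Record the acknowledged offset (illustrative bookkeeping only).
    committed[key] = meta["offset"]
    return {"status": "success", "original": doc}


committed: Dict[OffsetKey, int] = {}
doc = {
    "metadata": {
        "consumer_group": "abc",
        "topic": "xyz",
        "partition": 123,
        "offset": 456,
        "auto_commit": False,
    }
}
result = acknowledge(doc, committed)
print(result["status"])  # success
```

Keeping the original document under `original` means a downstream step can still see which topic, partition, and offset each status refers to.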

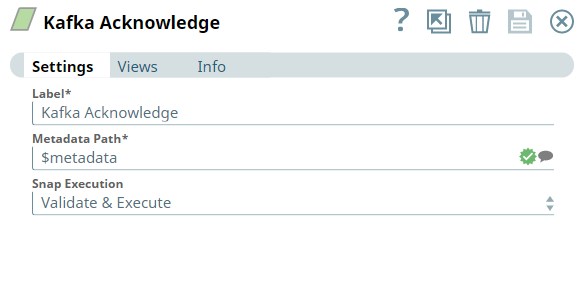

Snap Settings

| Parameter Name | Data Type | Description | Default Value | Example |
|---|---|---|---|---|
| Label | String | Specify a name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your pipeline. | Kafka Acknowledge | Kafka_Acknowledge |
| Metadata path | String | Required. Specify the JSON path of the metadata within each input document. | metadata | $metadata |
| Snap Execution | Drop-down list | Select one of the three modes in which the Snap executes. | Validate & Execute | Validate & Execute |
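To illustrate what the Metadata path setting points at, here is a minimal Python sketch that resolves a simple path such as `$metadata` against an input document. The helper `resolve_path` is hypothetical and handles only an optional `$` prefix followed by dot-separated keys; it is not SnapLogic's expression language or a full JSONPath implementation.

```python
from typing import Any, Dict


def resolve_path(doc: Dict[str, Any], path: str) -> Any:
    """Resolve a simplified path such as "$metadata" or "$a.b" in a document."""
    # Strip the leading "$" (and an optional "." after it), then walk the keys.
    trimmed = path.lstrip("$").lstrip(".")
    current: Any = doc
    for key in filter(None, trimmed.split(".")):
        if not isinstance(current, dict) or key not in current:
            raise KeyError(f"path {path!r} not found at key {key!r}")
        current = current[key]
    return current


doc = {"metadata": {"topic": "xyz", "partition": 2, "offset": 523}}
metadata = resolve_path(doc, "$metadata")
print(metadata["offset"])  # 523
```

If an upstream Snap nests the Kafka metadata under another key, the same idea applies with a longer path, for example `$original.metadata` in this simplified notation.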

Troubleshooting

None.

Example

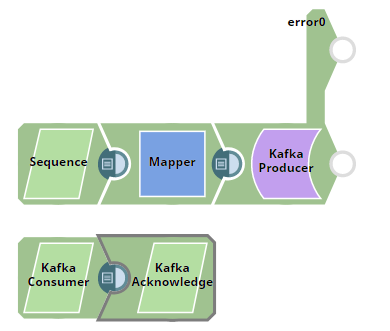

Acknowledging Messages

This example Pipeline demonstrates how we use the:

- Kafka Producer Snap to produce and send messages to a Kafka topic,

- Kafka Consumer Snap to read messages from a topic, and

- Kafka Acknowledge Snap to acknowledge the number of messages read (message count).

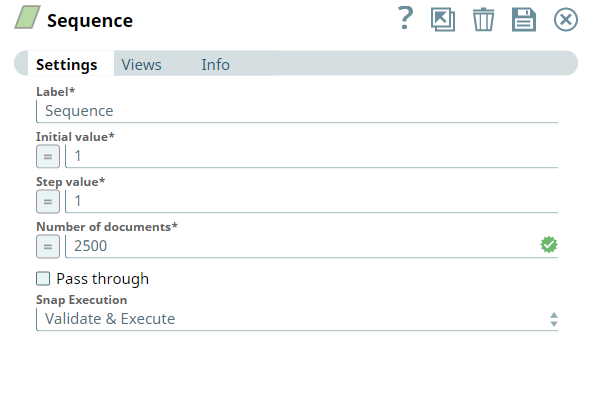

First, we use the Sequence Snap to generate a large number of documents for the Pipeline to send. We configure the Snap to generate 2500 documents with an initial value of 1, so it numbers the documents from 1 through 2500.

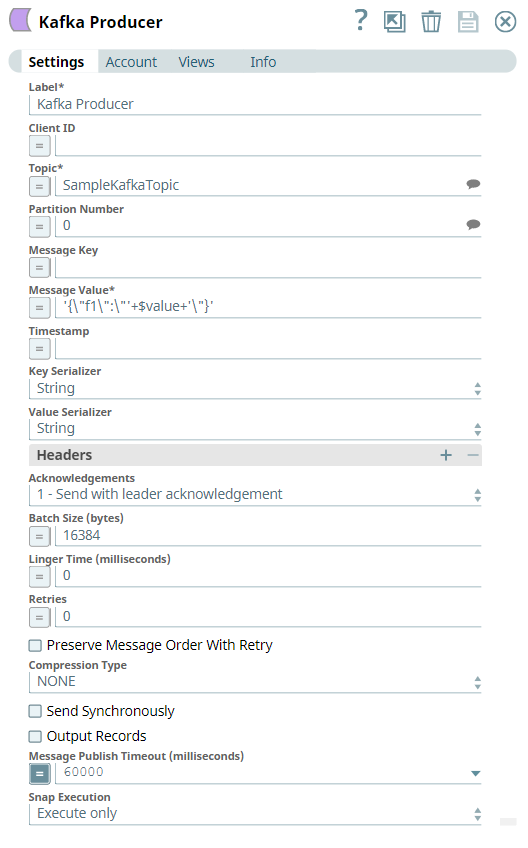

We configure the Kafka Producer Snap to send the documents to the topic SampleKafkaTopic and set the Partition number to 0.

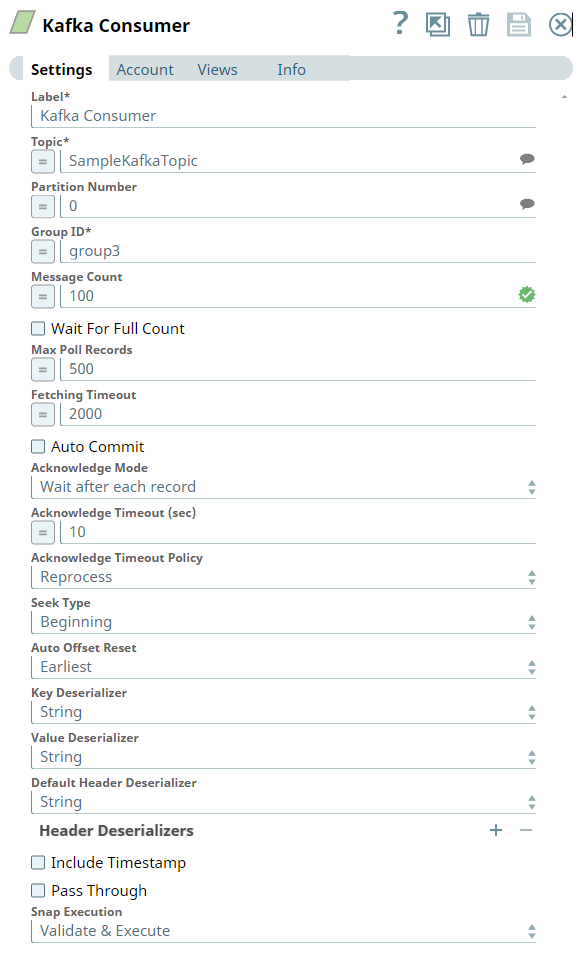

We configure the Kafka Consumer Snap to read messages from the topic SampleKafkaTopic and set the Partition number to 0. The message count is set to 100, so the Snap consumes 100 messages and sends them to the output view.

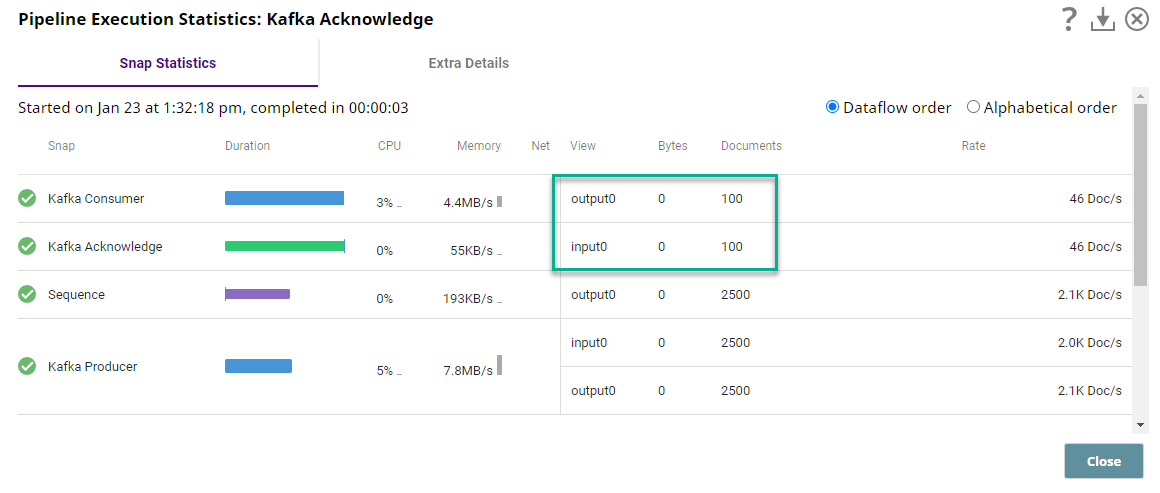

On successful execution of the Pipeline, we can view the consumed and acknowledged messages in the Pipeline Execution statistics. Because the Message Count is set to 100 in the Consumer Snap, the Acknowledge Snap acknowledges the same number of messages.
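The flow of this example can be approximated with a small in-memory Python simulation. No Kafka client or SnapLogic API is involved: the list `log` stands in for partition 0 of SampleKafkaTopic, and all function names and the `value` field are hypothetical. The sketch only illustrates why the acknowledged count matches the Consumer's message count.

```python
from typing import Any, Dict, List

TOPIC = "SampleKafkaTopic"
PARTITION = 0


def sequence(initial: int, count: int) -> List[Dict[str, int]]:
    """Stand-in for the Sequence Snap: documents numbered from `initial`."""
    return [{"value": n} for n in range(initial, initial + count)]


def produce(docs: List[Dict[str, int]], log: List[Dict[str, int]]) -> None:
    """Stand-in for the Producer: append every document to the partition log."""
    log.extend(docs)


def consume(log: List[Dict[str, int]], message_count: int) -> List[Dict[str, Any]]:
    """Stand-in for the Consumer: emit up to message_count messages with metadata."""
    out = []
    for offset, value in enumerate(log[:message_count]):
        out.append({
            "value": value,
            "metadata": {
                "topic": TOPIC,
                "partition": PARTITION,
                "offset": offset,
                "auto_commit": False,
            },
        })
    return out


def acknowledge(docs: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Stand-in for the Acknowledge step: one output document per input."""
    return [{"status": "success", "original": {"metadata": d["metadata"]}} for d in docs]


log: List[Dict[str, int]] = []
produce(sequence(1, 2500), log)
consumed = consume(log, 100)
acked = acknowledge(consumed)
print(len(log), len(consumed), len(acked))  # 2500 100 100
```

Although 2500 messages are produced, only the 100 that the consumer emits carry metadata downstream, so only 100 acknowledgements are generated.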

Downloads

Important Steps to Successfully Reuse Pipelines

- Download and import the Pipeline into SnapLogic.

- Configure Snap accounts as applicable.

- Provide Pipeline parameters as applicable.

See Also

- Apache Kafka

- Confluent - Schema Registry

- Getting Started with SnapLogic

- Snap Support for Ultra Pipelines

- SnapLogic Product Glossary