
Snap type: Write


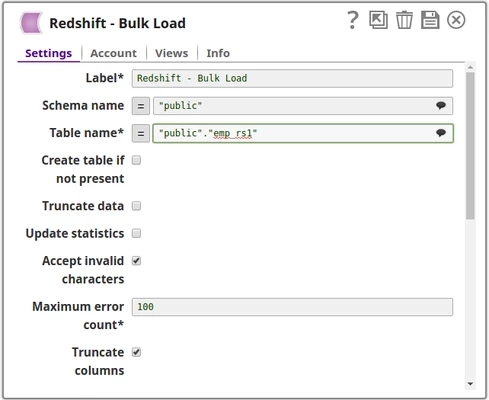


This Snap executes a Redshift bulk load. It first writes the input data to a staging file on S3 and then uses the Redshift COPY command to insert the data into the target table.
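The staging step can be pictured with a short Python sketch. It is an illustration under stated assumptions, not the Snap's implementation: it assumes flat input documents, converts every value to a string, and renders the rows as CSV text. The helper name `to_staging_csv` is hypothetical, and the S3 upload and the COPY call are left out.

```python
import csv
import io
from typing import Any, Iterable, Mapping, Sequence


def to_staging_csv(docs: Iterable[Mapping[str, Any]], columns: Sequence[str]) -> str:
    """Render flat documents as CSV text (hypothetical staging helper).

    Every value is converted to a string; missing keys and None become
    empty fields. Uploading the result to S3 is not shown.
    """
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    for doc in docs:
        writer.writerow(["" if doc.get(col) is None else str(doc.get(col)) for col in columns])
    return buf.getvalue()


rows = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Grace, H."}]
print(to_staging_csv(rows, ["id", "name"]), end="")
```

The `csv` module quotes the value that contains a comma, which is why a real CSV writer is safer than joining strings by hand.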

Table Creation

If the table does not exist when the Snap tries to do the load, and the Create table property is set, the table is created with the columns and data types required to hold the values in the first input document. If you would like the table to be created with the same schema as a source table, you can connect the second output view of a Select Snap to the second input view of this Snap. The extra views in the Select and Bulk Load Snaps are used to pass metadata about the table, effectively allowing you to replicate a table from one database to another.

The table metadata document that is read in by the second input view contains a dump of the JDBC DatabaseMetaData class. The document can be manipulated to affect the CREATE TABLE statement that is generated by this Snap. For example, to rename the name column to full_name, you can use a Mapper (Data) Snap that sets the path "$.columns.name.COLUMN_NAME" to full_name. The document contains the following fields:

columns - Contains the result of the getColumns() method with each column as a separate field in the object. Changing the COLUMN_NAME value will change the name of the column in the created table. Note that if you change a column name, you do not need to change the name of the field in the row input documents. The Snap will automatically translate from the original name to the new name. For example, when changing from name to full_name, the name field in the input document will be put into the "full_name" column. You can also drop a column by setting the COLUMN_NAME value to null or the empty string. The other fields of interest in the column definition are:

TYPE_NAME - The type to use for the column. If this type is not known to the database, the DATA_TYPE field will be used as a fallback. If you want to explicitly set a type for a column, set the DATA_TYPE field.

_SL_PRECISION - Contains the result of the getPrecision() method. This field is used along with the _SL_SCALE field for setting the precision and scale of a DECIMAL or NUMERIC field.

_SL_SCALE - Contains the result of the getScale() method. This field is used along with the _SL_PRECISION field for setting the precision and scale of a DECIMAL or NUMERIC field.

primaryKeyColumns - Contains the result of the getPrimaryKeys() method with each column as a separate field in the object.

declaration - Contains the result of the getTables() method for this table. The values in this object are just informational at the moment. The target table name is taken from the Snap property.

importedKeys - Contains the foreign key information from the getImportedKeys() method. The generated CREATE TABLE statement will include FOREIGN KEY constraints based on the contents of this object. Note that you will need to change the PKTABLE_NAME value if you changed the name of the referenced table when replicating it.

indexInfo - Contains the result of the getIndexInfo() method for this table, with each index as a separate field in the object. Any UNIQUE indexes in here are included in the CREATE TABLE statement generated by this Snap.
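To make the rename and drop rules concrete, here is a minimal Python sketch that turns a metadata document into a CREATE TABLE statement and moves input fields to their renamed columns. The document shape is a deliberate simplification: `columns` is keyed by the original column name as in the `$.columns.name.COLUMN_NAME` path above, but the `primaryKey` list and both helper names are hypothetical, and the DATA_TYPE fallback, foreign keys, and UNIQUE indexes are not modeled.

```python
from typing import Any, Dict, List


def build_create_table(table: str, metadata: Dict[str, Any]) -> str:
    """Generate CREATE TABLE from a simplified metadata document (hypothetical shape).

    metadata["columns"] maps each original column name to a dict with
    COLUMN_NAME, TYPE_NAME and, for DECIMAL/NUMERIC, _SL_PRECISION and _SL_SCALE.
    metadata["primaryKey"] is a list of original column names (a simplification).
    A COLUMN_NAME of None or "" drops the column.
    """
    columns = metadata["columns"]
    defs: List[str] = []
    for col in columns.values():
        name = col.get("COLUMN_NAME")
        if not name:
            continue  # dropped column
        col_type = col["TYPE_NAME"].upper()
        if col_type in ("DECIMAL", "NUMERIC"):
            col_type += f"({col['_SL_PRECISION']},{col['_SL_SCALE']})"
        defs.append(f"{name} {col_type}")
    pk = [columns[c]["COLUMN_NAME"] for c in metadata.get("primaryKey", [])
          if columns[c].get("COLUMN_NAME")]
    if pk:
        defs.append(f"PRIMARY KEY ({', '.join(pk)})")
    return f"CREATE TABLE {table} ({', '.join(defs)})"


def translate_row(row: Dict[str, Any], metadata: Dict[str, Any]) -> Dict[str, Any]:
    """Put each input field into its (possibly renamed) column; skip dropped columns."""
    out: Dict[str, Any] = {}
    for original, value in row.items():
        col = metadata["columns"].get(original)
        if col and col.get("COLUMN_NAME"):
            out[col["COLUMN_NAME"]] = value
    return out


meta = {
    "columns": {
        "id": {"COLUMN_NAME": "id", "TYPE_NAME": "integer"},
        "name": {"COLUMN_NAME": "full_name", "TYPE_NAME": "varchar(64)"},
        "price": {"COLUMN_NAME": "price", "TYPE_NAME": "decimal",
                  "_SL_PRECISION": 10, "_SL_SCALE": 2},
        "legacy": {"COLUMN_NAME": None, "TYPE_NAME": "varchar(10)"},
    },
    "primaryKey": ["id"],
}
print(build_create_table("people", meta))
print(translate_row({"id": 1, "name": "Ada", "price": "9.50", "legacy": "x"}, meta))
```

As in the description above, the input rows keep their original field names (`name`); only the metadata document changes.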


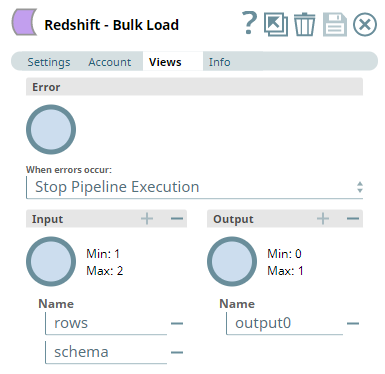


ETL Transformations & Data Flow

This Snap executes a Load function with the given properties. The documents that are provided on the input view will be inserted into the provided table on the provided database.

Input & Output:

Input: This Snap can have an upstream Snap that passes documents through a document output view, such as the Structure or JSON Generator Snap.

Output: The Snap outputs one document specifying the status, including the count of records inserted into the table and the count of failed records. Any error that occurs during the process is routed to the error view.

- Expected Upstream Snaps: The columns of the selected table need to be mapped upstream using a Mapper Snap. The Mapper Snap will provide the target schema, which reflects the schema of the table that is selected for the Redshift Bulk Load Snap.

- Expected Downstream Snaps: The Snap will output a single document for the entire bulk load operation which contains the count of records inserted into the targeted table as well as the count of failed records.


- Does not work in Ultra Tasks.

- The Snap will not automatically fix some errors encountered during table creation since they may require user intervention to be resolved correctly. For example, if the source table contains a column with a type that does not have a direct mapping in the target database, the Snap will fail to execute. You will then need to add a Mapper (Data) Snap to change the metadata document to explicitly set the values needed to produce a valid CREATE TABLE statement.

- If string values in the input document contain the '\0' character (string terminator), the Redshift COPY command, which is used by the Snap internally, fails to handle them properly. Therefore, the Snap skips the '\0' characters when it writes CSV data into the temporary S3 files before the COPY command is executed.

- See the Redshift - Execute documentation for the Redshift limitation that applies when using the PostgreSQL driver.
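The NUL-skipping behavior described above can be sketched in Python as a per-document cleanup applied before the CSV is written. `strip_nul` is a hypothetical helper for illustration, not the Snap's code.

```python
from typing import Any, Dict


def strip_nul(doc: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of doc with NUL characters removed from string values.

    Non-string values are passed through unchanged.
    """
    return {k: v.replace("\x00", "") if isinstance(v, str) else v for k, v in doc.items()}


print(strip_nul({"name": "Ada\x00 Lovelace", "id": 7}))
```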


Account & Access

This Snap uses account references created on the Accounts page of SnapLogic Manager to handle access to this endpoint. The S3 Bucket, S3 Access-key ID and S3 Secret key properties are required for the Redshift-Bulk Load Snap. The S3 Folder property may be used for the staging file. If the S3 Folder property is left blank, the staging file will be stored in the bucket. See Configuring Redshift Accounts for information on setting up this type of account.

For information about using an IAM role, see the Redshift Snap Pack documentation.

Views:

| Input | This Snap has one document input view by default. You can add a second input view that receives the table metadata as a document, so that a missing target table can be created in the database with a schema similar to that of the source table. This schema usually comes from the second output view of a database Select Snap. If the schema comes from a different database, the data types might not be properly handled. |

|---|---|

| Output | This Snap has at most one output view. |

| Error | This Snap has at most one error view and produces zero or more documents in the view. If you enable the error view and expect all failed records to be routed to it, you must set the Maximum error count property high enough. If the number of failed records exceeds the Maximum error count, the Pipeline execution fails with an exception, and the failed records are not routed to the error view. |

Settings

Specify the name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your pipeline.

Specify the database schema name. If it is not defined, the suggestions for the Table Name property list the table names of all schemas. The property is suggestible and retrieves the available database schemas when you request suggested values.

Note: You can pass the values using Pipeline parameters but not upstream parameters.

Example: SYS

Default value: None

Specify the table on which to execute the bulk load operation.

Note: You can pass the values using Pipeline parameters but not upstream parameters.

Example: people

Default value: None

Select this checkbox to automatically create the target table if it does not exist.

Due to implementation details, a newly created table is not visible to subsequent database Snaps during runtime validation. If you want to use the newly loaded data immediately, you must use a child Pipeline that is invoked through a Pipeline Execute Snap.

Default value: Not selected

Specify the source from where the data should load. The available options are Input view and Staged files.

- Input View: If you select this option, leave the Table Columns field empty.

- Staged files: When you select this option, the following fields appear:

  - S3 Path: Provide the S3 path of the staged files that contain the records to be loaded.

  - Column Delimiter: Specify the delimiter that separates column values in the staged files; it must be a single character. The default value is comma (,).

Select this checkbox to have the Snap validate that all input documents are flat map data. If any value is a Map or a List object, the Snap writes an error to the error view, and no document is written to the output view. See the Troubleshooting section for information on handling errors caused by invalid input data.

Default value: Not selected

Note: If this property is not selected, the Snap does not validate the structure of input documents; it converts all values to strings, writes the S3 CSV file, and executes the Redshift COPY command. If the COPY command finds errors in the input CSV data, it writes them to the error table, and the Snap routes these errors to the error view (if the error view is enabled). However, some errors reported by the COPY command may not be easy to understand. Therefore, we recommend enabling input data validation during Pipeline development and testing, as it can also help you troubleshoot the Pipeline.

Info: Flat map data is a collection of key-value pairs in which no value is a Map or a List.

Default value: Not selected
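The flat map check can be sketched in Python as follows. This is a hypothetical illustration of the rule stated above (no value may be a Map or a List), treating Python `dict` as a Map and `list`/`tuple` as a List; the function names are not part of the Snap.

```python
from typing import Any, List, Mapping


def non_flat_fields(doc: Mapping[str, Any]) -> List[str]:
    """Return the keys whose values are a Map (dict) or a List (list or tuple)."""
    return [key for key, value in doc.items() if isinstance(value, (dict, list, tuple))]


def validate_flat(doc: Mapping[str, Any]) -> None:
    """Raise ValueError if the document is not flat map data."""
    bad = non_flat_fields(doc)
    if bad:
        raise ValueError(f"non-flat values in fields: {', '.join(bad)}")


validate_flat({"id": 1, "name": "Ada", "note": None})  # passes silently
print(non_flat_fields({"id": 1, "tags": ["a", "b"], "address": {"city": "Oslo"}}))
```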

Update statistics

Default value: Not selected

Select this checkbox to accept invalid characters in the input. Invalid UTF-8 characters are replaced with a question mark when loading.

Default value: Selected
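The replacement rule can be illustrated locally in Python. This sketch assumes the Snap relies on the COPY command's ACCEPTINVCHARS option (the page does not say so); Amazon documents that option as replacing each invalid UTF-8 character with a replacement string of equal length, using a question mark by default, so the hypothetical error handler below emits one `?` per undecodable byte.

```python
import codecs


def _replace_with_question_marks(exc: UnicodeError):
    """Codec error handler: one '?' per undecodable byte, then resume decoding."""
    if isinstance(exc, UnicodeDecodeError):
        return "?" * (exc.end - exc.start), exc.end
    raise exc


codecs.register_error("question_marks", _replace_with_question_marks)


def decode_accepting_invalid(data: bytes) -> str:
    return data.decode("utf-8", errors="question_marks")


print(decode_accepting_invalid(b"caf\xc3\xa9 \xff ok"))
```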

Specify the maximum number of rows which can fail before the bulk load operation is stopped.

Default: 100

Example: 10 (if you want the Pipeline execution to continue as long as the number of failed records is less than 10)
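The effect of the maximum error count can be sketched in Python. This is a hypothetical model, not the Snap's code: each row result is a `(succeeded, message)` pair, failed rows are collected as they would be for the error view, and the load stops once the failure count reaches the limit, following the Example above. The exact comparison (reaching versus exceeding the limit) is an assumption.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Tuple


class BulkLoadError(Exception):
    """Raised when the failed-row count reaches the maximum error count."""


@dataclass
class LoadSummary:
    inserted: int = 0
    failed: int = 0
    error_rows: List[str] = field(default_factory=list)  # would go to the error view


def summarize(results: Iterable[Tuple[bool, str]], max_errors: int = 100) -> LoadSummary:
    """results: one (succeeded, message) pair per row, in load order."""
    summary = LoadSummary()
    for ok, message in results:
        if ok:
            summary.inserted += 1
            continue
        summary.failed += 1
        summary.error_rows.append(message)
        # Assumption: stop once failures reach the limit (continue only below it).
        if summary.failed >= max_errors:
            raise BulkLoadError(f"{summary.failed} rows failed (limit {max_errors})")
    return summary


print(summarize([(True, ""), (False, "row 2: bad date"), (True, "")], max_errors=10))
```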

Select this checkbox to truncate column values that are larger than the maximum column length in the table.

Default value: Selected

Select this checkbox to disable compression of data being written to S3. Disabling compression will reduce CPU usage on the Snaplex machine, at the cost of increasing the size of data uploaded to S3.

Default value: Not selected

Default value: Not selected

Specify additional options to be passed to the COPY command. For example, EMPTYASNULL indicates that Redshift should load empty fields as NULL. Empty fields occur when the data contains two delimiters in succession with no characters between them. Learn more about the available options in the Amazon Redshift COPY documentation.

Default value: N/A

Example: ACCEPTANYDATE
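To show where additional options end up, here is a hedged Python sketch that assembles a COPY statement as text. COPY, FROM, IAM_ROLE, FORMAT AS CSV, DELIMITER, MAXERROR, GZIP, and EMPTYASNULL are real Redshift COPY parameters, but how the Snap actually composes its statement is not documented here; the function name, bucket, and role ARN are hypothetical placeholders. The single-character delimiter check mirrors the Column Delimiter rule for staged files.

```python
from typing import Sequence


def build_copy(table: str, s3_uri: str, iam_role_arn: str, *, delimiter: str = ",",
               gzip: bool = True, max_errors: int = 100,
               extra_options: Sequence[str] = ()) -> str:
    """Assemble a Redshift COPY statement (illustrative; values are not escaped)."""
    if len(delimiter) != 1:
        raise ValueError("delimiter must be a single character")
    parts = [
        f"COPY {table}",
        f"FROM '{s3_uri}'",
        f"IAM_ROLE '{iam_role_arn}'",
        "FORMAT AS CSV",
        f"DELIMITER '{delimiter}'",
        f"MAXERROR {max_errors}",
    ]
    if gzip:
        parts.append("GZIP")
    parts.extend(extra_options)  # the "additional options", e.g. EMPTYASNULL
    return "\n".join(parts)


print(build_copy("public.people", "s3://example-bucket/staging/people.csv.gz",
                 "arn:aws:iam::123456789012:role/ExampleRedshiftRole",
                 extra_options=["EMPTYASNULL"]))
```

Because nothing is escaped, a sketch like this should only ever receive trusted, configuration-supplied values.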

Define the number of files to be created in S3 per execution. If set to 1, only one file is created in S3 and used for the COPY command. If set to n (n > 1), n files are created as part of a manifest COPY command, allowing a concurrent copy as part of the Redshift load. The Snap itself does not stream to S3 concurrently; it uses a round-robin mechanism on the incoming documents to populate the n files. The order of the records is not preserved during the load.

Default value: None
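The round-robin split and the manifest it feeds can be sketched in Python. The JSON layout (`entries`, each with `url` and `mandatory`) follows the manifest format documented for Redshift's COPY MANIFEST option; the helper names and S3 URLs are hypothetical, and this is not the Snap's implementation.

```python
import json
from typing import Any, List, Sequence


def round_robin(docs: Sequence[Any], n: int) -> List[List[Any]]:
    """Distribute documents across n files in round-robin order."""
    if n < 1:
        raise ValueError("parallelism must be at least 1")
    files: List[List[Any]] = [[] for _ in range(n)]
    for i, doc in enumerate(docs):
        files[i % n].append(doc)
    return files


def build_manifest(urls: Sequence[str]) -> str:
    """Redshift COPY manifest in which every listed file is mandatory."""
    return json.dumps({"entries": [{"url": u, "mandatory": True} for u in urls]}, indent=2)


parts = round_robin(["r0", "r1", "r2", "r3", "r4"], 2)
print(parts)  # [['r0', 'r2', 'r4'], ['r1', 'r3']]
print(build_manifest(["s3://example-bucket/staging/part0.csv.gz",
                      "s3://example-bucket/staging/part1.csv.gz"]))
```

Reading the two files back to back yields r0, r2, r4, r1, r3, which illustrates why the record order is not preserved.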

Appears when the parallelism value is greater than 1.

Select the type of instance from the following options:

- Default

- High-performance S3 upload optimized


Default Value: Default

Example: High-performance S3 upload optimized

Server-side encryption

Select this checkbox to enable encryption for the data that is loaded. This defines the S3 encryption type to use when temporarily uploading the documents to S3 before the data is inserted into Redshift.

Default value: Not selected

Specify the type of Key Management Service (KMS) S3 encryption to be used on the data. The available encryption options are:

- None - Files are not encrypted using KMS encryption.

- Server-Side KMS Encryption - The output files on Amazon S3 are encrypted using server-side encryption with the Amazon S3 generated KMS key.

Default value: None

Note: If both the KMS and client-side encryption types are selected, the Snap gives precedence to SSE and displays an error prompting you to select only one of the options.

Activates when KMS Encryption type is set to Server-Side KMS Encryption.

Specify the KMS key to use for the S3 encryption. For more information about the KMS key, refer to AWS KMS Overview and Using Server Side Encryption.

Default value: None
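One way to picture these encryption settings is as the extra arguments of an S3 upload. The sketch below is hypothetical: the mapping from the Snap's settings to values is an assumption, while `ServerSideEncryption` (`AES256` or `aws:kms`) and `SSEKMSKeyId` are real boto3 S3 upload arguments. When no key ID is given with `aws:kms`, S3 uses its AWS managed key.

```python
from typing import Dict, Optional


def s3_encryption_args(server_side: bool, kms_type: str = "None",
                       kms_key: Optional[str] = None) -> Dict[str, str]:
    """Map the encryption settings to boto3 S3 upload arguments (assumed mapping)."""
    if kms_type == "Server-Side KMS Encryption":
        args = {"ServerSideEncryption": "aws:kms"}
        if kms_key:
            args["SSEKMSKeyId"] = kms_key  # otherwise S3 uses its AWS managed key
        return args
    if server_side:
        return {"ServerSideEncryption": "AES256"}  # SSE-S3
    return {}


print(s3_encryption_args(True, "Server-Side KMS Encryption", "alias/example-key"))
```

With boto3, the resulting dictionary could be passed as `ExtraArgs` to an S3 client's `upload_file` call.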

Note: Auto-commit must be enabled for Vacuum.

Default value: None

Specifies the threshold above which VACUUM skips the sort phase. If this property is left empty, Redshift sets it to 95% by default.

Default value: [None]
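A hedged Python sketch of the statement these settings might produce follows. `VACUUM [FULL | SORT ONLY | DELETE ONLY] table TO n PERCENT` is real Redshift syntax, and Redshift does not allow VACUUM inside a transaction block, which is consistent with the auto-commit note above. The helper name is hypothetical, and the sketch covers only three vacuum variants.

```python
from typing import Optional

# Only the variants used in this sketch; REINDEX and others are left out.
VACUUM_TYPES = ("FULL", "SORT ONLY", "DELETE ONLY")


def build_vacuum(table: str, vacuum_type: str = "NONE",
                 threshold: Optional[int] = None) -> Optional[str]:
    """Return a VACUUM statement, or None when vacuum_type is NONE."""
    if vacuum_type == "NONE":
        return None
    if vacuum_type not in VACUUM_TYPES:
        raise ValueError(f"unsupported vacuum type: {vacuum_type}")
    sql = f"VACUUM {vacuum_type} {table}"
    if threshold is not None:  # omitted: Redshift applies its 95 percent default
        if not 0 <= threshold <= 100:
            raise ValueError("threshold must be between 0 and 100")
        sql += f" TO {threshold} PERCENT"
    return sql


print(build_vacuum("public.people", "FULL", 75))
```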


Redshift's Vacuum Command

In Redshift, when rows are DELETED or UPDATED in a table, they are only logically deleted (flagged for deletion), not physically removed from disk. These rows continue to consume disk space, and their blocks are still scanned when a query scans the table. This increases table storage and degrades performance due to otherwise avoidable disk I/O during scans. A vacuum recovers the space from deleted rows and restores the sort order.

Troubleshooting


Basic Use Case

The following Pipeline describes how the Snap functions as a standalone Snap in a Pipeline:

Use Case: Replicate a Database Schema in Redshift

MySQL Select to Redshift Bulk Load

In this example, a MySQL Select Snap selects data from the 'AV_Persons' table in the 'enron' schema. The Mapper Snap maps this data to the target table's schema, and the data is then loaded into the "bulkload_demo" table in the "prasanna" schema:

- The MySQL Select Snap selects the data from the MySQL database.

- The Mapper Snap maps the data to the input schema associated with the Redshift Bulk Load target table.

- The Redshift Bulk Load Snap writes the input documents coming from the Mapper to an S3 file.

- Finally, the Snap invokes the COPY command to load the created S3 file into the destination table.

Typical Snap Configurations

The key configuration is how the values, such as the table name, are passed to the Snap to perform the bulk load of the records. They can be passed:

Without Expression

The values are passed directly to the Snap.

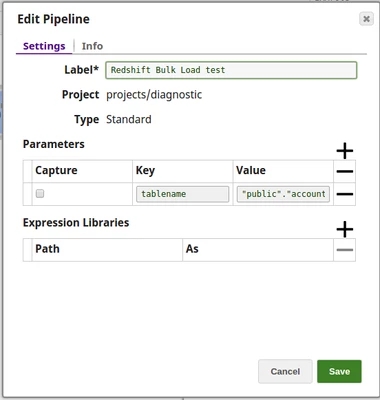

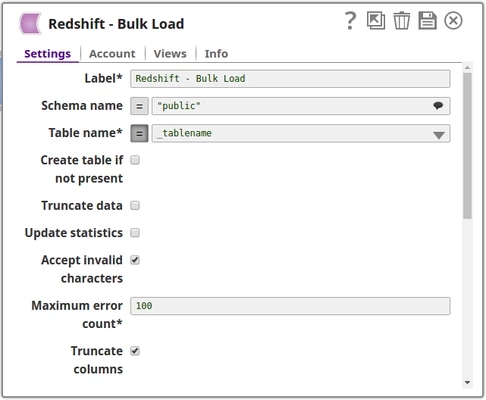

With Expression

Using Pipeline parameters

The Table name is passed as a Pipeline parameter.

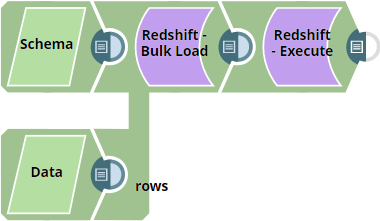

Basic Use Case #2: Use of twin inputs to define schema while creating tables in Redshift

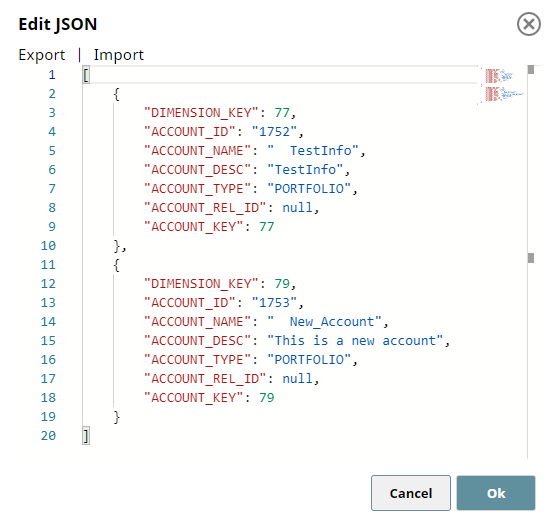

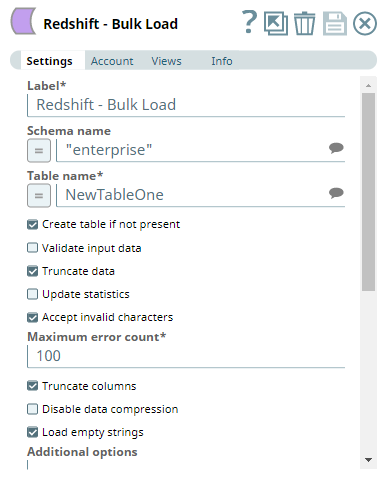

The following Pipeline demonstrates how to use the second input view of Redshift - Bulk Load Snap to define the schema for creating a non-existent table in Redshift data store.

We use two JSON Generator Snaps: one for passing the table schema and another for passing the data rows.

| Input 1: Schema | Input 2: Data |

|---|---|

We use the Redshift - Bulk Load Snap to combine these two inputs (schema and data rows) and create the table if it does not exist in the Redshift data store.

| Redshift - Bulk Load Snap Settings | Views |

|---|---|

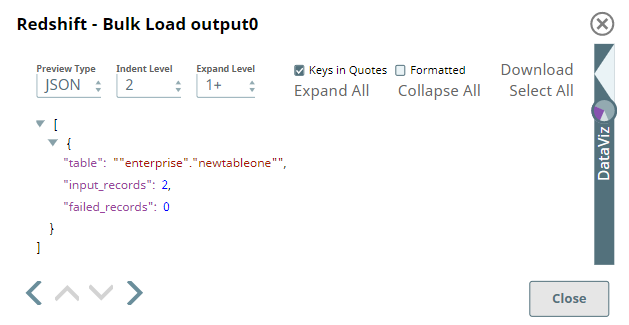

| Output | |

After creating the table and loading the data based on these inputs, the Snap displays the result in the output in terms of the number of records loaded into the specified table and the number of failed records.

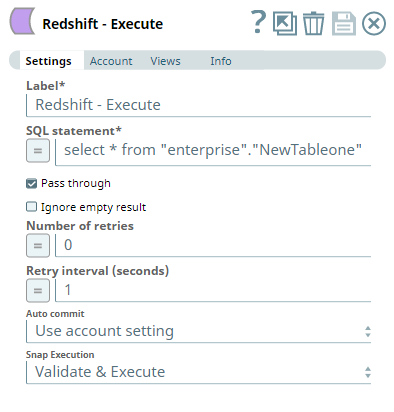

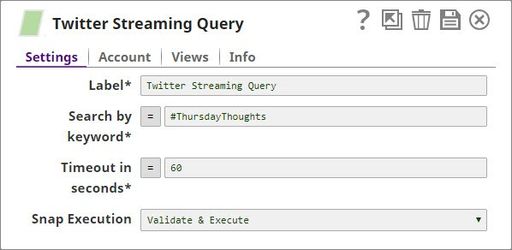

To see the records inserted into the new table, we can connect a Redshift - Execute Snap and run a SELECT query on the newly created table. It retrieves the data inserted into each record. You may see more records in the output if the table already existed and the new rows were inserted into it.

| Redshift - Execute Snap | Output |

|---|---|

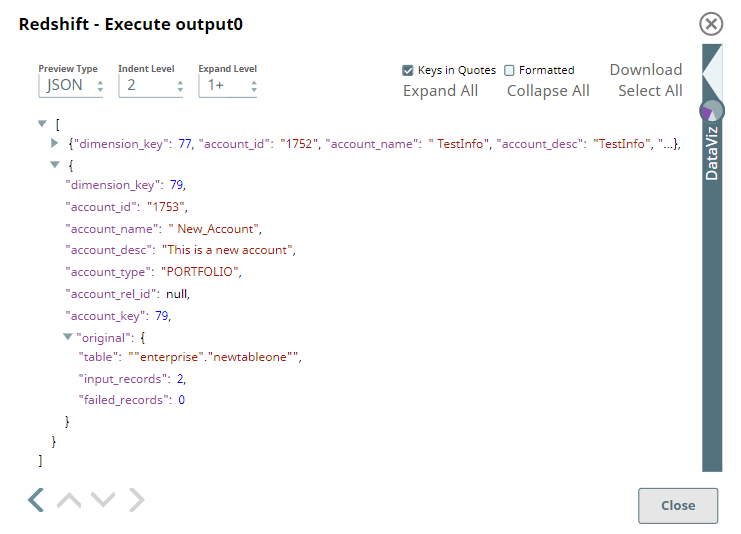

Advanced Use Case

The following Pipeline shows how a lookup functionality is typically used in an enterprise environment. In this Pipeline, tweets pertaining to the keyword "#ThursdayThoughts" are extracted using a Twitter Query Snap and loaded into the table "twittersnaplogic" in the public schema using the Redshift Bulk Load Snap. When executed, all the records are loaded into the table.

The Pipeline performs the following ETL operations:

Extract: The Twitter Query Snap selects 25 tweets (a value specified in the Maximum tweets property) pertaining to the Twitter query.

Transform: The Sort Snap, labeled Sort by Username in this Pipeline, sorts the records based on the value of the field user.name.

Extract: The Salesforce SOQL Snap extracts records matching the user.name field value. The extracted fields are LastName, FirstName, Salutation, Name, and Email.

Load: The Redshift Bulk Load Snap loads the results into the table, twittersnaplogic, on the Redshift instance.

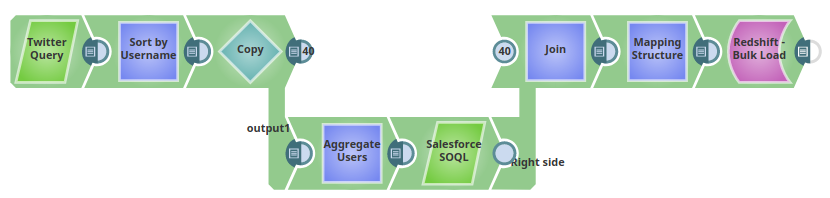

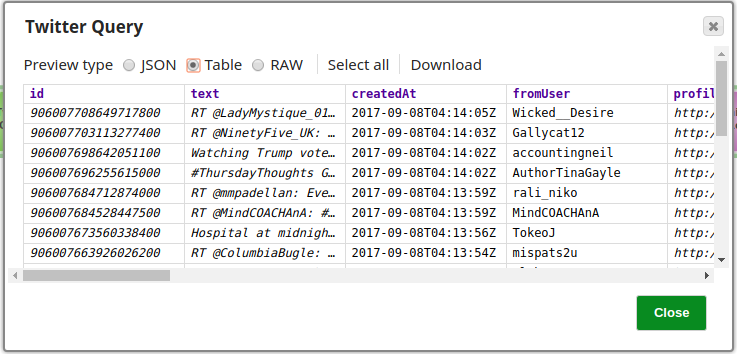

The configuration of the Twitter Query Snap is as shown below:

The Twitter Query Snap is configured to retrieve 25 records from the account. A preview of the Snap's operation is shown below:

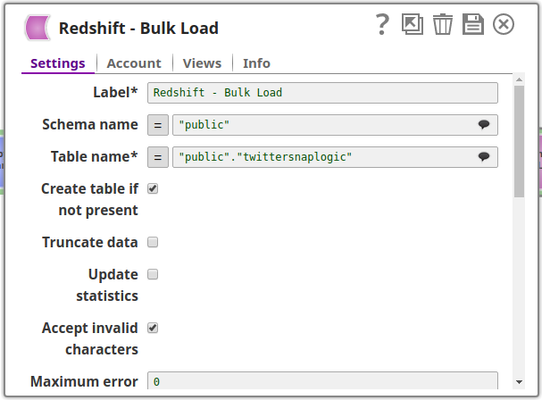

The Redshift Bulk Load Snap is configured as shown in the figure below:

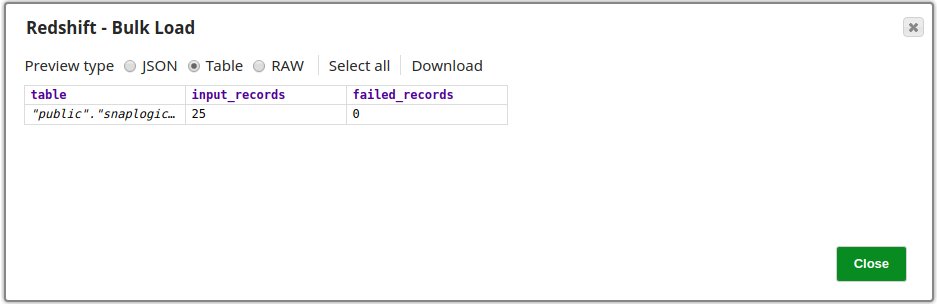

Successful execution of the Snap is denoted by a count of the records loaded into the specified table. The number of failed records is also shown, provided it does not exceed the limit specified in the Maximum error count property; if it does, the Pipeline stops. The image below shows that all 25 records retrieved by the Twitter Query Snap have been loaded into the table successfully.

Advanced Use Case #2

The following Pipeline demonstrates how a bulk load functionality is typically used in an enterprise environment. In this Pipeline, the File Reader Snap reads the data, which is then loaded into the table using the Redshift Bulk Load Snap. When executed, all the records are loaded into the table.

The Pipeline performs the following ETL operations:

Extract: The File Reader Snap reads the data from the file and passes it to the CSV Parser Snap.

Transform: The CSV Parser Snap parses the data and passes it to the Redshift Bulk Load Snap.

Load: The Redshift Bulk Load Snap loads the entire data set into the Redshift table, creating a new table with the provided schema.

Downloads


...
