Overview

You can use the Snowflake - Bulk Load Snap to load data from input sources or from files stored on external object stores, such as Amazon S3, Google Cloud Storage, and Azure Blob Storage, into the Snowflake data warehouse.

Snap Type

The Snowflake - Bulk Load Snap is a Write-type Snap that performs a bulk load operation.

Prerequisites

You must have minimum permissions on the database to execute Snowflake Snaps. To understand if you already have them, you must retrieve the current set of permissions. The following commands enable you to retrieve those permissions:

SHOW GRANTS ON DATABASE <database_name>;

SHOW GRANTS ON SCHEMA <schema_name>;

SHOW GRANTS TO USER <user_name>;

Security Prerequisites

You must have the following permissions in your Snowflake account to execute this Snap:

Usage (DB and Schema): Privilege to use the database, role, and schema.

Create table: Privilege to create a temporary table within this schema.

The following commands enable minimum privileges in the Snowflake console:

grant usage on database <database_name> to role <role_name>;

grant usage on schema <database_name>.<schema_name> to role <role_name>;

grant "CREATE TABLE" on database <database_name> to role <role_name>;

grant "CREATE TABLE" on schema <database_name>.<schema_name> to role <role_name>;

Learn more about Snowflake privileges: Access Control Privileges.

Internal SQL Commands

This Snap uses the following Snowflake commands internally:

COPY INTO - Enables loading data from staged files to an existing table.

PUT - Enables staging the files internally in a table or user stage.

Requirements for External Storage Location

The following are mandatory when using an external staging location:

When using an Amazon S3 bucket for storage:

The Snowflake account should contain S3 Access-key ID, S3 Secret key, S3 Bucket and S3 Folder.

The Amazon S3 bucket where Snowflake writes the output files must reside in the same region as your cluster.

When using a Microsoft Azure storage blob:

A working Snowflake Azure database account.

When using Google Cloud Storage:

Provide permissions such as Public access and Access control to the Google Cloud Storage bucket on the Google Cloud Platform.

Support for Ultra Pipelines

Works in Ultra Pipelines. However, we recommend that you do not use this Snap in an Ultra Pipeline.

Limitations

The special character '~' is not supported in the temp directory name on Windows. It is reserved for the user's home directory.

Snowflake provides the option to use Cross Account IAM in external staging. You can adopt cross-account access through the Storage Integration option. With this setup, you do not need to pass any credentials around, and you access the storage using only the named stage or integration object. For more details: Configuring Cross Account IAM Role Support for Snowflake Snaps.

The Snowflake Bulk Load Snap expects the columns coming from upstream Snaps to be in the same order as the columns of the target table; otherwise, data validation fails.
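The column-order requirement can be illustrated with a minimal Python sketch. The column list and the `reorder_document` helper are hypothetical and exist only for illustration; they are not part of the Snap. In a Pipeline, an upstream Snap would be responsible for producing the fields in this order.

```python
from collections import OrderedDict

# Hypothetical column order of the target table (an assumption for illustration).
TABLE_COLUMNS = ["ID", "NAME", "CITY"]


def reorder_document(document, table_columns):
    """Return a copy of `document` whose keys follow `table_columns`.

    Raises KeyError when a column is missing, so the mismatch surfaces
    before the load instead of during data validation.
    """
    missing = [col for col in table_columns if col not in document]
    if missing:
        raise KeyError("document is missing columns: %s" % ", ".join(missing))
    return OrderedDict((col, document[col]) for col in table_columns)


# An upstream document whose fields arrive in a different order than the table.
upstream_doc = {"CITY": "Pune", "ID": 7, "NAME": "Asha"}
aligned = reorder_document(upstream_doc, TABLE_COLUMNS)
print(list(aligned.keys()))
```

Failing early on a missing column is a design choice of this sketch; the Snap itself reports such problems through data validation.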

If a Snowflake Bulk Load operation fails due to inadequate memory space on the JCC node when the Data source is Input View and the Staging location is Internal Stage, you can store the data on an external staging location (S3, Azure Blob or GCS).

When the bulk load operation fails due to an invalid input and when the input does not contain the default columns, the error view does not display the erroneous columns correctly.

This is a bug in Snowflake and is being tracked under JIRA SNOW-662311 and JIRA SNOW-640676.

Behavior change

Starting from 4.35 GA, if your Snaplex is behind a proxy and the Snowflake Bulk Load Snap uses the default Snowflake driver (3.14.0 JDBC driver), then the Snap might encounter a failure. There are two ways to prevent this failure:

1. Add the following key-value pair in the Global properties section of the Node Properties tab: Key: jcc.jvm_options, Value: -Dhttp.useProxy=true

2. Add the following key-value pairs in the URL properties of the Snap under Advanced properties.

We recommend you use the second approach to prevent the Snap’s failure.

Known Issues

Known Issue: The create table operation fails if it contains a geospatial data type column.

Workaround: Create the table manually ahead of time or use the Snowflake - Execute Snap to create the table.

Because of performance issues, all Snowflake Snaps now ignore the Cancel queued queries when pipeline is stopped or if it fails option for Manage Queued Queries, even when selected. Snaps behave as though the default Continue to execute queued queries when the Pipeline is stopped or if it fails option were selected.

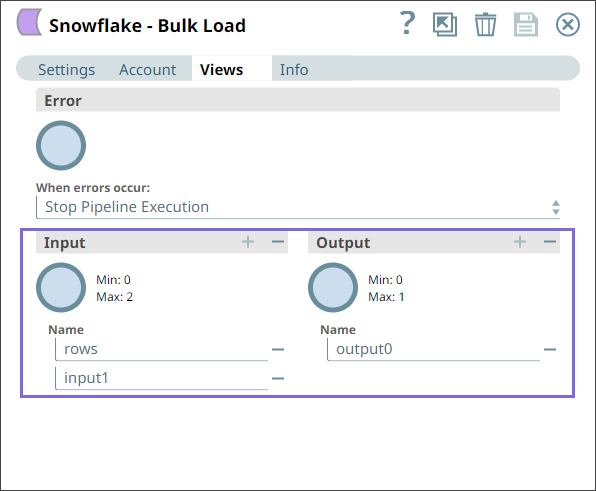

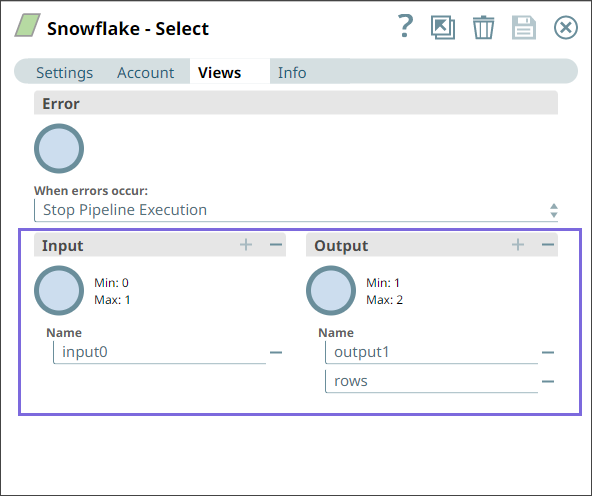

Snap Views

Type | Format | Number of Views | Examples of Upstream and Downstream Snaps | Description |

|---|---|---|---|---|

Input | Document |

|

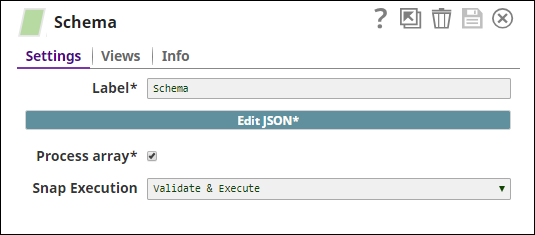

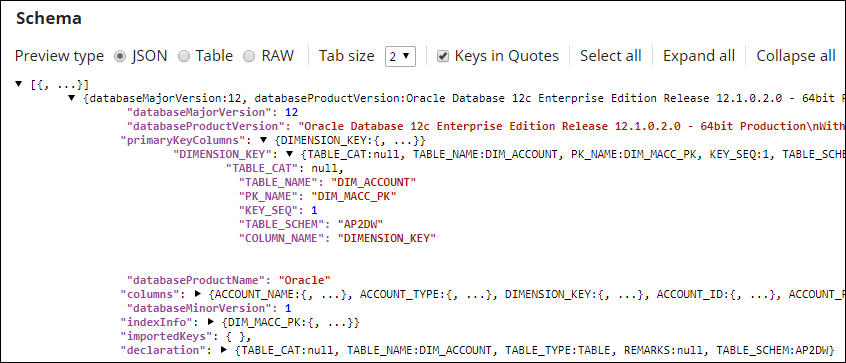

| Documents containing the data to be uploaded to the target location. Second Input ViewThis Snap has one document input view by default. You can add a second input view for metadata for the table as a document so that when the target table is absent, this table metadata can be created in the database with a similar schema as the source table. This schema is usually from the second output of a database Select Snap. If the schema is from a different database, the data types might not be properly handled. Learn more about adding metadata for the table in the second input view from the example Providing Metadata For Table Using The Second Input View. |

Output | Document |

|

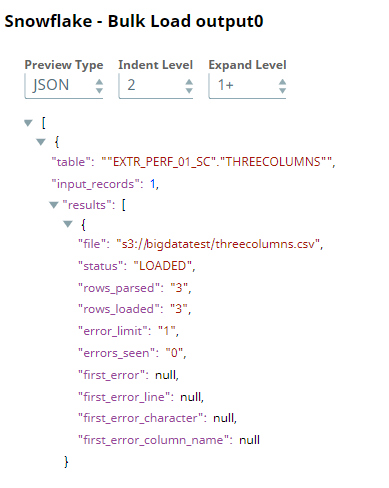

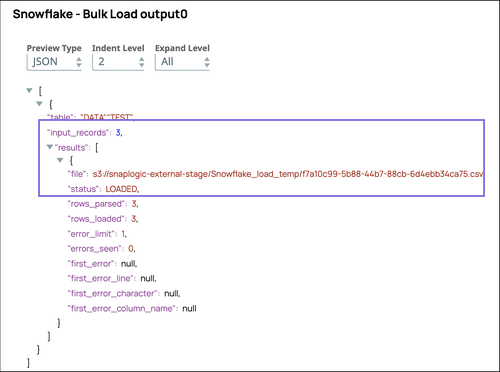

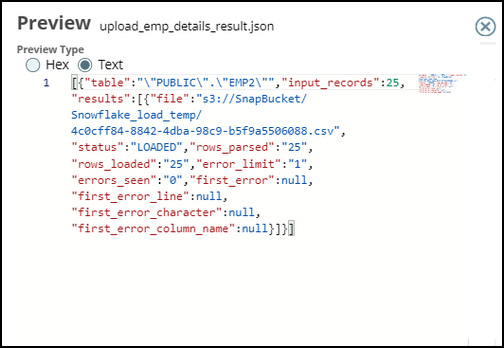

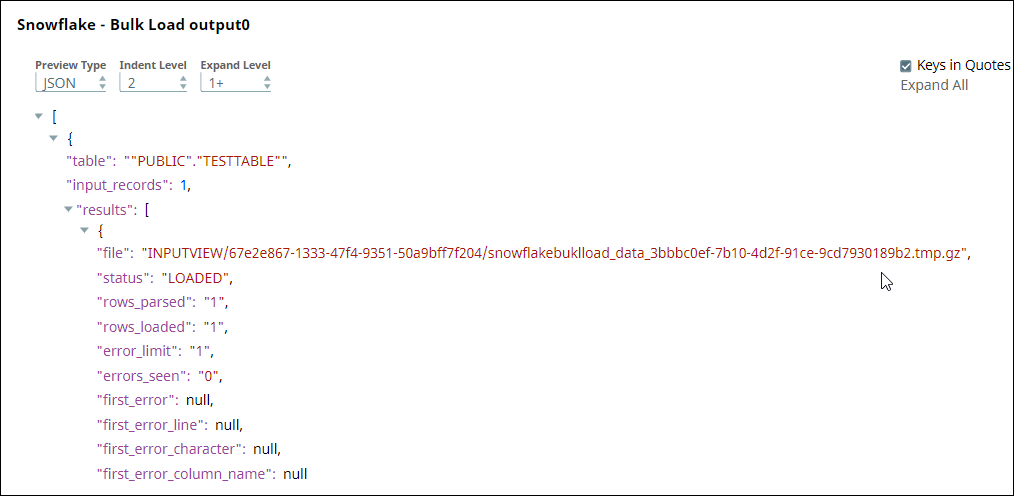

| If an output view is available, then the output document displays the number of input records and the status of the bulk upload as follows: |

Error | Error handling is a generic way to handle errors without losing data or failing the Snap execution. You can handle the errors that the Snap might encounter when running the Pipeline by choosing one of the following options from the When errors occur list under the Views tab:

Learn more about Error handling in Pipelines. | |||

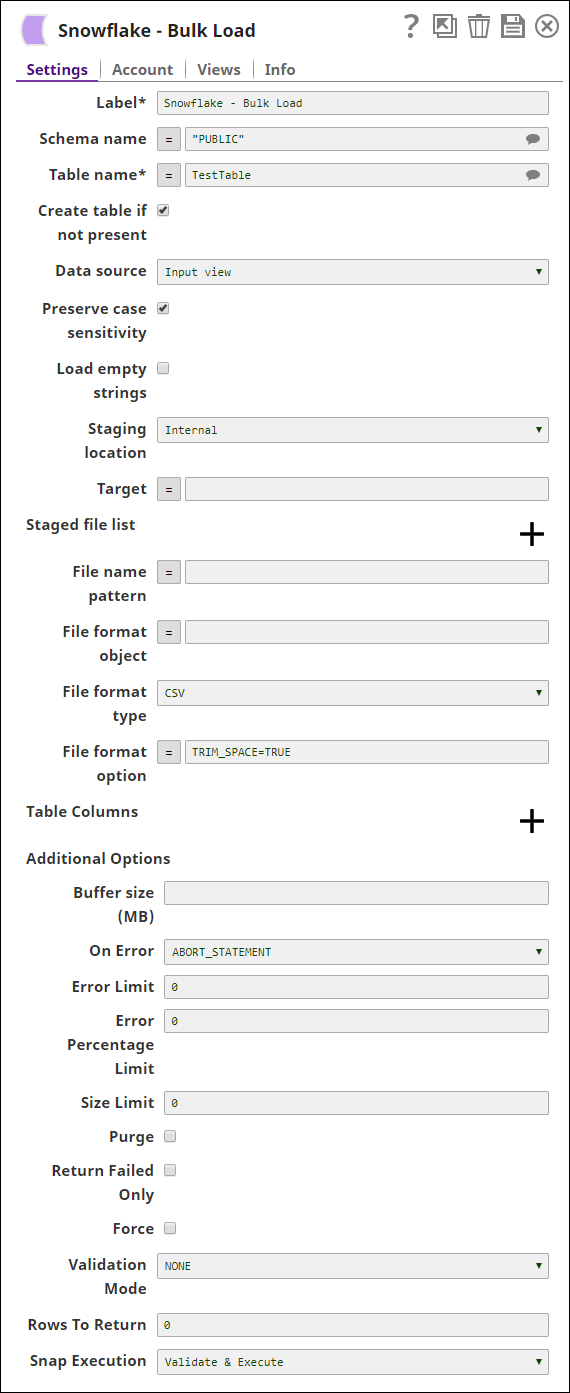

Snap Settings

Asterisk (*): Indicates a mandatory field.

Suggestion icon: Indicates a list that is dynamically populated based on the configuration.

Expression icon: Indicates whether the value is an expression (if enabled) or a static value (if disabled). Learn more about Using Expressions in SnapLogic.

Add icon: Indicates that you can add fields in the field set.

Remove icon: Indicates that you can remove fields from the field set.

Field Name | Field Type | Field Dependency | Description | |

|---|---|---|---|---|

Label* | String | N/A | Specify the name for the instance. You can modify this to be more specific, especially if you have more than one of the same Snap in your Pipeline. | |

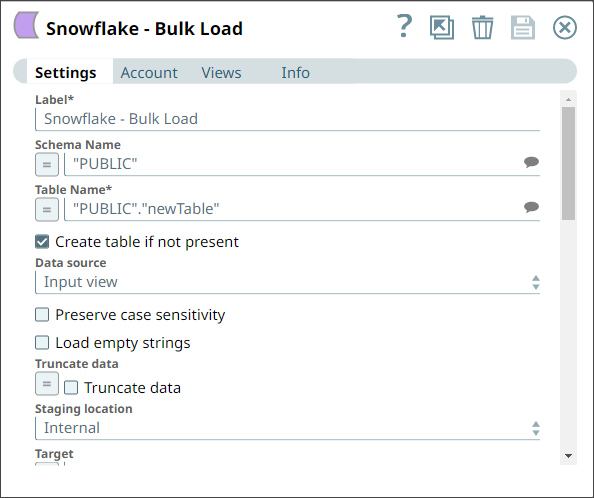

Schema Name Default Value: N/A | String/Expression/Suggestion | N/A | Specify the database schema name. If it is not defined, the suggestions for the Table Name field retrieve all table names from all schemas. This property is suggestible and retrieves the available database schemas when you request suggestions. You can pass the value using Pipeline parameters but not upstream parameters. | |

Table Name* Default Value: N/A | String/Expression/Suggestion | N/A | Specify the name of the table on which to execute the bulk load operation. You can pass the value using Pipeline parameters but not upstream parameters. | |

Create table if not present Default Value: Deselected | Checkbox | N/A | Select this checkbox to automatically create the target table if it does not exist.

This should not be used in production since there are no indexes or integrity constraints on any column and the default varchar() column is over 30k bytes. The create table operation fails if it contains a geospatial data type column. | |

Data source Default Value: Input view | Dropdown list | N/A | Specify the source from which the data is loaded. The available options are Input view and Staged files. When you select Input view, leave the Table Columns field empty. When you select Staged files, provide in the Table Columns field the names of the columns to which the records are to be added. | |

Preserve case sensitivity Default Value: Deselected | Checkbox | N/A | Select this checkbox to preserve the case sensitivity of the column names. | |

Load empty strings Default Value: Deselected | Checkbox | N/A | Select this checkbox to load empty string values in the input documents as empty strings into the string-type fields. Otherwise, empty string values in the input documents are loaded as null. Null values are loaded as null regardless. | |

Truncate data Default Value: Deselected | Checkbox | N/A | Select this checkbox to truncate existing data before performing data load. With the Bulk Update Snap, instead of doing truncate and then update, a Bulk Insert would be faster. | |

Staging location

| Dropdown list/Expression | N/A | Select the type of staging location that is to be used for data loading:

The Snap creates temporary files in JCC when the Staging location is internal and the Data source is input view. These temporary files are removed automatically once the Pipeline completes execution.

| |

Flush chunk size (in bytes) | String/Expression | Appears when you select Input view for Data source and Internal for Staging location. | When using internal staging, data from the input view is written to a temporary chunk file on the local disk. When the size of a chunk file exceeds the specified value, the current chunk file is copied to the Snowflake stage and then deleted. A new chunk file simultaneously starts to store the subsequent chunk of input data. The default size is 100,000,000 bytes (100 MB), which is used if this value is left blank. | |

Target Default Value: N/A | String/Expression | N/A | Specify an internal or external location to load the data. If you select External for Staging Location, a staging area is created in Azure, GCS, or S3 as configured. Otherwise, a staging area is created in Snowflake's internal location. This field accepts the following input:

When you use an expression, you must provide its value as a Pipeline parameter; for performance reasons, it cannot come from the upstream Snap. | |

Storage Integration Default Value: N/A | String/Expression | Appears when you select Staged files for Data source and External for Staging location. | Specify the pre-defined storage integration that is used to authenticate the external stages. When you use an expression, you must provide its value as a Pipeline parameter; for performance reasons, it cannot come from the upstream Snap. | |

Staged file list | Use this field set to define the staged file(s) to be loaded to the target table. | |||

Staged file | String/Expression | Appears when you select Staged files for Data source. | Specify the staged file to be loaded to the target table. | |

File name pattern Default Value: N/A Example: .length | String/Expression | Appears when you select Staged files for Data source. | Specify a regular expression pattern string, enclosed in single quotes, that matches the file names and/or paths. | |

File format object Default Value: None Example: jsonPath() | String/Expression | N/A | Specify an existing file format object to use for loading data into the table. The specified file format object determines the format type such as CSV, JSON, XML, AVRO, or other format options for data files. | |

File format type Default Value: None | String/Expression/Suggestion | N/A | Specify a predefined file format type to use for loading data into the table. The available file formats include CSV, JSON, XML, and AVRO. This Snap supports only CSV and NONE as file format types when the Data source is Input view. | |

File format option Default value: N/A | String/Expression | N/A | Specify the file format option. Separate multiple options by using blank spaces and commas. You can use various file format options including a binary format which passes through in the same way as other file formats. Learn more: File Format Type Options. Before loading binary data into Snowflake, you must specify the binary encoding format, so that the Snap can decode the string type to binary types before loading it into Snowflake. This can be done by selecting the following binary file format:

However, the file you upload and download must be in similar formats. For instance, if you load a file in HEX binary format, you should specify the HEX format for download as well. When using external staging locations

| |

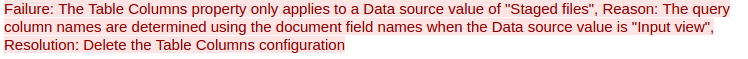

Table Columns | Use this field set to specify the columns to be used in the COPY INTO command. This only applies when the Data source is Staged files. | |||

Columns | String/Expression/Suggestion | N/A | Specify the table columns to use in the Snowflake COPY INTO query. This configuration is valid when the staged files contain a subset of the columns in the Snowflake table. For example, if the Snowflake table contains columns A, B, C, and D, and the staged files contain columns A and D, then the Table Columns field would have two entries with values A and D. The order of the entries should match the order of the data in the staged files. If the Data source is Input view, the Snap displays the following error: | |

Select Query Default Value: N/A | String/Expression | Appears when the Data source is Staged files. | Specify the SELECT statement used to transform the staged data. The SELECT statement transform option enables querying the staged data files by either reordering the columns or loading a subset of table data from a staged file. For example, (OR)

We recommend you not use a temporary stage while loading your data. | |

Encryption type Default Value: None | Dropdown list | N/A | Specify the type of encryption to be used on the data. The available encryption options are:

The KMS Encryption option is available only for S3 Accounts (not for Azure Accounts and GCS) with Snowflake. If Staging Location is set to Internal and Data source is Input view, the Server Side Encryption and Server-Side KMS Encryption options are not supported for Snowflake Snaps. This happens because Snowflake encrypts loading data in its internal staging area and does not allow the user to specify the type of encryption in the PUT API. Learn more: Snowflake PUT Command Documentation. | |

KMS key Default Value: N/A | String/Expression | N/A | Specify the KMS key that you want to use for S3 encryption. Learn more about the KMS key: AWS KMS Overview and Using Server Side Encryption. | |

Buffer size (MB) Default Value: 10 MB | String/Expression | N/A | Specify the amount of data, in MB, to be loaded into the S3 bucket at a time. This property is required when bulk loading to Snowflake using AWS S3 as the external staging area. Minimum value: 5 MB. Maximum value: 5000 MB. S3 allows a maximum of 10000 parts to be uploaded, so configure this property accordingly to optimize the bulk load. Refer to Upload Part for more information on uploading to S3. | |

Manage Queued Queries Default Value: Continue to execute queued queries when the Pipeline is stopped or if it fails | Dropdown list | N/A | Select this property to determine whether the Snap should continue or cancel the execution of the queued Snowflake Execute SQL queries when you stop the pipeline. If you select Cancel queued queries when the Pipeline is stopped or if it fails, then the read queries under execution are canceled, whereas the write type of queries under execution are not canceled. Snowflake internally determines which queries are safe to be canceled and cancels those queries. | |

Additional Options | ||||

On Error Default Value: ABORT_STATEMENT | Dropdown list | N/A | Select an action to perform when errors are encountered in a file. The available actions are:

| |

Error Limit Default Value: 0 | Integer | Appears when you select SKIP_FILE_*error_limit* for On Error. | Specify the error limit for skipping a file. When SKIP_FILE_number is selected for On Error, the file is skipped when the number of errors in it exceeds the specified limit. | |

Error Percentage Limit Default Value: 0 | Integer | Appears when you select SKIP_FILE_*error_percent_limit*% for On Error. | Specify the percentage of errors for skipping a file. When SKIP_FILE_number% is selected for On Error, the file is skipped when the percentage of errors in it exceeds the specified limit. | |

Size Limit Default Value: 0 | Integer | N/A | Specify the maximum size (in bytes) of data to be loaded. At least one file is loaded regardless of the value specified for SIZE_LIMIT unless there is no file to be loaded. A null value indicates no size limit. | |

Purge Default value: Deselected | Checkbox | Appears when the Staging location is External. | Specify whether to purge the data files from the location automatically after the data is successfully loaded. | |

Return Failed Only Default Value: Deselected | Checkbox | N/A | Specify whether to return only files that have failed to load while loading. | |

Force Default Value: Deselected | Checkbox | N/A | Specify if you want to load all files, regardless of whether they have been loaded previously and have not changed since they were loaded. | |

Truncate Columns Default Value: Deselected | Checkbox | N/A | Select this checkbox to truncate column values that are larger than the maximum column length in the table. | |

Validation Mode Default Value: None | Dropdown list | N/A | Select the validation mode for visually verifying the data before loading it. The available options are:

| |

Validation Errors Type Default Value: Full error | Dropdown list | Appears when you select NONE for Validation Mode. | Select one of the following methods for displaying the validation errors:

| |

Rows to Return Default Value: 0 | Integer | Appears when you select RETURN_n_ROWS, RETURN_ERRORS, or RETURN_ALL_ERRORS for Validation Mode. | Specify the number of rows to return. These rows are not loaded into the corresponding table. Instead, the data is validated and the results are returned based on the validation option specified. It can be one of the following values: RETURN_n_ROWS | RETURN_ERRORS | RETURN_ALL_ERRORS | |

Snap Execution

| Dropdown list | N/A | Select one of the three modes in which the Snap executes. Available options are:

| |
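The chunk rollover described for Flush chunk size (in bytes) can be sketched in Python as follows. This is only a model of the rule stated in the field description, under the assumption that any data left in the last chunk is flushed at the end of the input. The function names are hypothetical, and the sketch neither writes files nor contacts Snowflake.

```python
DEFAULT_FLUSH_CHUNK_SIZE = 100_000_000  # bytes; the documented default (100 MB)


def resolve_chunk_size(setting):
    """Fall back to the documented default when the field is left blank."""
    if setting is None or str(setting).strip() == "":
        return DEFAULT_FLUSH_CHUNK_SIZE
    return int(setting)


def plan_chunks(record_sizes, flush_chunk_size):
    """Group record sizes (in bytes) into chunks using the rollover rule.

    A chunk is closed once its size exceeds `flush_chunk_size`.
    Returns the list of chunk sizes in bytes.
    """
    chunks = []
    current = 0
    for size in record_sizes:
        current += size
        if current > flush_chunk_size:
            chunks.append(current)  # chunk would be copied to the stage, then deleted
            current = 0             # a new chunk file starts
    if current:
        chunks.append(current)      # assumption: leftover data is flushed at the end
    return chunks


# Five 40-byte records with a 100-byte limit, purely for illustration.
print(plan_chunks([40, 40, 40, 40, 40], resolve_chunk_size("100")))
```

Because a chunk is closed only after it exceeds the limit, a chunk can be slightly larger than the configured value.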

Instead of building multiple Snaps with interdependent DML queries, we recommend you use the Stored Procedure or the Multi Execute Snaps.

In a scenario where the downstream Snap depends on the data processed by an upstream database Bulk Load Snap, use the Script Snap to add a delay so that the data is available.

For example, when performing create, insert, and delete functions sequentially in a Pipeline, the Script Snap helps to create a delay between the insert and delete functions. Otherwise, the delete function may get triggered before the records are inserted into the table.
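As a rough illustration of the Buffer size (MB) setting described in Snap Settings, the following Python sketch estimates the smallest buffer size that keeps an upload within the 10000-part limit. It assumes that each uploaded part holds at most one buffer of data; the function name is hypothetical.

```python
import math

MAX_S3_PARTS = 10000   # part limit stated in the Buffer size description
MIN_BUFFER_MB = 5      # documented minimum value
MAX_BUFFER_MB = 5000   # documented maximum value


def smallest_buffer_mb(total_size_mb):
    """Smallest Buffer size (MB) that keeps the upload within the part limit.

    Assumes each uploaded part holds at most one buffer of data.
    """
    needed = math.ceil(total_size_mb / MAX_S3_PARTS)
    if needed > MAX_BUFFER_MB:
        raise ValueError("data is too large for the maximum buffer size")
    return max(MIN_BUFFER_MB, needed)


# Roughly 200 GB of data, purely for illustration.
print(smallest_buffer_mb(200_000))
```

Under the same assumption, the default of 10 MB covers uploads of up to about 100,000 MB.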

Troubleshooting

Error | Reason | Resolution |

|---|---|---|

Data can only be read from Google Cloud Storage (GCS) with the supplied account credentials (not written to it). | Snowflake Google Storage Database accounts do not support external staging when the Data source is the Input view. Data can only be read from GCS with the supplied account credentials (not written to it). | Use internal staging if the data source is the input view or change the data source to staged files for Google Storage external staging. |
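Several setting combinations described on this page are unsupported. The following Python sketch collects them into a pre-flight check. The dictionary keys and the `check_settings` function are hypothetical and are not part of any SnapLogic API; the rules come from the field descriptions and the troubleshooting entry above.

```python
def check_settings(settings):
    """Return a list of problems found in a hypothetical settings dict."""
    problems = []
    source = settings.get("data_source")        # "Input view" or "Staged files"
    staging = settings.get("staging_location")  # "Internal" or "External"
    account = settings.get("account_type")      # "S3", "Azure", or "GCS"
    fmt = settings.get("file_format_type", "NONE")
    encryption = settings.get("encryption_type", "None")

    if source == "Input view":
        if account == "GCS" and staging == "External":
            problems.append("GCS accounts do not support external staging with Input view")
        if fmt.upper() not in ("CSV", "NONE"):
            problems.append("Input view supports only CSV and NONE file format types")
        if staging == "Internal" and encryption in (
            "Server Side Encryption",
            "Server-Side KMS Encryption",
        ):
            problems.append("encryption type is not supported for internal staging with Input view")
        if settings.get("table_columns"):
            problems.append("Table Columns applies only when Data source is Staged files")

    if encryption == "Server-Side KMS Encryption" and account != "S3":
        problems.append("KMS encryption is available only for S3 accounts")
    return problems


# The combination from the troubleshooting table above.
print(check_settings({
    "data_source": "Input view",
    "staging_location": "External",
    "account_type": "GCS",
}))
```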

Examples

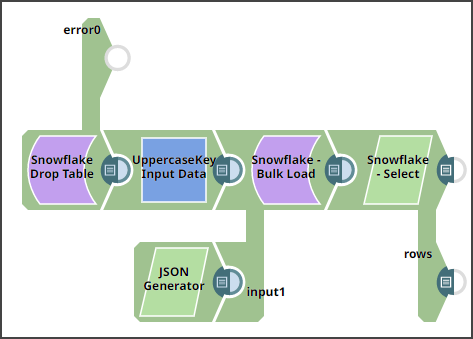

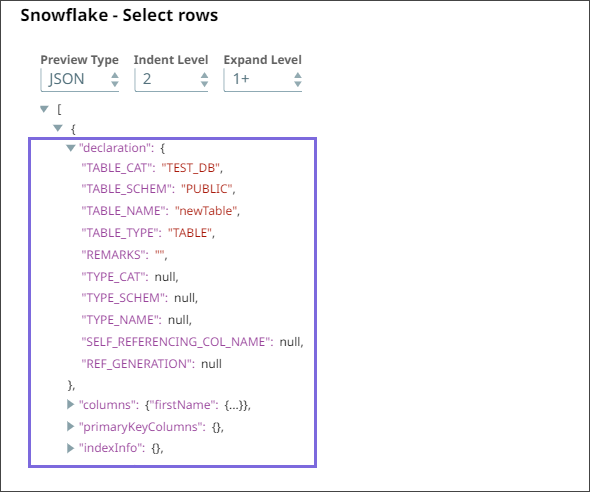

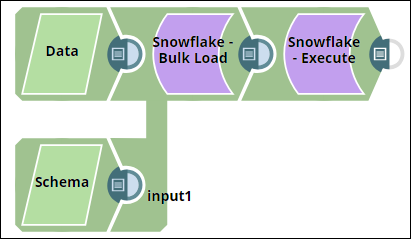

Providing Metadata For Table Using The Second Input View

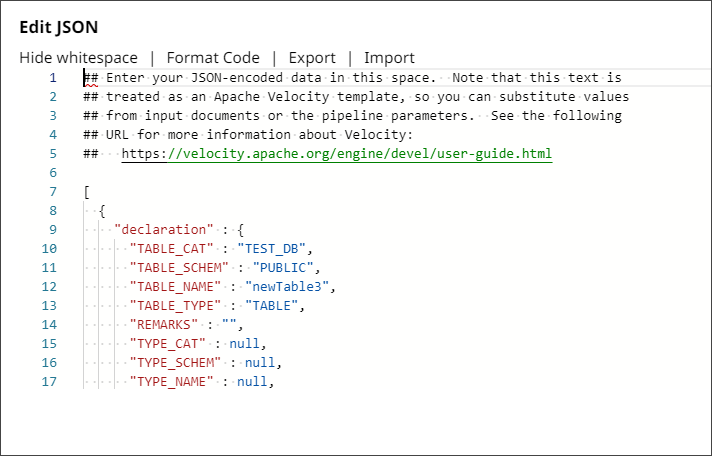

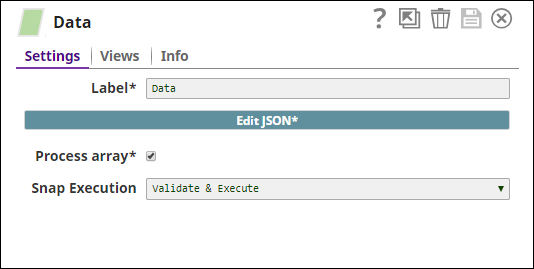

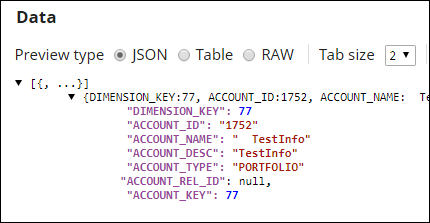

This example Pipeline demonstrates how to provide metadata for the table definition through the second input view, to enable the Bulk Load Snap to create a table according to the definition.

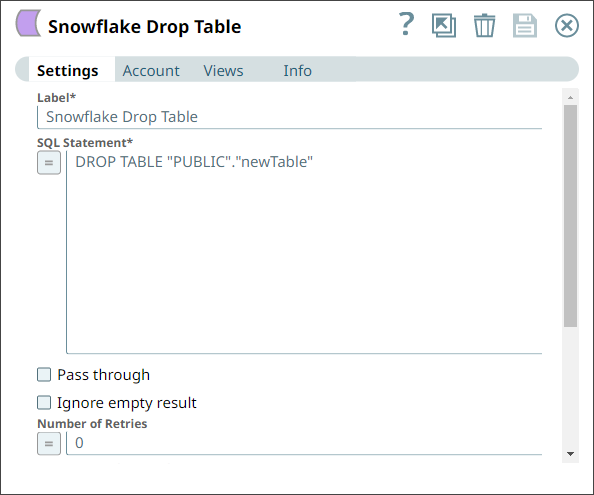

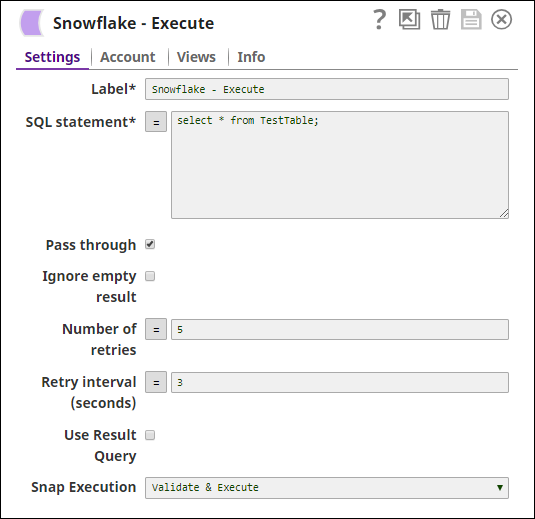

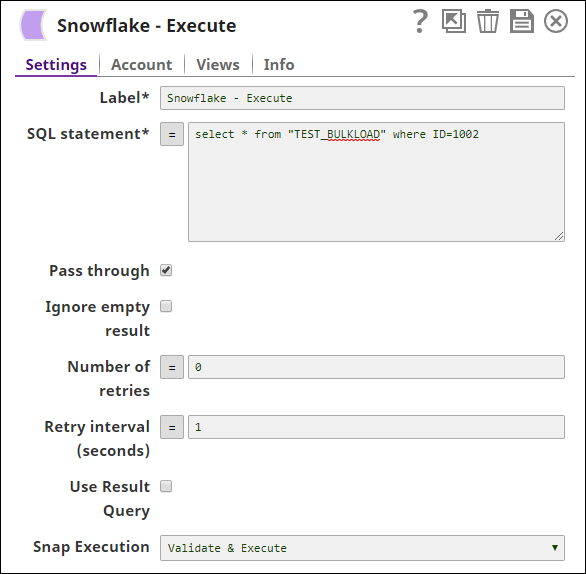

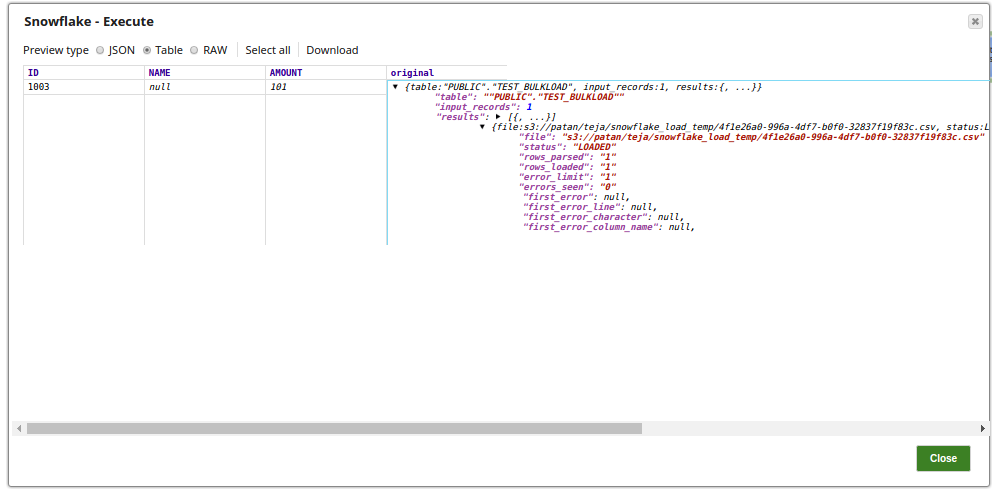

1. Configure the Snowflake Execute Snap as follows to drop the newTable with the DROP TABLE query.

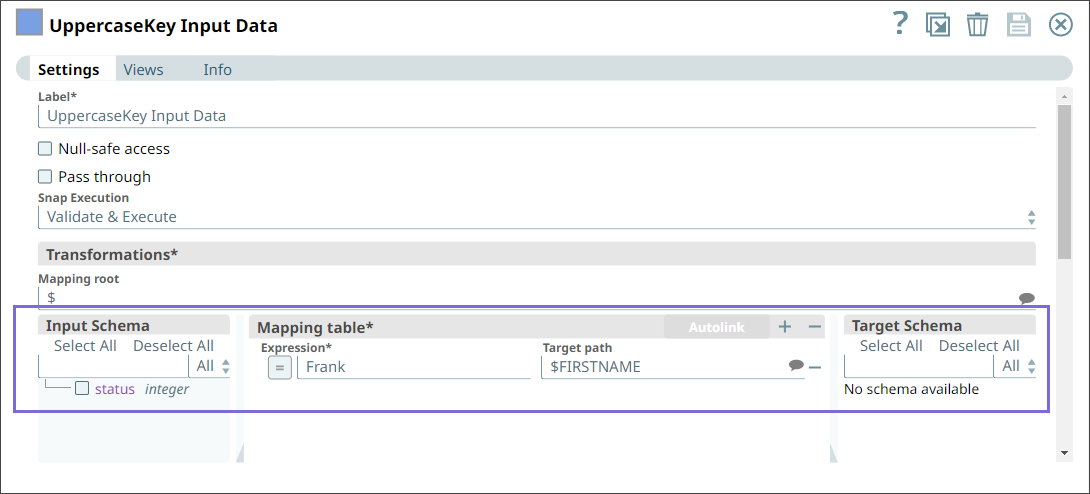

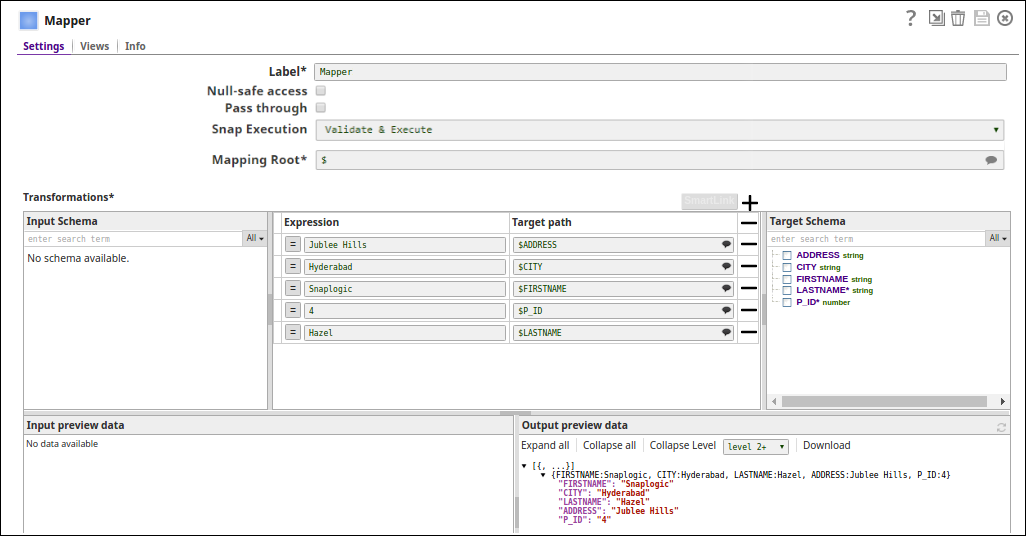

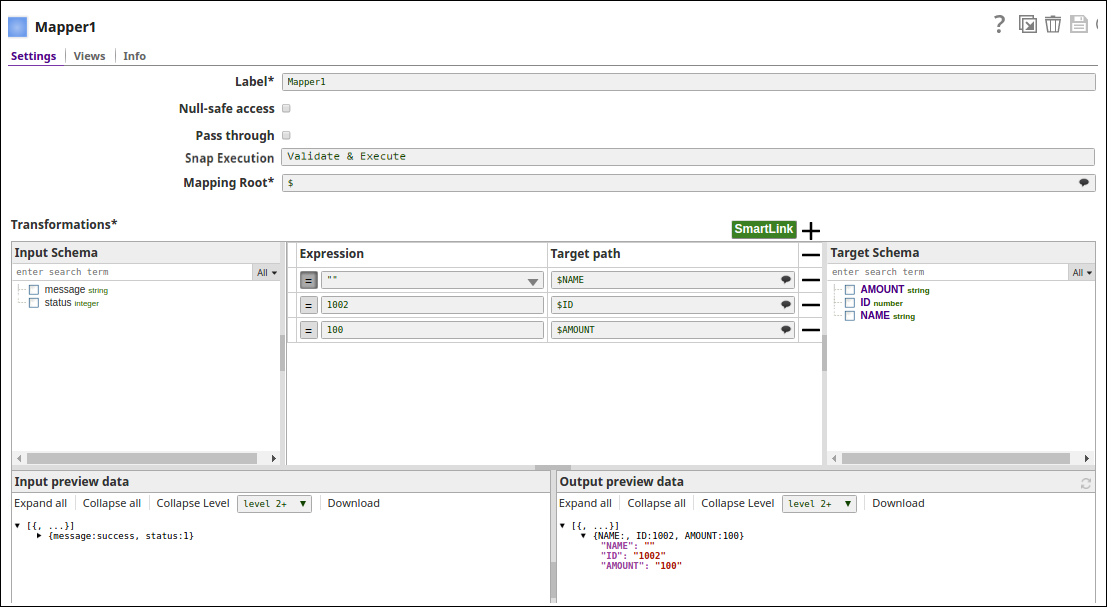

2. Configure the Mapper Snap as follows to pass the input data.

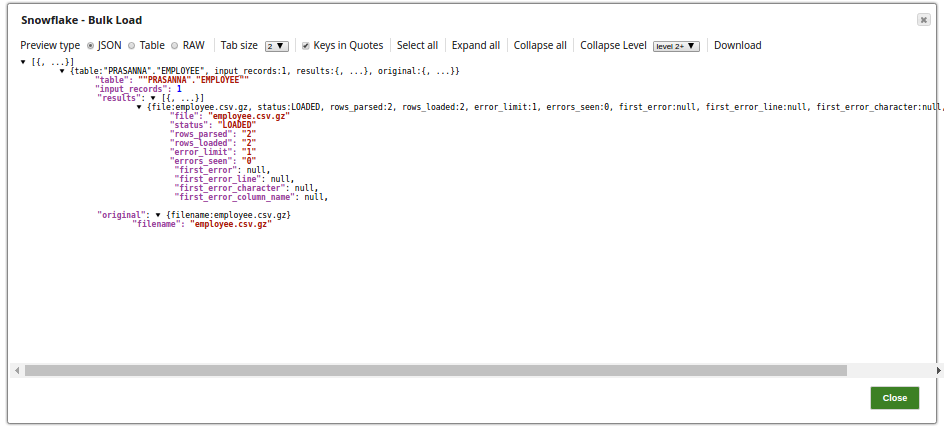

3. Configure the Snowflake Bulk Load Snap with two input views:

a. First input view: Input data from the upstream Mapper Snap.

b. Second input view: Table metadata from JSON Generator. If the target table is not present, a table is created in the database based on the schema from the second input view.

JSON Generator Configuration: Table metadata to pass to the second input view.

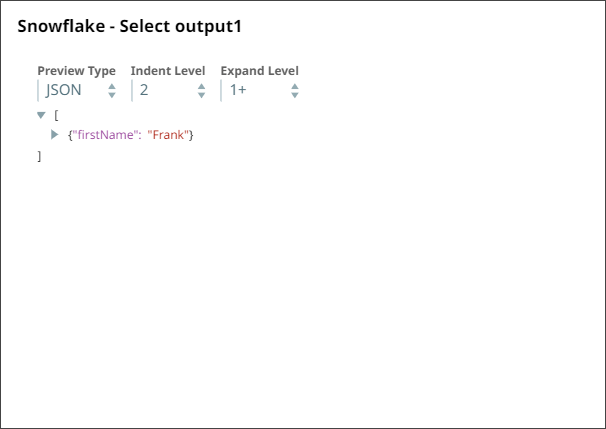

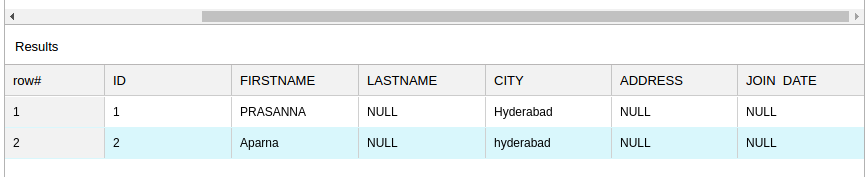

4. Finally, configure the Snowflake Select Snap with two output views.

Output from the first input view. | Output from the second input view: This schema of the target table is from the second output (rows) of the Snowflake Select Snap. |

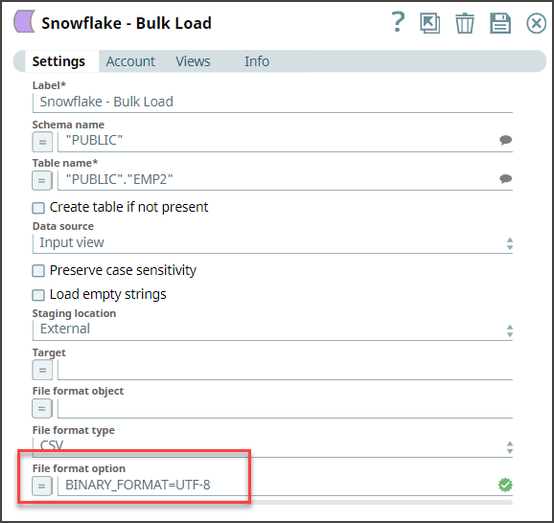

Loading Binary Data Into Snowflake

The following example Pipeline demonstrates how you can convert the staged data into binary data using the binary file format before loading it into the Snowflake database.

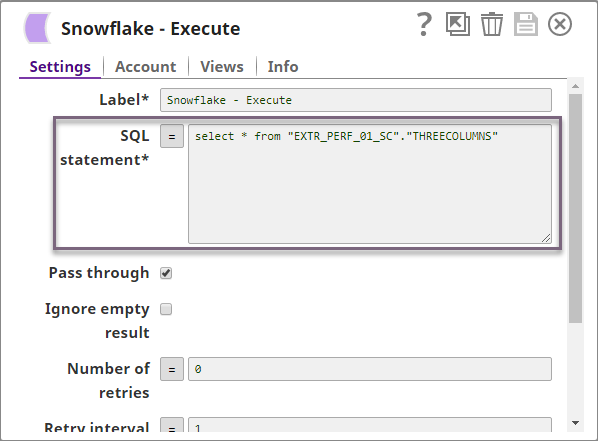

To begin with, configure the Snowflake Execute Snap with this query: select * from "PUBLIC"."EMP2" limit 25. This query reads 25 records from the Emp2 table.

Next, configure the Mapper Snap with the output from the upstream Snap by mapping the employee details to the columns in the target table. Note that the Bio column is of the binary data type and the Text column is of the varbinary data type. Upon validation, the Mapper Snap passes the output with the given mappings (employee details) in the table.

Next, configure the Snowflake - Bulk Load Snap to load the records into Snowflake. We set the File format option as BINARY_FORMAT=UTF-8 to enable the Snap to encode the binary data before loading.

Upon validation, the Snap loads the database with 25 employee records.
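The role of the binary format can be illustrated with a small Python sketch: binary values travel as strings, so the same format must be used to turn them back into bytes. The helper names are hypothetical. HEX and UTF-8 are the formats mentioned on this page; BASE64 is included on the assumption that it is also an accepted binary format.

```python
import base64
import binascii


def encode_binary(data, binary_format):
    """Represent raw bytes as a string in the given binary format."""
    if binary_format == "HEX":
        return binascii.hexlify(data).decode("ascii").upper()
    if binary_format == "BASE64":
        return base64.b64encode(data).decode("ascii")
    if binary_format == "UTF-8":
        return data.decode("utf-8")
    raise ValueError("unsupported binary format: %s" % binary_format)


def decode_binary(text, binary_format):
    """Turn a string back into raw bytes using the given binary format."""
    if binary_format == "HEX":
        return binascii.unhexlify(text)
    if binary_format == "BASE64":
        return base64.b64decode(text)
    if binary_format == "UTF-8":
        return text.encode("utf-8")
    raise ValueError("unsupported binary format: %s" % binary_format)


payload = b"Snap"
as_hex = encode_binary(payload, "HEX")
print(as_hex)
print(decode_binary(as_hex, "HEX") == payload)     # same format on both sides
print(decode_binary(as_hex, "BASE64") == payload)  # mismatched formats
```

Decoding with a format other than the one used for encoding yields different bytes, which is why the format used for loading should match the one used for downloading.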

Output Preview | Data in Snowflake |

|---|---|

Finally, connect the JSON Formatter Snap to the Snowflake - Bulk Load Snap to transform the binary data to JSON format, and write this output to S3 using the File Writer Snap.

Transforming Data Using Select Query Before Loading Into Snowflake

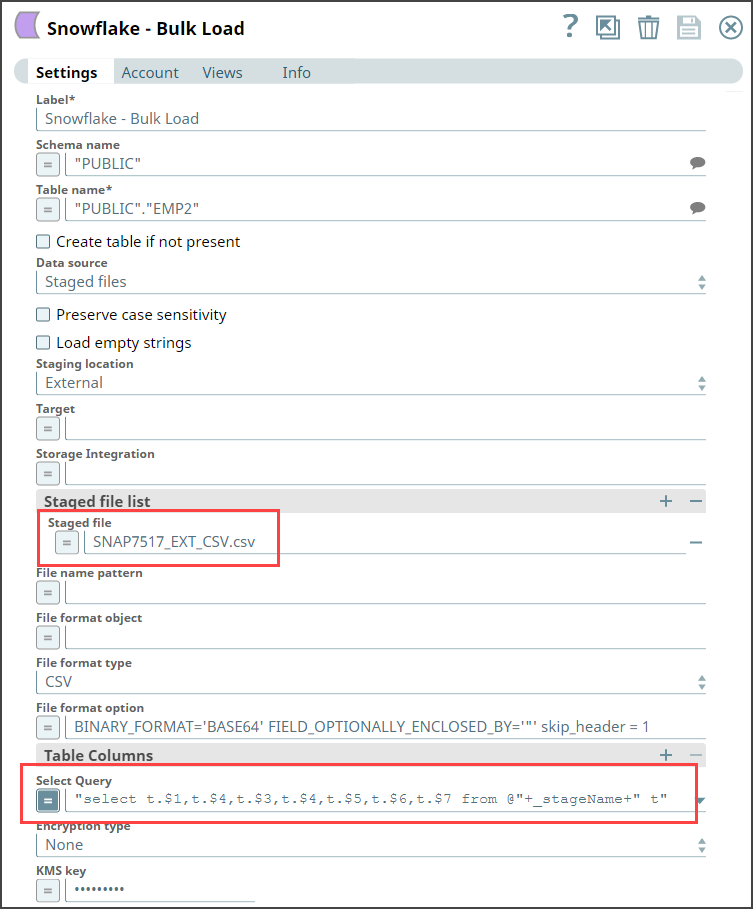

The following example Pipeline demonstrates how you can reorder the columns using the SELECT statement transform option before loading data into the Snowflake database. We use the Snowflake - Bulk Load Snap to accomplish this task.

Prerequisite: You must create an internal or external stage in Snowflake before you transform your data. This stage is used for loading data from source files into the tables of Snowflake database.

To begin with, we create a stage using a query in the following format. Snowflake supports both internal (Snowflake) and external (Microsoft Azure and AWS S3) stages for this transformation.

"CREATE STAGE IF NOT EXISTS "+_stageName+" url='"+_s3Location+"' CREDENTIALS = (AWS_KEY_ID='string' AWS_SECRET_KEY='string') "

This query creates an external stage in Snowflake pointing to the S3 location with AWS credentials (Key ID and Secret Key).
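For readers who want to see what the expression above evaluates to, the following Python sketch assembles the same statement from plain values. The function name and all sample values are hypothetical placeholders; real credentials should never be hard-coded.

```python
def build_create_stage(stage_name, s3_location, key_id, secret_key):
    """Assemble the CREATE STAGE statement the same way the expression does."""
    return (
        "CREATE STAGE IF NOT EXISTS " + stage_name
        + " url='" + s3_location + "'"
        + " CREDENTIALS = (AWS_KEY_ID='" + key_id + "'"
        + " AWS_SECRET_KEY='" + secret_key + "')"
    )


# Placeholder values only; they do not refer to a real bucket or account.
statement = build_create_stage(
    "MYSCHEMA.MYS3STAGE",
    "s3://example-bucket/data/",
    "<key_id>",
    "<secret_key>",
)
print(statement)
```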

We recommend that you do not use a temporary stage to prevent issues while loading and transforming your data.

Now, add the Snowflake - Bulk Load Snap to the canvas and configure it to transform the data in the staged file SNAP7517_EXT_CSV.csv by providing the following query in the Select Query field:

"select t.$1,t.$4,t.$3,t.$4,t.$5,t.$6,t.$7 from @"+_stageName+" t"

You must provide the stage name along with the schema name in the Select Query; otherwise, the Snap displays an error. For instance:

SELECT t.$1,t.$4,t.$3,t.$4,t.$5,t.$6,t.$7 from @mys3stage t displays an error.

SELECT t.$1,t.$4,t.$3,t.$4,t.$5,t.$6,t.$7 from @<Schema Name>.<stagename> t executes correctly.
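The schema-qualification rule above can be checked before the query is handed to the Snap. The following Python sketch builds a Select Query from column positions and rejects a stage name that lacks a schema. The function name, the simple identifier pattern, and the sample stage name are assumptions made for illustration.

```python
import re

# Simplified pattern for <schema>.<stage>; quoted identifiers are not covered.
_QUALIFIED_STAGE = re.compile(
    r"^[A-Za-z_][A-Za-z0-9_$]*\.[A-Za-z_][A-Za-z0-9_$]*$"
)


def build_select_query(stage, positions):
    """Build a Select Query over a schema-qualified stage.

    `positions` lists the staged-file column positions in the desired order.
    """
    if not _QUALIFIED_STAGE.match(stage):
        raise ValueError("stage must be given as <schema>.<stage>")
    columns = ",".join("t.$%d" % p for p in positions)
    return "select %s from @%s t" % (columns, stage)


# Same column positions as the example query; the stage name is hypothetical.
query = build_select_query("PUBLIC.MYS3STAGE", [1, 4, 3, 4, 5, 6, 7])
print(query)
```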

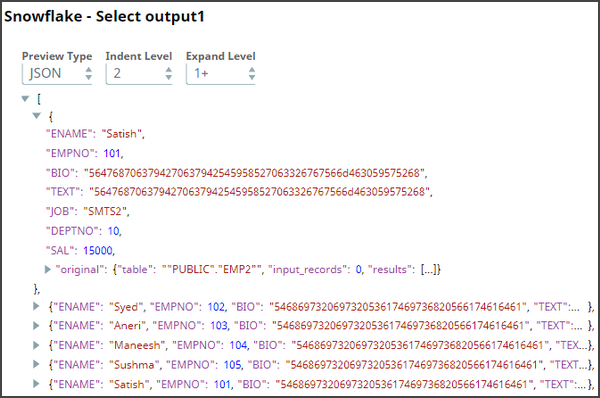

Next, connect a Snowflake Select Snap with the Snowflake - Bulk Load Snap to select the data from the Snowflake database. Upon validation you can view the transformed data in the output view.

Downloads

Important steps to successfully reuse Pipelines

- Download and import the Pipeline into SnapLogic.

- Configure Snap accounts as applicable.

- Provide Pipeline parameters as applicable.

