In this article

Overview

Salesforce Read is a Read-type Snap that provides the ability to retrieve all records for all fields of the Salesforce object from Salesforce by defining the Salesforce object name.

Prerequisites

None.

Support for Ultra Pipelines

Works in Ultra Task Pipelines.

Limitations

- When using Primary Key (PK) Chunking mode in this Snap, the output document schemas in preview mode and execution mode may differ. For more information, see the Note under PK Chunking.

When you run more than 10 Salesforce Snaps simultaneously, the following error is displayed

“Cannot get input stream from next records URL" or "INVALID_QUERY_LOCATOR"

Workaround: You must ensure not to use more than 10 Salesforce Snaps simultaneously. because this might lead to opening of more than 10 query cursors in Salesforce. See Salesforce Knowledge Article for more information.

Known Issues

None.

Snap Views

| View Type | View Format | Number of Views | Examples of Upstream and Downstream Snaps | Description |

|---|---|---|---|---|

| Input | Document | Min: 0 Max: 1 | Mapper Copy | This Snap has at most one input view. |

| Output | Document | Min: 1 Max: 2 | File Writer | The snap allows you to add an optional second output view that exposes the schema of the target object as the output document. |

| Error | Document | This Snap has exactly one error view and produces zero or more documents in the view. | N/A | The error view contains error, reason, resolution and stack trace. For more information, see Handling Errors with an Error Pipeline |

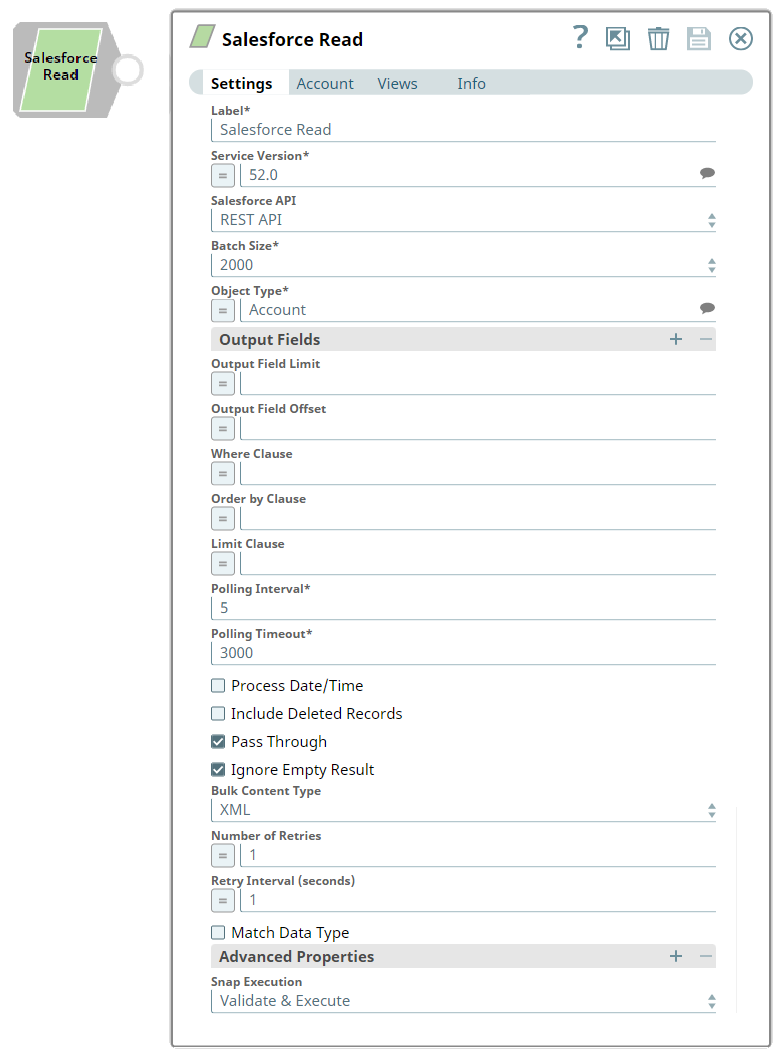

Snap Settings

Field | Field Type | Description | |||||||||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

Label* | String | Specify the name for the Snap. You can modify this to be more specific, especially if you have more than one of the same Snap in your Pipeline. | |||||||||||||||||||||

Salesforce API* | String/Expression/Suggestion | The Salesforce API mode to use during the Pipeline execution. | |||||||||||||||||||||

Service Version* | Dropdown list | Specify the version number associated with the Salesforce service that you want to connect to. Alternatively, click the Suggestion icon to fetch the list of versions and select the desired version. | |||||||||||||||||||||

Batch Size* | Dropdown list | The number of records to process in each batch for downloading large query results. Each batch read requires an API call against Salesforce to retrieve the set of records.

Default Value: 2000 The Snap validates using the Bulk API, even if you configure the Snap to use Bulk API with Primary Key (PK) Chunking. | |||||||||||||||||||||

Object Type* | String/Expression/Suggestion | This property enables you to define the name of the Salesforce object, such as Account. | |||||||||||||||||||||

Output Fields | Use this field set to enter a list of field names for the SOQL SELECT statement. If empty, the Snap selects all fields. | ||||||||||||||||||||||

Output Field Limit | String/Expression | The number of output fields to return from the Salesforce object. | |||||||||||||||||||||

Output Field Offset | String/Expression | Defines a starting field index for the output fields. This is where the result set should start. Default Value: N/A If you enter an offset value that is greater than 1, the first field "Id" is always returned, but the following fields start from the offset position. This is because the first field is the only unique identifier. | |||||||||||||||||||||

Where Clause | String/Expression | Enter the WHERE clause for the SOQL SELECT statement. Do not include the word WHERE. Default Value: N/A Using quotes in field names values

| |||||||||||||||||||||

| Order By Clause | String/Expression | Enter the ORDER BY clause that you want to use with your SOQL SELECT Query. PK Chunking does not support the ORDER BY clause. Default Value: N/A | |||||||||||||||||||||

| Limit Clause | String/Expression | Enter the LIMIT BY clause that you want to use with your SOQL SELECT Query. PK Chunking does not support the LIMIT clause. Default Value N/A | |||||||||||||||||||||

Polling Interval* | String | Define the polling interval in seconds for the Bulk API read execution. At each polling interval, the Snap checks the status of the Bulk API read batch processing. | |||||||||||||||||||||

Polling Timeout* | String | This property allows you to define the polling timeout in seconds for the Bulk API read batch execution. If the timeout occurs while waiting for the completion of the read batch execution, the Snap throws a Snap Execution Exception. | |||||||||||||||||||||

Process Date/time | Checkbox | All date/time fields from Salesforce.com are retrieved as string type. If this property is unchecked, the Snap sends these fields without any conversion. | |||||||||||||||||||||

Include Deleted Records | Checkbox | If checked, the query result includes deleted records. This feature is supported in REST API version 29.0 or later and Bulk API version 39.0 or later. | |||||||||||||||||||||

Pass Through | Checkbox | Select this checkbox to pass the input document to the output view under the key ' | |||||||||||||||||||||

Ignore Empty Results | Checkbox | Select this checkbox to ignore empty results; no document will be written to the output view when the operation does not produce any result. If this property is not selected and Pass Through is selected, the input document will be passed through to the output view. This property is ignored in Bulk API mode. | |||||||||||||||||||||

Bulk Content Type | Dropdown list | Select the content type for Bulk API: JSON or XML. The numeric type field values will be read as numbers in JSON content type, and as strings in XML content type, in the output documents. JSON content type for Bulk API is available in Salesforce API version 36.0. In REST API, the number-type field values will always be read as numbers. If the Bulk API has been selected along with 100,000/ 250,000 as batch size value, the content-type will always be CSV regardless of the value set in this property.

| |||||||||||||||||||||

| Number Of Retries | String/Expression | Specify the maximum number of retry attempts in case of a network failure.

Default Value: 1 Minimum value: 0

| |||||||||||||||||||||

| Retry Interval (seconds) | String/Expression | Specifies the minimum number of seconds for which the Snap must wait before attempting recovery from a network failure. Default Value: 1 Minimum value: 0 | |||||||||||||||||||||

Match Data Type | Checkbox | If checked, the data types of the Bulk API results are the same as in REST API. This property applies if the content type is not JSON in Bulk API. In Bulk API, Salesforce.com returns all values as strings if the Bulk content type is XML or Batch size is for PK Chunking. If this property is checked, the Snap attempts to convert strings values to the corresponding data types if the original data type is one of boolean, integer, double, currency, and percent. Default Value: Not selected | |||||||||||||||||||||

Advanced Properties | Use this field set to define additional advanced properties that you want to add to the Snap's settings. Additional advanced properties are not required by default, and the field-set represents an empty table property. Click to add an advanced property. This field set contains the following fields:

| ||||||||||||||||||||||

Properties | Dropdown list | You can use one or more of the following properties in this field:

| |||||||||||||||||||||

| Values | String | The value that you want to associate with the property selected in the corresponding Properties field. The default values for the expected properties are:

| |||||||||||||||||||||

Snap Execution | Dropdown list | Page lookup error: page "Anaplan Read" not found. If you're experiencing issues please see our Troubleshooting Guide. Default Value: Validate & Execute | |||||||||||||||||||||

Temporary Files

During execution, data processing on Snaplex nodes occurs principally in-memory as streaming and is unencrypted. When larger datasets are processed that exceeds the available compute memory, the Snap writes Pipeline data to local storage as unencrypted to optimize the performance. These temporary files are deleted when the Snap/Pipeline execution completes. You can configure the temporary data's location in the Global properties table of the Snaplex's node properties, which can also help avoid Pipeline errors due to the unavailability of space. For more information, see Temporary Folder in Configuration Options.PK Chunking

PK chunking splits bulk queries on very large tables into chunks based on the record IDs, or primary keys, of the queried records. Each chunk is processed as a separate batch that counts toward your daily batch limit. PK chunking is supported for the following objects: Account, Campaign, CampaignMember, Case, Contact, Lead, LoginHistory, Opportunity, Task, User, and custom objects.

PK chunking works by adding record ID boundaries to the query with a WHERE clause, limiting the query results to a smaller chunk of the total results. The remaining results are fetched with additional queries that contain successive boundaries. The number of records within the ID boundaries of each chunk is referred to as the chunk size. The first query retrieves records between a specified starting ID and the starting ID plus the chunk size, the next query retrieves the next chunk of records, and so on. Since Salesforce.com appends a WHERE clause to the query in the PK Chunking mode, if SOQL Query has LIMIT clause in it, the Snap will submit a regular bulk query job without PK Chunking.

For more details on the PK Chunking, refer to PK Chunking Header.

If 100,000 or 250,000 is selected for Batch size, the value is used as a chunk size in the PK Chunking. Please note the chunk size applies to the number of records in the queried table rather than the number of records in the query result. For example, if the queried table has 10 million records and 250,000 is selected for Batch size, 40 batches are created to execute the bulk query job. One additional control batch is also created and it does not process any record. The status of the submitted bulk job and its batches can be monitored by logging onto the your Salesforce.com account in a web browser and going to Setup > Administration Setup > Monitoring > Bulk Data Load Jobs.

PK Chunking requires Service version 28.0 or later. Therefore, if 100,000 or 250,000 is selected for Batch size and Service version is older than 28.0, the Snap will submit a regular bulk query job without PK Chunking.

The output document schemas in preview mode (validation) and execution mode for this Snap may differ when using Primary Key (PK) Chunking mode. This is because the Snap intentionally generates the output preview using regular Bulk API instead of PK Chunking, to reduce the costs involved in the PK Chunking operation.

Salesforce recommends the use of PK Chunking if the target Salesforce object (table) is relatively large (for example, more than few 100,000 records).

Examples

Pipeline: Salesforce.com Data to a File: This Pipeline reads data using a Salesforce read and writes it to a file.

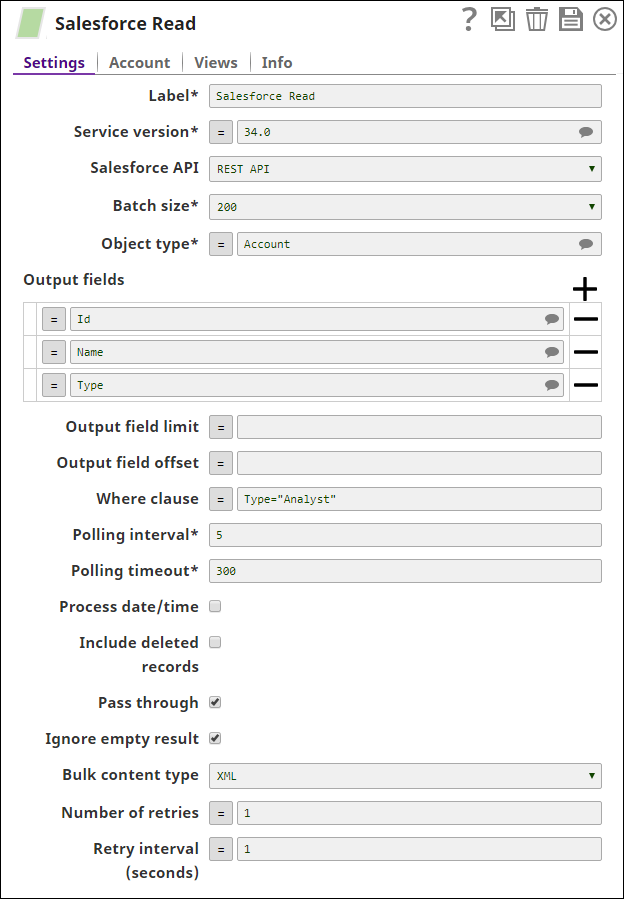

Reading Records from an Object

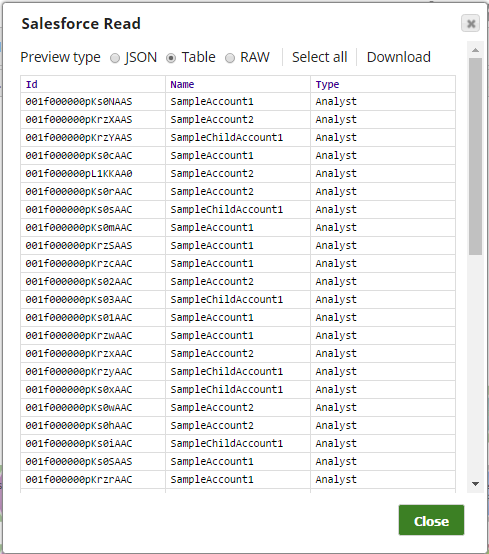

The following Salesforce Read Snap shows how the Snap is configured and how the object records are read. The Snap reads records from the Account object, and retrieves values for the fields, Id, name, & type where the type field value is Analyst:

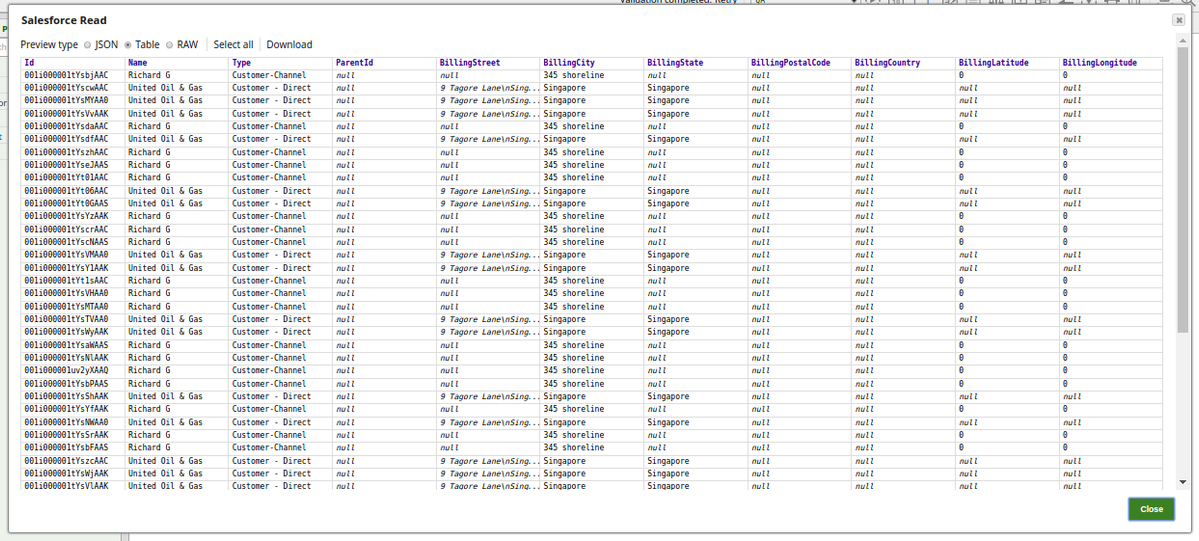

Successful execution of the Snap gives the following preview:

Reading Records in Bulk

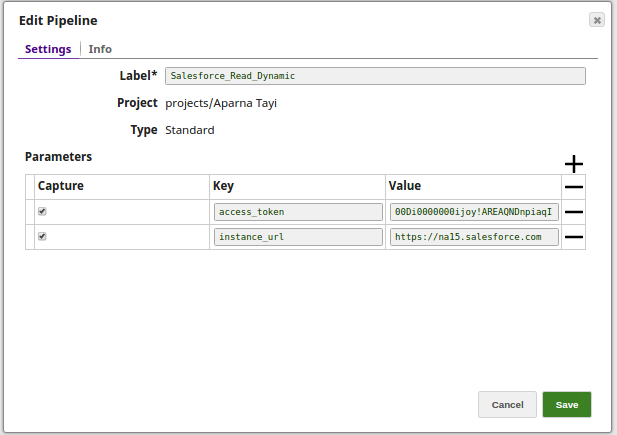

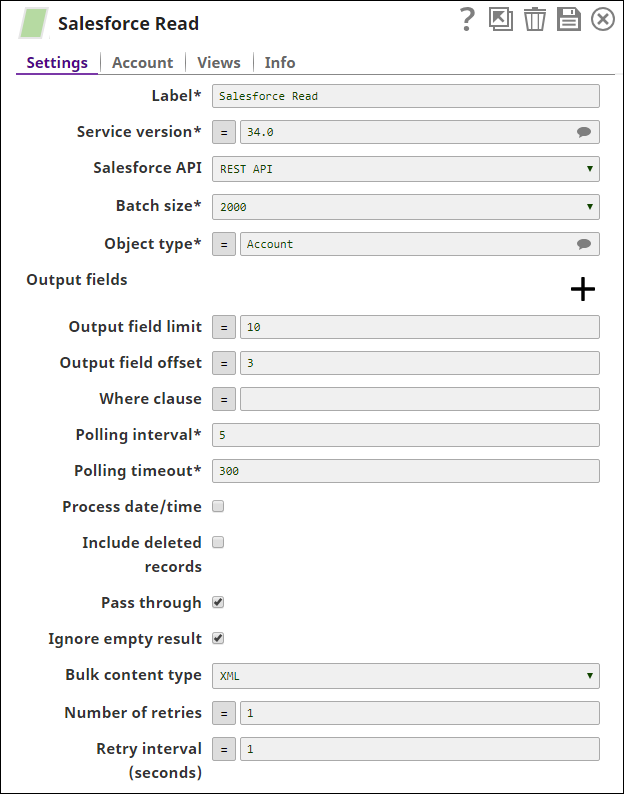

The Salesforce Read Snap reads the records from the Standard Object, Account, and retrieves values for the 10 output fields (Output field limit) starting from the 3rd field (Output field offset). Additionally, we are passing the values dynamically for the Access token and the Instance URL fields in the Account settings of the Snap by defining the respective values in the pipeline parameters.

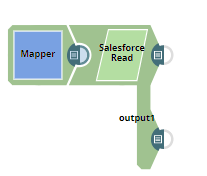

1. The Salesforce Read pipeline.

2. The Key and Value parameters are assigned using the Edit Pipeline property on the designer.

For this Pipeline, define the two pipeline parameters:

- access_Token

- instance_URL

3. The Salesforce Read Snap reads the records from the Standard object, Account, to the extent of 10 output fields starting from the 3rd record(by defining the properties- Output field limit and Output field offset with the values 10 and 3 respectively).

4. Create a dynamic account and toggle (enable) the expressions for Access Token and Instance URL properties in order to pass the values dynamically.

Set Access token to _access_token and Instance URL to _instance_url. Note that the values are to be passed manually and are not suggestible.

5. Successful execution of the pipeline displays the below output preview:

Using Second Output View

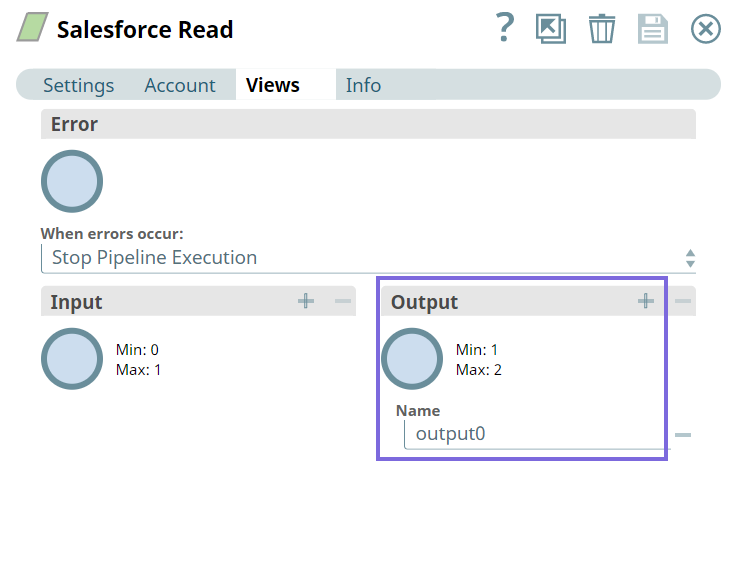

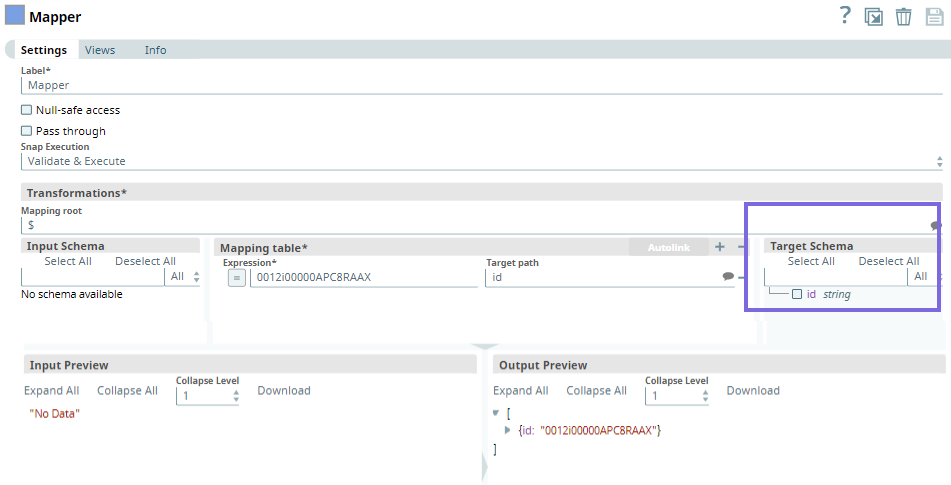

This example Pipeline demonstrates how you can add an optional second output view that exposes the schema of the target object as the output document. To this end, we configure the Pipeline using the Mapper and Salesforce Read Snaps.

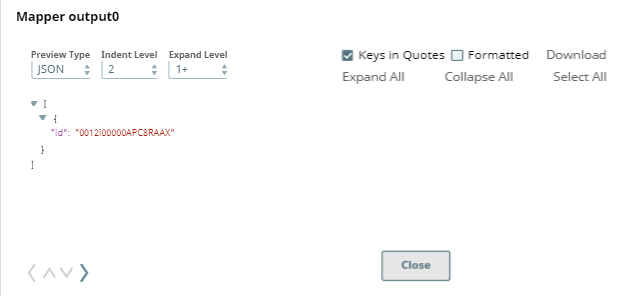

First, we configure the Mapper Snap as follows. Upon validating the Snap, the target schema is populated in the Mapper Snap. Once this is available, we define the target path variables from the target schema.

Upon validation, the following output is generated in the Snap's preview.

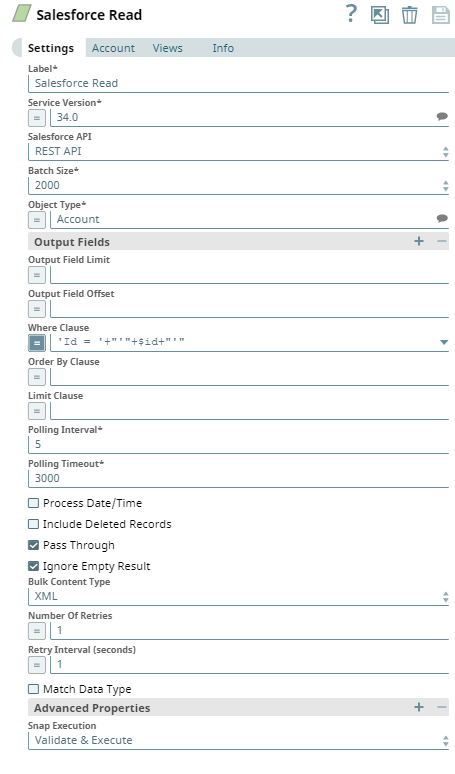

Next, we configure the Salesforce Read Snap to read the specified records.

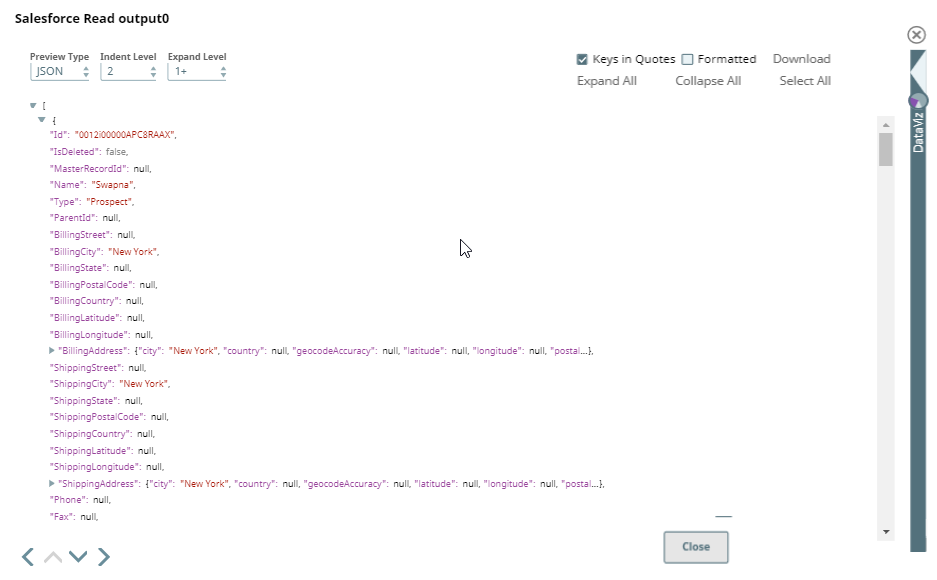

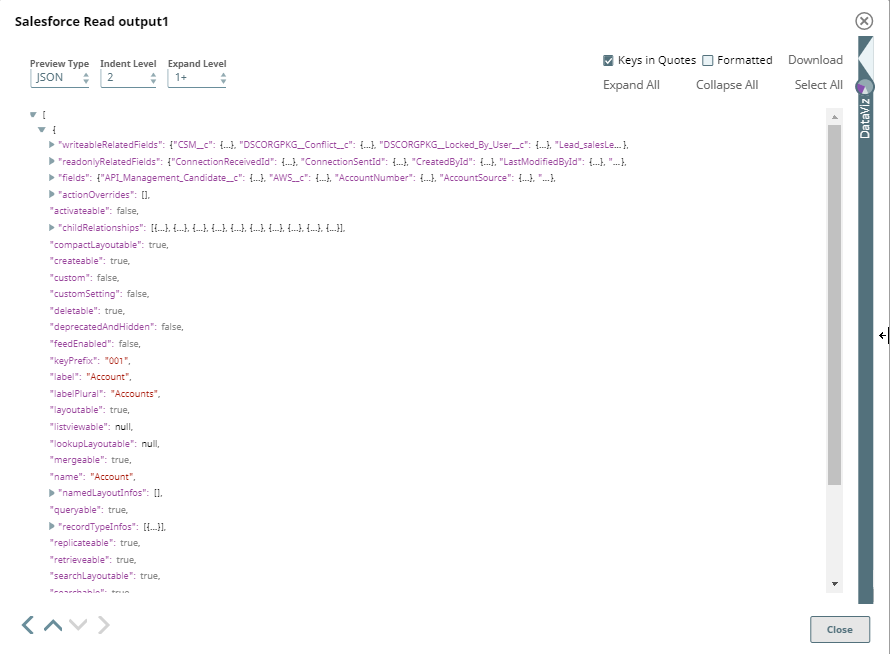

Upon validation, we can see the following outputs in both the output views of the Snap.

Output0 (default)

Output 1

.png?version=1&modificationDate=1489678060000&cacheVersion=1&api=v2)

.png?version=1&modificationDate=1489678265342&cacheVersion=1&api=v2&width=650&height=438)