Kickstarter Project Success Prediction

On this Page

Problem Scenario

In these days, crowdfunding platforms are very popular for innovators and people with cool ideas to raise funds from the public. Only on Kickstarter (one of the most popular online crowdfunding platforms), more than 14,000,000 backers (people who invest in projects) have funded almost 150,000 projects. At each moment, almost 4,000 projects are live to receive funding from the public. There are a lot of crowdfunding projects that succeed and fail. The success rate of a project depends on a lot of factors. It will be great to find a way to estimate and improve the success rate of future projects.

Description

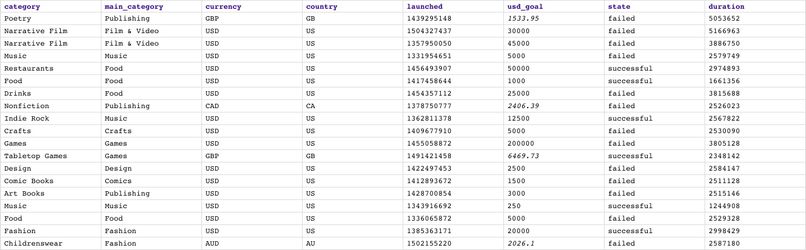

Out of curiosity, we want to try to use Machine Learning algorithms to predict whether the projects are going to succeed or fail. If we succeed in doing this, we should be able to figure out the best way to improve the success rate of future projects. We chose Kickstarter because of the large number of projects spanning over years. There are a lot of open datasets you can find on the internet and we got one from here. This dataset contains over 300,000 projects; however, it only contains general information about projects including title, category, currency, country, goal, pledge, important dates, and state. There is a lot more useful information you can add to improve the accuracy such as description, keywords, activities, competitors, patents, team, and company reputation. For demonstration purpose, we only considered 20,000 projects. The screenshot below shows the preview of this dataset.

Objectives

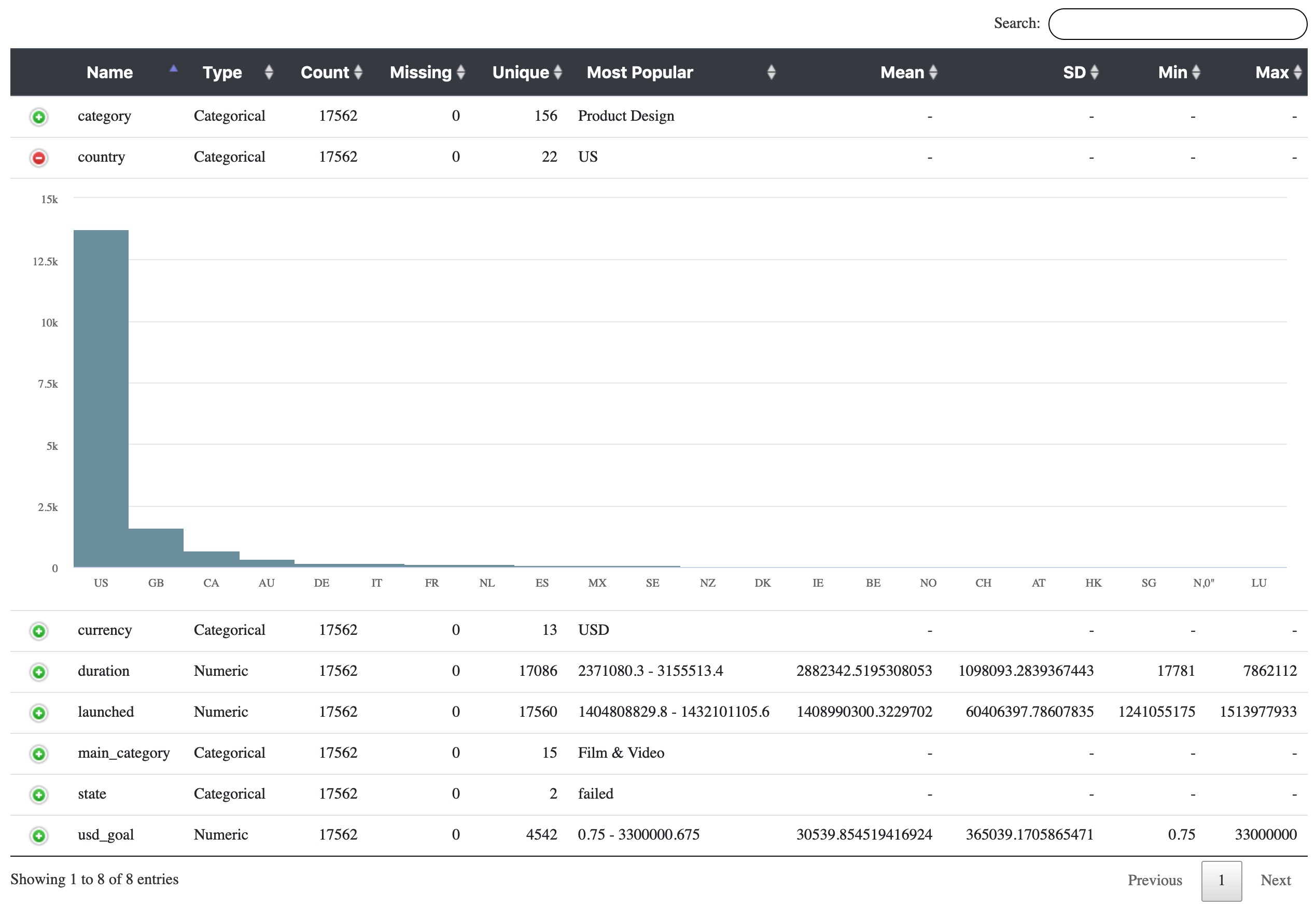

- Profiling: Use Profile Snap from ML Analytics Snap Pack to get statistics of this dataset.

- Data Preparation: Perform data preparation on this dataset using Snaps in ML Data Preparation Snap Pack.

- AutoML: Use AutoML Snap from ML Core Snap Pack to build models and pick the one with the best performance.

- Cross Validation: Use Cross Validator (Classification) Snap from ML Core Snap Pack to perform 10-fold cross validation on various Machine Learning algorithms. The result will let us know the accuracy of each algorithm in the success rate prediction.

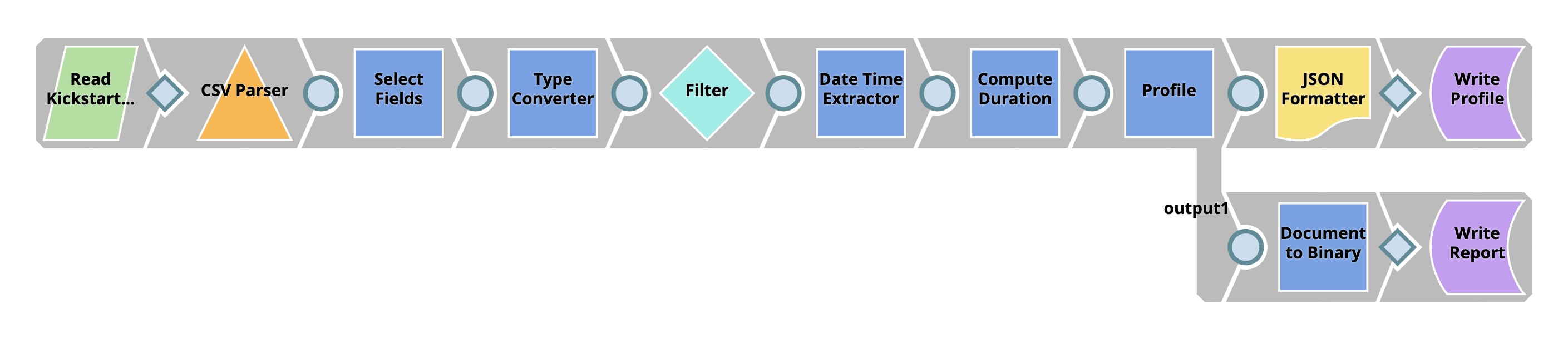

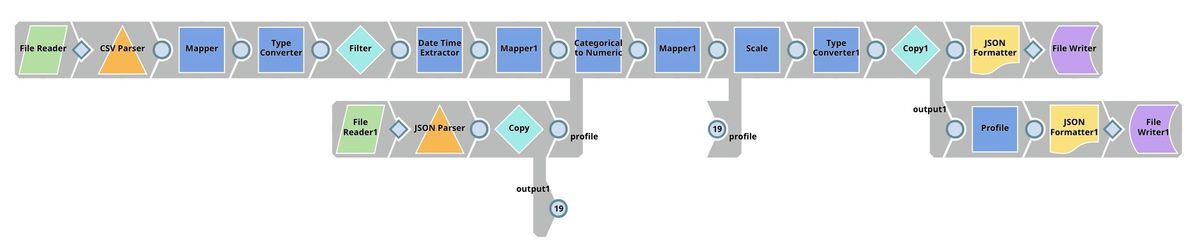

We are going to build 5 Pipelines: Profiling, Data Preparation, Data Modelling and 2 Pipelines for Cross Validation with various algorithms. Each of these Pipelines is described in the Pipelines section below.

Pipelines

Profiling

In order to get useful statistics, we need to transform the data a little bit.

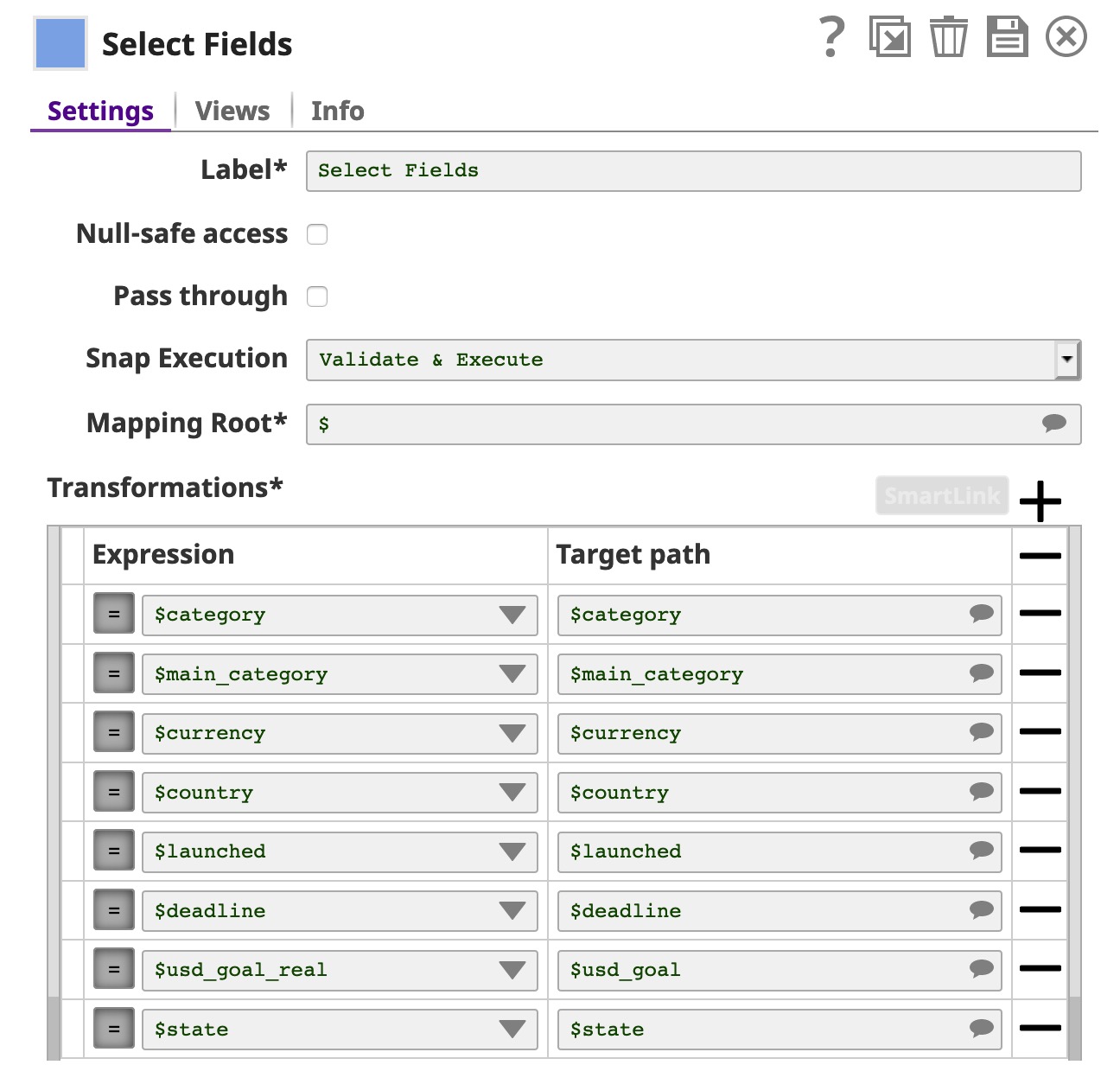

We use the first Mapper Snap (Select Fields) to select and rename fields.

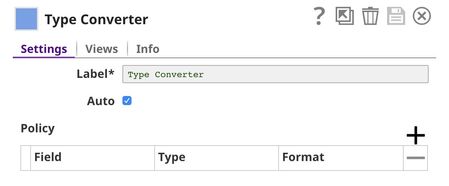

Then, we use Type Converter Snap to automatically derive types of data.

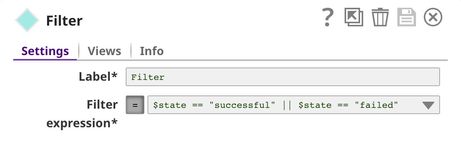

Since we only focus on successful and failed projects, we use Filter Snap to filter out live, canceled, and projects with another status.

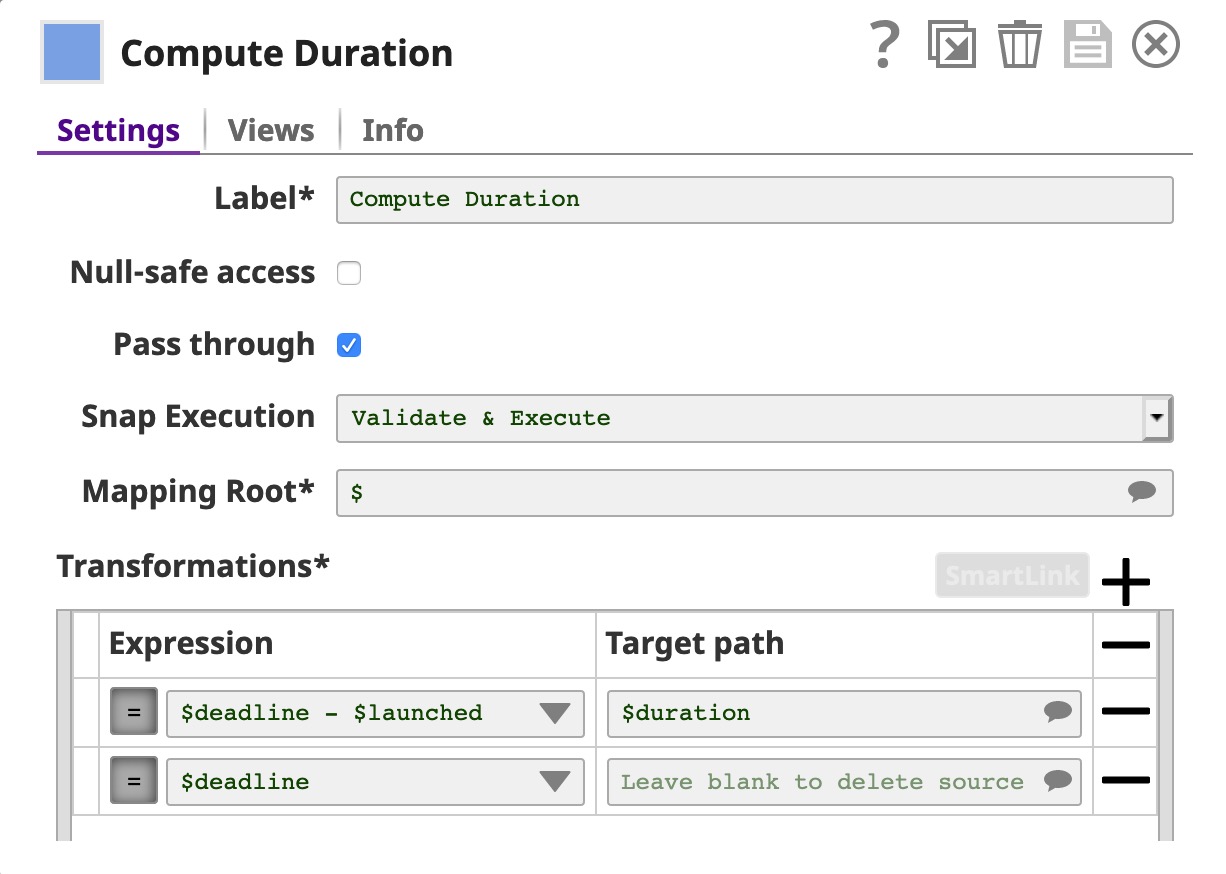

The Date Time Extractor Snap is used to convert the launch date and the deadline to an epoch which are used to compute the duration in the Mapper Snap (Compute Duration). With the Pass through in the Mapper Snap, all input fields will be sent to the output view along with the $duration. However, we drop $deadline here.

At this point, the dataset is ready to be fed into the Profile Snap.

Finally, we use Profile Snap to compute statistics and save on SLFS in JSON format.

The Profile Snap has 2 output views. The first one is converted into JSON format and saved as a file. The second one is an interactive report which is shown below.

Click here to see the statistics generated by the Profile Snap in JSON format.

Data Preparation

In this Pipeline, we want to transform the raw dataset into a format that is suitable for applying Machine Learning algorithms.

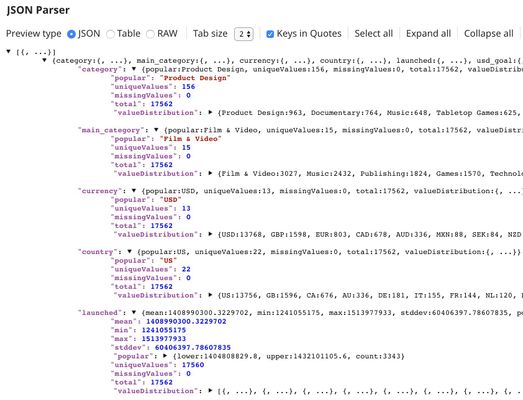

The first 7 Snaps in the top flow are the same as in the previous Pipeline. We use File Reader1 Snap together with JSON Parser to read the profile (data statistics) generated in the previous Pipeline. The picture below shows the output of JSON Parser Snap. Product design is the category with the most number of projects. USD is clearly the most popular currency. The country with the most number of projects is the USA.

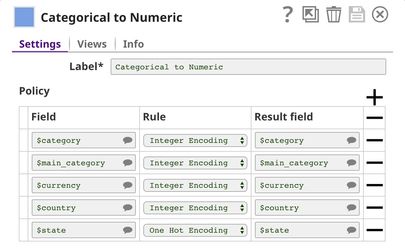

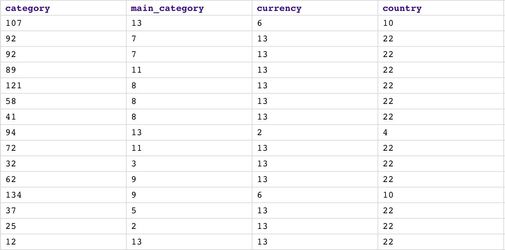

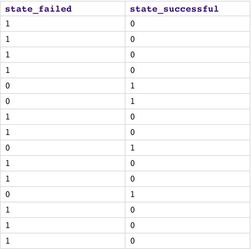

We then use Categorical to Numeric Snap to encode categorical fields into numeric using integer encoding and one hot encoding. As you can see, each category is now represented by a number. For the state, we use one hot encoding so we get 2 fields: one for success and another one for failed.

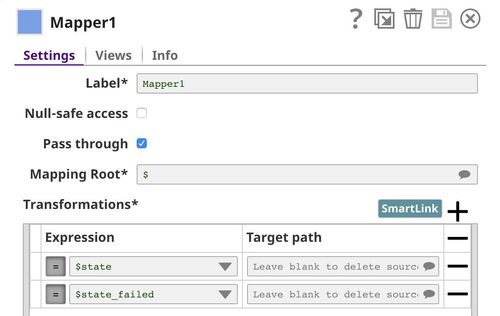

The Mapper1 Snap is used to remove $state and $state_failed.

Then, we use Scale Snap to scale numeric fields into the range from 0 to 1. Having data in the same range will help in analysis, visualization, etc.

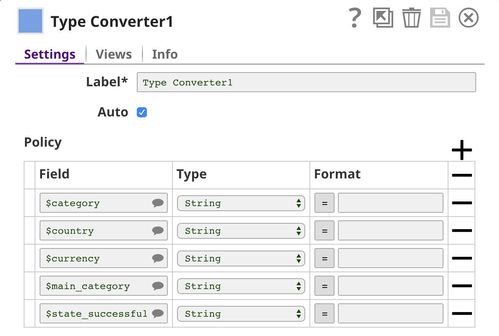

In the end, we want to make sure that categorical fields are represented as text (String data type). This is necessary since machine learning algorithms threat categorical and numeric fields differently. Profile Snap also generates a different set of statistics for categorical and numeric fields.

Click here to see the statistics generated by the Profile Snap based on the processed dataset. As you can see, the min and max of all numeric fields are 0 and 1 because of the Scale Snap.

AutoML

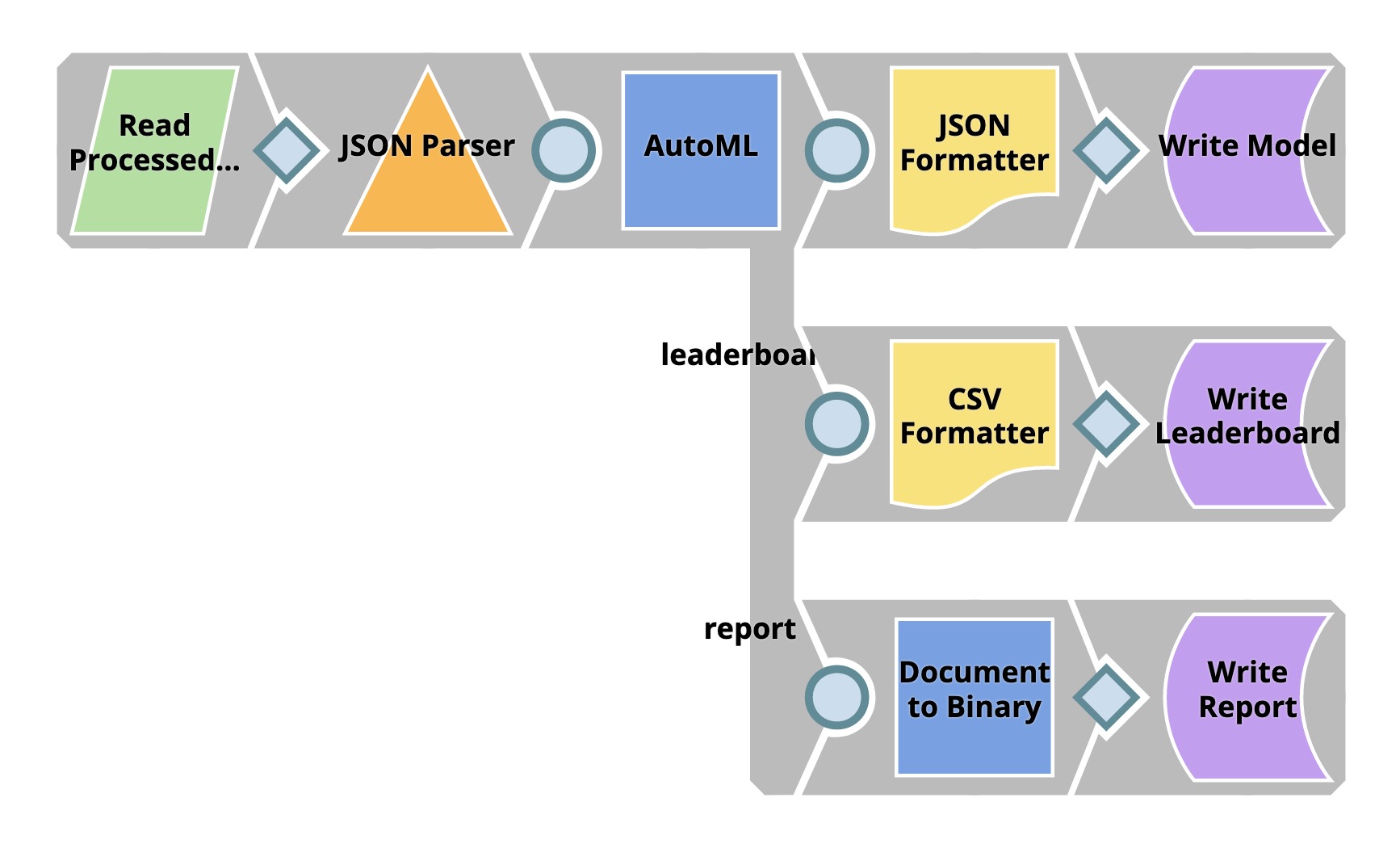

This Pipeline builds several models within the resource limit specified in the AutoML Snap. There are 3 outputs: the model with the best performance, leaderboard, and report.

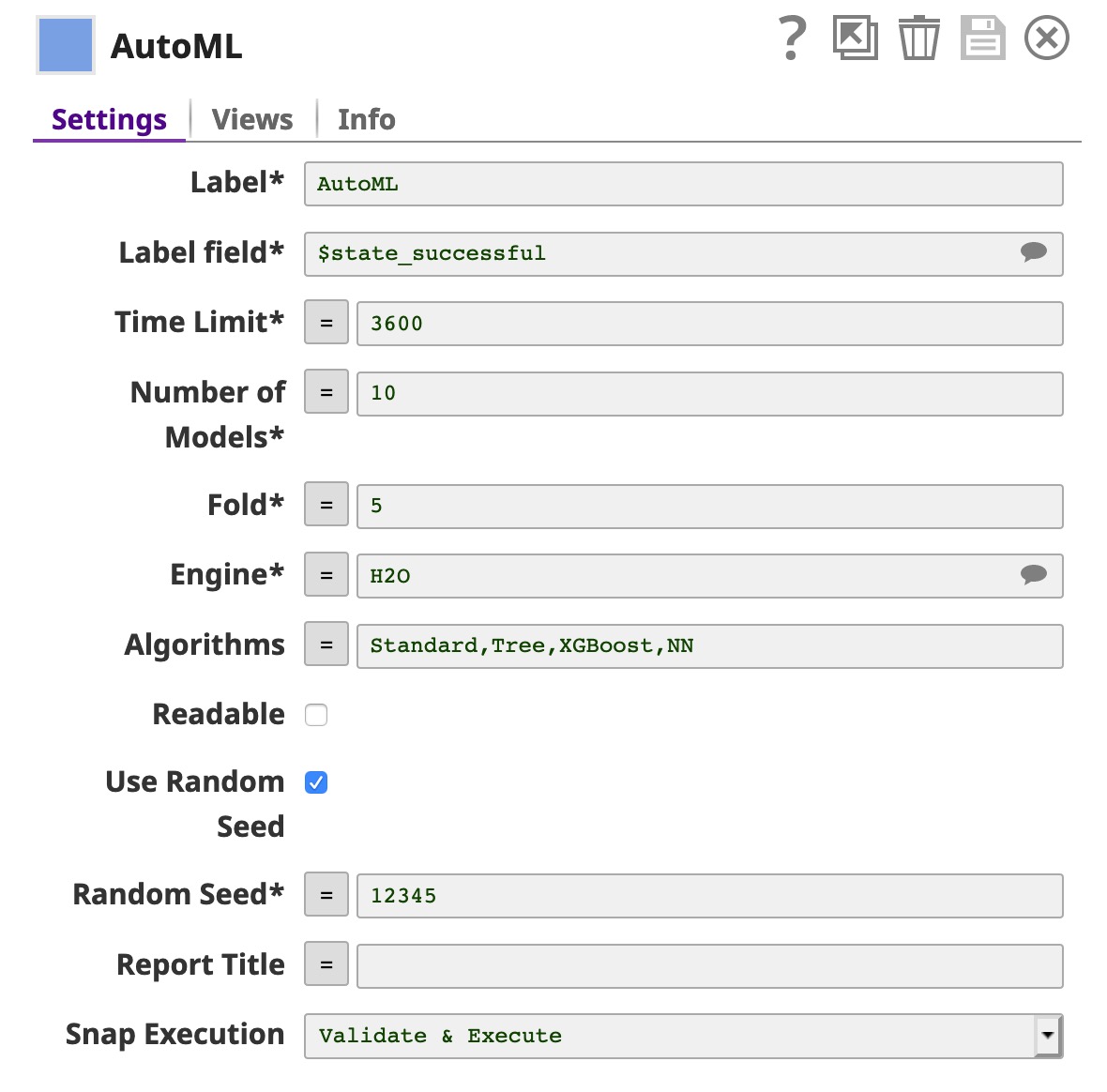

The File Reader Snap reads the processed data which we prepared using the Data Preparation Pipeline. Then, the AutoML Snap builds up to 10 models within the 3,600 seconds time limit. You can increase the number of models and time-limit based on the resources you have. You can also select the engine, this Snap supports Weka engine and H2O engine. Moreover, you can specify the algorithm you want this Snap to try.

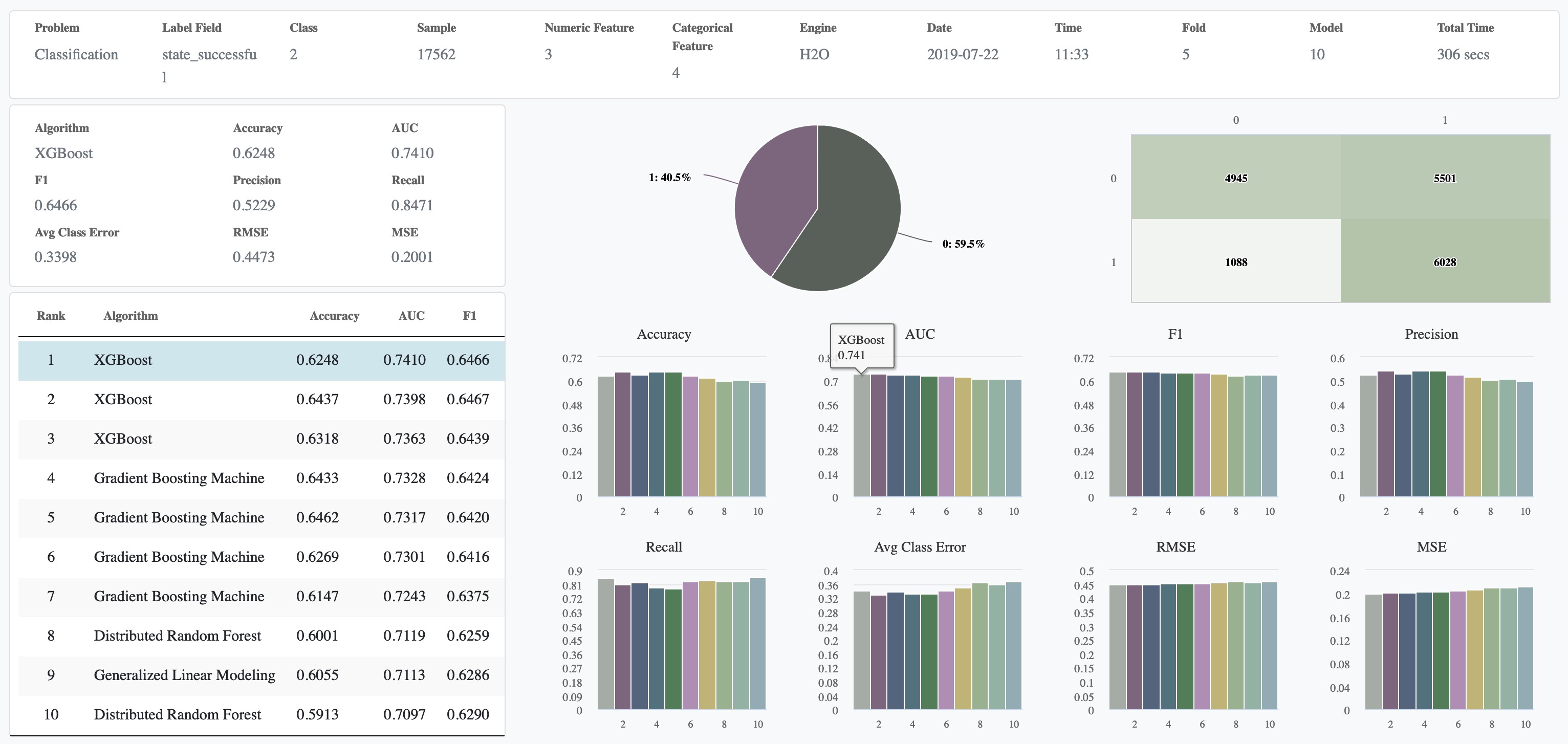

Below is the report from the third output view of the AutoML Snap. As you can see, the XGBoost model performs the best in this dataset with the AUC of 0.74.

Cross Validation

Overall, the AutoML Snap is sufficient; however, if you prefer to specify the algorithm and parameters yourself, you can use the Cross Validator Snaps. This Pipeline performs 10-fold cross validation with various classification algorithms. Cross validation is a technique to evaluate how well a specific machine learning algorithm performs on a dataset. To keep things simple, we will use accuracy to indicate the quality of the predictions. The baseline accuracy is 50% because we have two possible labels: successful (1) and failed (0). However, based on the profile of our dataset, there are 7116 successful projects and 10446 failed projects. The baseline accuracy should be 10446 / (10446 + 7116) = 59.5% in case we predict that all projects will fail.

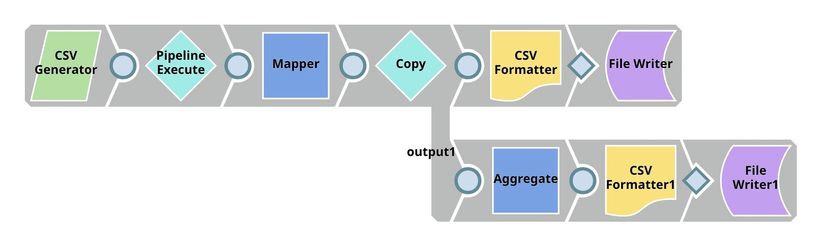

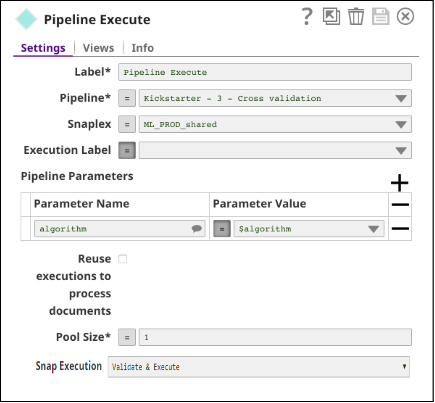

We have 2 Pipelines: parent and child. The parent Pipeline uses Pipeline Execute Snap to execute child Pipeline with different parameters. The parameter is the algorithm name which will be used by child Pipeline to perform 10-fold cross validation. The overall accuracy and other statistics will be sent back to the parent Pipeline which will write the results out to SLFS and also use Aggregate Snap to find the best accuracy.

Child Pipeline

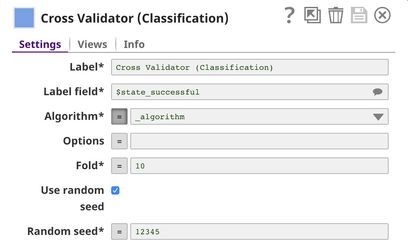

The File Reader Snap reads the processed dataset generated by the data preparation Pipeline and feeds into the Cross Validator (Classification) Snap. The output of this Pipeline is sent to the parent Pipeline.

In Cross Validator (Classification) Snap, Label field is set to $state_successful which is the one we want to predict. Normally, you can select Algorithm from the list. However, we will automate trying multiple algorithms with Pipeline Execute Snap so we set the Algorithm to the Pipeline parameter.

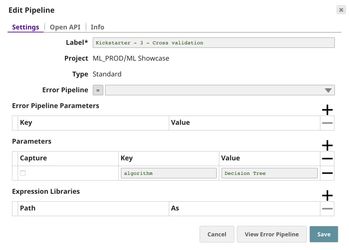

Parent Pipeline

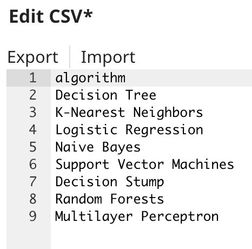

Below is the content of CSV Generator Snap. It contains a list of algorithms we want to try.

The $algorithm from CSV Generator will be passed into the child Pipeline as Pipeline parameter. For each algorithm, a child Pipeline instance will be spawned and executed. You can execute multiple child Pipelines at the same time by adjusting Pool Size. The output of the child Pipeline will be the output of this Snap. Moreover, the input document of the Pipeline Execute Snap will be added to the output as $original.

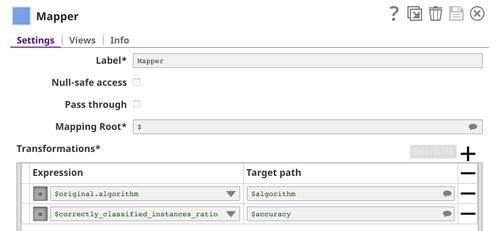

The Mapper Snap is used to extract the algorithm name and accuracy from the output of the Pipeline Execute Snap.

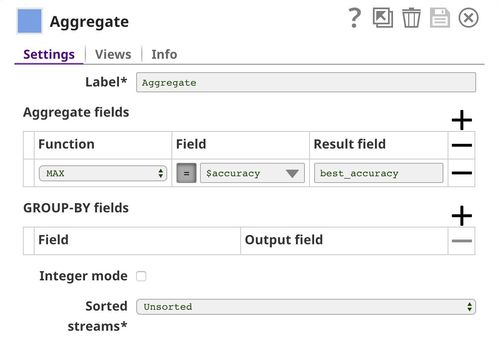

The Aggregate Snap is used to find the best accuracy among all algorithms.

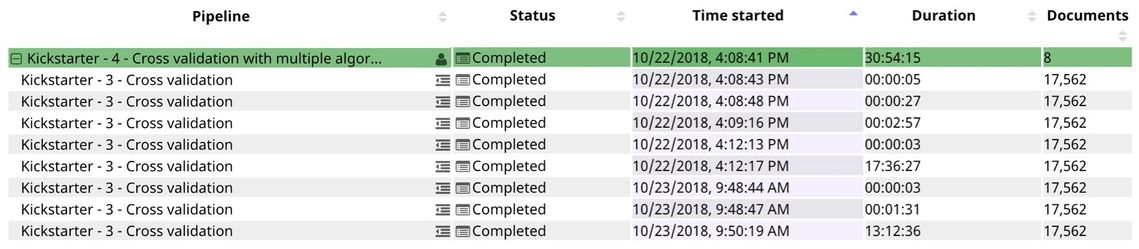

As you can see in the runtime below, 8 child Pipeline instances were created and executed. The 5th algorithm (Support Vector Machines) took over 17 hours to run. The last algorithm (Multilayer Perceptron) also took a long time. The rest of the algorithms can be completed within seconds or minutes. The duration depends on the number of factors. Some of them are the data type, number of unique values, and distribution.

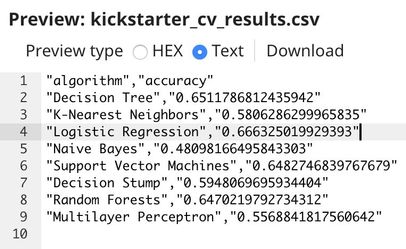

Below is the result. The logistic regression performs the best on this dataset at 66.6% accuracy. This is better than the baseline at 59.5%. However, it may not be practical to use. We may be able to do better than this by gathering more data about the project or improving the algorithm.

Downloads

Important steps to successfully reuse Pipelines

- Download and import the pipeline into the SnapLogic application.

- Configure Snap accounts as applicable.

- Provide pipeline parameters as applicable.

Have feedback? Email documentation@snaplogic.com | Ask a question in the SnapLogic Community

© 2017-2025 SnapLogic, Inc.